Best AI Model for OCR in 2026: Frontier LLMs vs Specialized Vision Models

TL;DR — For OCR in 2026, no single model wins everything. Gemini 3.1 Pro is the best frontier LLM for volume document processing (1M context, lowest cost). Claude Opus 4.6 leads on complex structured extraction where accuracy matters more than throughput. Specialized models like GLM-OCR and PaddleOCR-VL beat all frontier LLMs on raw OCR benchmarks. The practical move: test multiple models on your actual documents through a unified API like ofox.ai before committing.

OCR Stopped Being a Solved Problem

For years, OCR was considered boring infrastructure. Tesseract handled most things, Google Cloud Vision covered the rest, and nobody thought much about it.

Then vision-language models showed up and changed the question. OCR is no longer just about reading text from images. The real challenge now is understanding documents — extracting tables with correct cell relationships, parsing handwritten notes alongside printed text, pulling structured data from invoices that all look different, and making sense of charts embedded in financial reports.

That shift turned OCR from a commodity feature into a genuine model selection problem. The model you pick determines not just whether you can read the text, but whether you can do anything useful with it.

The Benchmark Picture

OmniDocBench has become the standard benchmark for document AI in 2026, covering text extraction, table parsing, formula recognition, and complex layout understanding. Here are the current scores:

| Model | OmniDocBench Score | Type | Context Window (tokens) |
|---|---|---|---|
| GLM-OCR | 94.62 | Specialized (0.9B) | not listed |
| PaddleOCR-VL | 94.50 | Specialized (open source) | not listed |
| Gemini 3.1 Pro | ~90.3 | Frontier LLM | 1,000,000 |
| GPT-5.4 | ~85.8 | Frontier LLM | 1,050,000 |
| Claude Opus 4.6 | not reported | Frontier LLM | 200,000 |

Scores are from the OmniDocBench V1.5 leaderboard as of April 2026. Claude Opus 4.6 has no publicly reported score on this benchmark.

The obvious takeaway: specialized models crush frontier LLMs on pure document parsing. GLM-OCR, a 0.9-billion-parameter model, outscores Gemini 3.1 Pro by over 4 points. Focus wins over generality when the task is narrow.

Among frontier LLMs, the gap is real too: Gemini 3.1 Pro leads GPT-5.4 by about 4.5 points, which likely reflects Google's native multimodal training paying off on vision tasks.

These are benchmark numbers, though. Production OCR throws messier inputs at you, such as ambiguous layouts and coffee-stained receipts, and often requires downstream reasoning that benchmarks don't capture. Frontier models earn their cost on those edges.

Gemini 3.1 Pro: The Volume Play

If your OCR workload involves processing thousands of documents daily, Gemini 3.1 Pro is the frontrunner among general-purpose models.

The 1M-token context window matters most here. A typical scanned page consumes around 700-1,500 tokens as image input, so a single API call can hold hundreds of pages, and you can ask the model to extract, cross-reference, and summarize across all of them. By the same arithmetic, a 200K-context model tops out at roughly 130-285 pages per call: enough for most contracts, but a hard ceiling for large batches and long filings.
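To check the page math, here is a rough capacity estimate in Python. It assumes the 700-1,500 tokens-per-page range quoted above and, by default, ignores the tokens your prompt and the model's answer consume; real per-page counts depend on image resolution and each provider's tokenization.

```python
# Rough capacity check: how many scanned pages fit in one request?
# Uses the 700-1,500 image-tokens-per-page range quoted in the article;
# actual counts vary with resolution and provider-specific image tokenization.
PAGE_TOKENS_LOW = 700
PAGE_TOKENS_HIGH = 1_500

def pages_per_call(context_window: int, tokens_per_page: int, reserved: int = 0) -> int:
    """Whole pages that fit after reserving tokens for the prompt and the output."""
    usable = max(context_window - reserved, 0)
    return usable // tokens_per_page

for label, window in [("1M context", 1_000_000), ("200K context", 200_000)]:
    fewest = pages_per_call(window, PAGE_TOKENS_HIGH)
    most = pages_per_call(window, PAGE_TOKENS_LOW)
    print(f"{label}: {fewest}-{most} pages")
```

This prints 666-1428 pages for a 1M window and 133-285 pages for 200K. Reserving room for instructions and output via the `reserved` argument lowers both figures, so treat them as upper bounds.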

Google has also been aggressive on pricing. At roughly $1.25 per million input tokens through ofox.ai, a 20-page document (about 14,000-30,000 image tokens at 700-1,500 tokens per page) costs roughly 2-4 cents in input. For comparison, the same pages through Claude Opus 4.6 cost 12x more per input token. When you're processing invoices, receipts, or compliance documents at enterprise scale, that multiplier adds up fast.
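A back-of-envelope version of that comparison, as a Python sketch. The $1.25-per-million price and the 12x multiplier are the figures quoted in this article, not values pulled from a live price list, and output tokens are ignored.

```python
# Back-of-envelope input-token cost for one multi-page document.
# Prices are the figures quoted in the article, not a live price list;
# output tokens and any per-request fees are ignored.
GEMINI_INPUT_PER_M = 1.25  # USD per 1M input tokens, as quoted
OPUS_MULTIPLIER = 12       # Opus 4.6 vs Gemini 3.1 Pro per input token, as quoted

def input_cost_usd(pages: int, tokens_per_page: int, price_per_million: float) -> float:
    """Input cost in USD: total image tokens times the per-token price."""
    return pages * tokens_per_page * price_per_million / 1_000_000

for tokens_per_page in (700, 1_500):
    gemini = input_cost_usd(20, tokens_per_page, GEMINI_INPUT_PER_M)
    opus = input_cost_usd(20, tokens_per_page, GEMINI_INPUT_PER_M * OPUS_MULTIPLIER)
    print(f"{tokens_per_page} tokens/page: Gemini ${gemini:.4f}, Opus ${opus:.4f}")
```

Scaling by volume shows why the multiplier matters: at 10,000 such documents a day, the same arithmetic gives about $175-$375 for Gemini versus $2,100-$4,500 for Opus, before output tokens.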

Where Gemini leads:

- Batch processing of multi-page scanned documents

- Mixed-layout pages (tables + text + images on the same page)

- High-resolution image understanding with tunable visual token usage

- Handwriting recognition — noticeably better than GPT-5.4 on cursive

Where it falls short:

- Complex nested table extraction (Claude handles these more reliably)

- Sticking to highly specific output format instructions when the schema or document gets complex

- Nuanced reasoning about ambiguous document content

For a deeper look at Gemini 3.1 Pro’s full capabilities and pricing, see our complete Gemini 3.1 Pro API guide.

Claude Opus 4.6: The Precision Instrument

Claude approaches OCR differently than Gemini. It’s not the fastest or cheapest option for bulk extraction. What it does better than anything else on the market is understand documents — not just read them.

Picture a messy insurance claim form: handwritten annotations over printed fields, a stapled receipt, a signature block. Ask Claude to return clean JSON with every field labeled. The output consistency across hundreds of such documents is where Claude separates from the pack.

This has less to do with raw OCR scores and more with instruction-following under complexity. The same capability that makes Claude the go-to model for coding and reasoning translates directly to structured document extraction.
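One way to turn "output consistency" into a number is to validate every response against the schema you asked for and track the pass rate. A minimal Python sketch; the claim-form fields are hypothetical, and the check covers only field presence and type, not whether the extracted values are correct.

```python
import json

# Hypothetical schema for the insurance-claim example: field -> allowed type(s).
CLAIM_SCHEMA = {
    "claim_number": str,
    "claimant_name": str,
    "incident_date": str,
    "total_amount": (int, float),
    "signature_present": bool,
}

def validate_extraction(raw: str, schema: dict) -> list[str]:
    """List the problems in one model response; an empty list means it conforms."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    problems = []
    for field, expected in schema.items():
        if field not in data:
            problems.append(f"missing field: {field}")
            continue
        value = data[field]
        # bool is a subclass of int in Python, so reject it unless bool is expected.
        bool_mismatch = isinstance(value, bool) and expected is not bool
        if bool_mismatch or not isinstance(value, expected):
            problems.append(f"wrong type for {field}: {type(value).__name__}")
    for field in sorted(data.keys() - schema.keys()):
        problems.append(f"unexpected field: {field}")
    return problems

def conformance_rate(responses: list[str], schema: dict) -> float:
    """Share of responses with no schema problems: a simple consistency metric."""
    if not responses:
        return 0.0
    passing = sum(1 for raw in responses if not validate_extraction(raw, schema))
    return passing / len(responses)
```

Running this over a few hundred responses per model gives a comparable consistency score, though a response can pass the schema and still contain a misread value.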

Where Claude leads:

- Complex structured extraction (nested tables, multi-level forms)

- Documents requiring both extraction and reasoning (legal contracts, medical records)

- Following detailed output schemas consistently

- Handling ambiguous or low-quality scans where context clues matter

Where it falls short:

- Cost per page is substantially higher than alternatives

- 200K context window limits batch processing of long documents

- Not ideal for simple text extraction where a cheaper model handles the job

The premium makes sense in specific scenarios: legal discovery where missing a clause costs real money, healthcare data extraction where accuracy has compliance implications, or financial document processing where table structures contain the actual meaning.

GPT-5.4: The Middle Ground

GPT-5.4 sits between Gemini and Claude on most OCR dimensions. Not the cheapest, not the most accurate on benchmarks, but the most versatile.

GPT-5.4 has the widest tooling ecosystem. If your OCR pipeline feeds extracted data into function calls, triggers downstream actions, or plugs into existing OpenAI-based workflows, GPT is the simplest integration. JSON mode works reliably, and building extraction pipelines with consistent output schemas is straightforward.
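For the structured-output point, OpenAI's Chat Completions API accepts a `response_format` of type `json_schema` with `strict` enabled, which constrains replies to the supplied schema. The sketch below only builds the request body; the invoice schema is hypothetical, and whether a given gateway forwards `response_format` unchanged for every model is an assumption to verify.

```python
import copy

# Hypothetical invoice schema. Strict mode expects every property to appear
# in "required" and "additionalProperties" to be false.
INVOICE_SCHEMA = {
    "type": "object",
    "properties": {
        "invoice_number": {"type": "string"},
        "vendor": {"type": "string"},
        "total": {"type": "number"},
    },
    "required": ["invoice_number", "vendor", "total"],
    "additionalProperties": False,
}

def with_json_schema(body: dict, name: str, schema: dict) -> dict:
    """Return a copy of a Chat Completions body that requests schema-conforming JSON."""
    request = copy.deepcopy(body)
    request["response_format"] = {
        "type": "json_schema",
        "json_schema": {"name": name, "strict": True, "schema": schema},
    }
    return request

base_body = {
    "model": "gpt-5.4",
    "messages": [{"role": "user", "content": "Extract the invoice fields as JSON."}],
}
request_body = with_json_schema(base_body, "invoice", INVOICE_SCHEMA)
```

Copying the body rather than mutating it lets the same base request be reused across models that may not support strict schemas.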

The 1M-token context window (on par with Gemini's) means batch processing isn't an issue. And GPT-5.4's general reasoning quality is strong enough that it handles most document understanding tasks competently, even if it's rarely the absolute best at any single one.

Where GPT-5.4 fits:

- Mixed workloads where OCR is one step in a larger pipeline

- Teams already invested in the OpenAI ecosystem

- Applications needing fast structured output with tool calling

- General-purpose document understanding without extreme accuracy requirements

When Specialized OCR Models Win

On pure document parsing, purpose-built models win. No way around it.

GLM-OCR (0.9B parameters) tops OmniDocBench at 94.62, beating every frontier model with a reported score by more than 4 points. It handles formulas, tables, and complex layouts with an accuracy that comes from being trained specifically for document understanding. The 0.9B parameter count means it runs on modest hardware.
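To put "modest hardware" in numbers: weight memory is roughly parameter count times bytes per parameter. This sketch assumes common storage precisions rather than whatever format GLM-OCR actually ships in, and it counts weights only; activations, caches, and runtime overhead come on top.

```python
# Weights-only memory estimate: parameters x bytes per parameter.
# The precisions listed are common options, not GLM-OCR's confirmed format.
PARAMS = 0.9e9  # 0.9B parameters, as reported in the article

BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "int8": 1}

def weight_memory_gb(params: float, dtype: str) -> float:
    """Approximate weight footprint in gigabytes (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[dtype] / 1e9

for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: ~{weight_memory_gb(PARAMS, dtype):.1f} GB")
```

Even at fp32 that is about 3.6 GB of weights (1.8 GB at bf16), which is within reach of many consumer GPUs.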

PaddleOCR-VL scores 94.50 and is fully open source. A recent analysis found that self-hosted VLM-based OCR pipelines are roughly 167x cheaper per page than commercial vision API calls. For teams processing millions of pages monthly, that’s the difference between a manageable infrastructure cost and a staggering API bill.

Mistral OCR is Mistral's dedicated document AI model, targeting enterprise document processing with fast inference and strong multilingual support.

The split is simple. Need to read documents? Specialized models give you the best accuracy at the lowest cost. Need to read and then reason about what’s in them? Frontier LLMs justify the premium by combining extraction with understanding.

Most production pipelines end up using both: a specialized model for bulk extraction, with a frontier LLM handling documents that need deeper analysis. API gateway platforms make that kind of routing easy to set up.
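The two-tier pattern can be sketched as a simple routing rule. Everything here is hypothetical: the deployment names, the upstream `needs_reasoning` flag, and the assumption that the specialized model exposes a confidence score you can threshold. Substitute whatever signals your pipeline actually has.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; replace with your own deployments.
SPECIALIZED_MODEL = "glm-ocr-self-hosted"
FRONTIER_MODEL = "claude-opus-4-6"

@dataclass
class Document:
    doc_id: str
    needs_reasoning: bool   # e.g. flagged upstream as a contract or medical record
    ocr_confidence: float   # assumed 0-1 score from the specialized first pass

def route(doc: Document, min_confidence: float = 0.9) -> str:
    """Keep routine, confidently read documents on the cheap model; escalate the rest."""
    if doc.needs_reasoning or doc.ocr_confidence < min_confidence:
        return FRONTIER_MODEL
    return SPECIALIZED_MODEL

docs = [
    Document("receipt-001", needs_reasoning=False, ocr_confidence=0.97),
    Document("contract-042", needs_reasoning=True, ocr_confidence=0.99),
    Document("fax-scan-7", needs_reasoning=False, ocr_confidence=0.62),
]
for d in docs:
    print(d.doc_id, "->", route(d))
```

Escalating on low confidence as well as document type means a blurry scan still gets a second look; the threshold is a knob to tune on your own samples.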

Choosing by Use Case

It depends on what you’re processing. Here’s a cheat sheet:

| Use Case | Recommended Model | Why |
|---|---|---|
| Invoice processing at scale | Gemini 3.1 Pro | Best cost-to-accuracy ratio, handles varied layouts |
| Legal contract extraction | Claude Opus 4.6 | Highest accuracy on complex nested structures |
| Receipt scanning (mobile app) | Gemini 3.1 Flash | Fast, cheap, good enough for clean printed text |
| Medical records digitization | Claude Opus 4.6 | Precision matters, compliance requirements |
| Academic paper parsing | GLM-OCR (self-hosted) | Best on formulas and mixed scientific layouts |
| Multilingual document processing | Gemini 3.1 Pro | Strong cross-language support, large context |
| General-purpose with downstream automation | GPT-5.4 | Best tool calling and structured output pipeline |
| Archival handwriting digitization | Gemini 3.1 Pro | Strongest handwriting recognition among frontier LLMs |

| Archival handwriting digitization | Gemini 3.1 Pro | Strongest handwriting recognition among frontier LLMs |

For many teams, the right answer isn’t a single model — it’s testing two or three on representative samples of their actual documents. A model that scores 94 on benchmarks might score 80 on your specific invoice format if the layout happens to hit a blind spot.
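Testing on representative samples works best with a small hand-labeled set and a per-field score. A minimal Python sketch, assuming each model's output has already been parsed into a dict of field values; the model names and sample data are placeholders, and exact matching after trimming and lowercasing is a deliberately strict choice that numeric fields may not deserve.

```python
def _norm(value):
    """Trim and lowercase strings so 'Acme ' and 'acme' compare equal."""
    return value.strip().lower() if isinstance(value, str) else value

def field_accuracy(predicted: dict, truth: dict) -> float:
    """Fraction of ground-truth fields the model reproduced exactly."""
    if not truth:
        raise ValueError("ground truth must contain at least one field")
    hits = sum(1 for key, expected in truth.items() if _norm(predicted.get(key)) == _norm(expected))
    return hits / len(truth)

def rank_models(results: dict[str, list[dict]], truths: list[dict]) -> list[tuple[str, float]]:
    """Average field accuracy per model across the labeled sample set, best first."""
    if not truths:
        raise ValueError("need at least one labeled document")
    scores = {}
    for model, predictions in results.items():
        if len(predictions) != len(truths):
            raise ValueError(f"{model}: expected {len(truths)} outputs, got {len(predictions)}")
        per_doc = [field_accuracy(p, t) for p, t in zip(predictions, truths)]
        scores[model] = sum(per_doc) / len(per_doc)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Placeholder labels and outputs for two documents.
truths = [{"total": "12.50", "vendor": "Acme"}, {"total": "3.00", "vendor": "Beta"}]
results = {
    "model-a": [{"total": "12.50", "vendor": "acme "}, {"total": "3.00", "vendor": "Beta"}],
    "model-b": [{"total": "12.5", "vendor": "Acme"}, {}],
}
print(rank_models(results, truths))
```

Here `model-b` loses the first total because "12.5" does not exactly match "12.50", which is the kind of format blind spot a benchmark score will not reveal.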

Testing Multiple Models Without the Setup Tax

The fastest way to compare: one API endpoint that gives you access to all models.

ofox.ai exposes Gemini 3.1 Pro, Claude Opus 4.6, GPT-5.4, and 100+ other models through one OpenAI-compatible API. Send the same document image to three models by changing one parameter. No separate accounts, no separate SDKs, no billing fragmentation.

A quick comparison setup looks like this:

```yaml
base_url: https://api.ofox.ai/v1
models: ["gemini-3.1-pro", "claude-opus-4-6", "gpt-5.4"]
```

Run the same extraction prompt against each model, compare the outputs, and pick the winner for your specific document type. The pay-as-you-go pricing means you're only charged for what you actually use during testing, with no commitments and no minimums.
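A minimal Python sketch of that comparison loop, using only the standard library. It assumes the gateway serves the usual OpenAI-style `/chat/completions` route under the base URL above, accepts `image_url` content parts for all three models, and that the page is a PNG; the `OFOX_API_KEY` environment variable name is hypothetical. Check the provider's documentation before relying on any of these.

```python
import base64
import json
import os
import urllib.request

BASE_URL = "https://api.ofox.ai/v1"
MODELS = ["gemini-3.1-pro", "claude-opus-4-6", "gpt-5.4"]

def build_request(model: str, prompt: str, image_bytes: bytes, api_key: str) -> urllib.request.Request:
    """POST body for an OpenAI-compatible chat completion with one inline PNG image."""
    data_url = "data:image/png;base64," + base64.b64encode(image_bytes).decode("ascii")
    body = {
        "model": model,
        "messages": [{"role": "user", "content": [
            {"type": "text", "text": prompt},
            {"type": "image_url", "image_url": {"url": data_url}},
        ]}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )

def compare(prompt: str, image_path: str) -> dict[str, str]:
    """Send the same prompt and page image to every model; return each text reply."""
    api_key = os.environ["OFOX_API_KEY"]  # hypothetical variable name
    with open(image_path, "rb") as f:
        image_bytes = f.read()
    replies = {}
    for model in MODELS:
        req = build_request(model, prompt, image_bytes, api_key)
        # urlopen raises HTTPError on non-2xx responses; add retries as needed.
        with urllib.request.urlopen(req, timeout=120) as resp:
            payload = json.load(resp)
        replies[model] = payload["choices"][0]["message"]["content"]
    return replies
```

Because only the `model` field changes between requests, swapping a candidate in or out is a one-line edit to `MODELS`.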

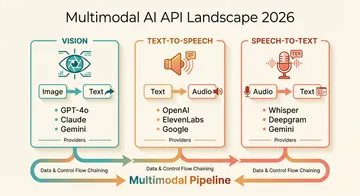

For a broader look at how models compare beyond OCR, our 2026 AI model comparison guide covers reasoning, coding, and writing quality across the same frontier models. And if you’re also processing audio or generating speech alongside document work, the multimodal API guide covers the full stack.

Where This Is Heading

Specialized models are starting to add reasoning on top of extraction. GLM-OCR already does basic document understanding alongside its OCR work. As these models mature, the cost gap with frontier LLMs gets harder to justify for most workflows.

On the other side, frontier LLMs are getting smarter about documents. Gemini's tunable visual token feature lets you dial accuracy against cost per page. Claude and GPT will likely follow.

The production pattern settling in for 2026: route simple extraction to cheap models, escalate complex documents to frontier LLMs. API aggregation platforms handle the routing.

Whatever model works best for your documents today will probably be second-best in six months. Build on a flexible API layer and you won’t have to rewrite your pipeline every time the leaderboard shifts.