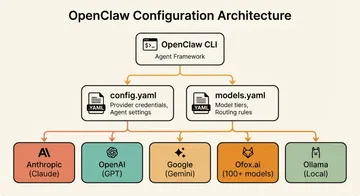

Cherry Studio Custom API Setup: Connect GPT, Claude & Gemini Through One Provider

Summary

Cherry Studio is a cross-platform AI desktop client that supports multi-model conversations, knowledge bases, and workflows. By connecting it to OfoxAI as a custom API provider, you get access to GPT, Claude, Gemini, Qwen, and dozens of other models from a single interface with one API key. This guide covers both the OpenAI-compatible and Anthropic protocol setups, multi-model comparison, knowledge base configuration with RAG, and troubleshooting.

For the official integration reference, see the OfoxAI Cherry Studio documentation.

What Is Cherry Studio

Cherry Studio is an open-source AI chat client built for users who want control over which models they use and how they access them. It supports custom API endpoints, multi-model conversations, a built-in knowledge base with RAG, and local data storage across Windows, macOS, and Linux.

Key features relevant to this guide:

- Custom API providers with OpenAI-compatible and Anthropic protocol support

- Multi-model comparison to evaluate different models side by side

- Knowledge base with document parsing and embeddings-based retrieval

- All data stored locally on your machine

Why Use a Custom API Provider

Cherry Studio can connect directly to individual providers, but using a custom API provider like OfoxAI has practical advantages:

Access more models with one API key. Instead of managing separate accounts with OpenAI, Anthropic, and Google, you get access to GPT, Claude, Gemini, Qwen, and other models through a single endpoint.

Unified billing. One invoice, one usage dashboard, one balance to track.

Consistent interface. All models follow the same API format, so switching between them requires no configuration changes beyond selecting a different model ID.

Download and Install

Go to the Cherry Studio website to download the client for your platform (Windows, macOS, or Linux).

OpenAI-Compatible Protocol Setup

The OpenAI-compatible protocol is the most versatile option: it works with every model OfoxAI offers, so start here unless you need Anthropic-specific features.

Step 1: Open Provider Settings

Launch Cherry Studio and navigate to Settings > Model Service. Click Add to create a new provider.

Step 2: Enter API Configuration

Fill in the following fields:

| Setting | Value |
|---|---|
| Name | OfoxAI (OpenAI) |
| API Type | OpenAI |
| API URL | https://api.ofox.ai/v1 |
| API Key | Your OfoxAI API Key |

Step 3: Add Models Manually

Cherry Studio requires manually adding model names. It does not auto-load the model list from custom providers. Add the model IDs you want to use:

openai/gpt-4o

openai/gpt-4o-mini

anthropic/claude-sonnet-4.6

anthropic/claude-opus-4.6

google/gemini-3.1-flash-lite-preview

google/gemini-3.1-pro-preview

qwen/qwen-max

The model ID format is provider/model-name. You can add more models from the Model Catalog at any time.
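The provider/model-name convention can be checked before pasting an ID into Cherry Studio. The sketch below is only an illustration: `ModelId` and `parse_model_id` are hypothetical helper names, not part of Cherry Studio or OfoxAI, and the only rule it assumes is "split at the first slash".

```python
from typing import NamedTuple


class ModelId(NamedTuple):
    provider: str
    name: str


def parse_model_id(model_id: str) -> ModelId:
    """Split 'provider/model-name' at the first slash and reject malformed IDs."""
    provider, sep, name = model_id.strip().partition("/")
    if not sep or not provider or not name:
        raise ValueError(f"expected 'provider/model-name', got {model_id!r}")
    return ModelId(provider, name)


# IDs taken from the list above
for raw in ["openai/gpt-4o", "anthropic/claude-sonnet-4.6", "qwen/qwen-max"]:
    parsed = parse_model_id(raw)
    print(parsed.provider, "->", parsed.name)
```

An ID without the provider prefix, such as `gpt-4o`, raises `ValueError` here, which mirrors the most likely typo when adding models by hand.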

Step 4: Test the Connection

Toggle the provider on and send a test message. If you receive a response, the setup is working.
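If the test message fails, it can help to try the same endpoint outside Cherry Studio. The following is a minimal sketch, assuming the gateway exposes the standard OpenAI-style `/chat/completions` path under the API URL from Step 2 and accepts a Bearer token; the function names are illustrative, and the response shape read in `send` is the usual OpenAI one, not something this guide confirms.

```python
import json
import urllib.request

BASE_URL = "https://api.ofox.ai/v1"  # API URL from Step 2


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",  # assumed path, per the OpenAI convention
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first reply (assumes an OpenAI-style response)."""
    with urllib.request.urlopen(request, timeout=30) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]
```

Calling `send(build_chat_request(your_key, "openai/gpt-4o-mini", "Say hello."))` should return a short reply if the key and URL are valid; an HTTP error at this stage points to the key or URL rather than to Cherry Studio.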

Anthropic Protocol Setup

If you primarily use Claude models and want access to Anthropic-specific features, Cherry Studio also supports the native Anthropic protocol. This is the better choice when you need features like cache_control (for prompt caching) and extended thinking (for complex reasoning tasks).

Step 1: Add Anthropic Provider

In Settings > Model Service, click Add to create a new provider and select the Anthropic API type.

Step 2: Enter API Configuration

| Setting | Value |
|---|---|
| Name | OfoxAI (Anthropic) |
| API Type | Anthropic |
| API URL | https://api.ofox.ai/anthropic |
| API Key | Your OfoxAI API Key |

Step 3: Add Claude Models

Add the Claude model IDs:

anthropic/claude-opus-4.6

anthropic/claude-sonnet-4.6

anthropic/claude-haiku-4.5

Step 4: Enable and Test

Toggle the provider on and send a test message. Anthropic protocol features like cache_control and extended thinking work natively through this configuration.
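To make the two features concrete, here is a sketch of a request body that uses both. The field shapes follow Anthropic's public Messages API (`cache_control` on a system block, a `thinking` object with `budget_tokens`); that the OfoxAI endpoint accepts exactly this body is an assumption, and `build_messages_payload` is a hypothetical helper name.

```python
def build_messages_payload(
    model: str,
    system_text: str,
    user_text: str,
    max_tokens: int = 4096,
    thinking_budget: int = 2048,
) -> dict:
    """Build an Anthropic-style Messages body with prompt caching and extended thinking."""
    # Anthropic requires a thinking budget of at least 1024 tokens, below max_tokens.
    if thinking_budget < 1024 or thinking_budget >= max_tokens:
        raise ValueError("thinking_budget must be >= 1024 and < max_tokens")
    return {
        "model": model,
        "max_tokens": max_tokens,
        # Mark the (typically long, reused) system prompt as cacheable.
        "system": [
            {
                "type": "text",
                "text": system_text,
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # Ask the model to reason before answering, within the given budget.
        "thinking": {"type": "enabled", "budget_tokens": thinking_budget},
        "messages": [{"role": "user", "content": user_text}],
    }


payload = build_messages_payload(
    "anthropic/claude-sonnet-4.6",
    "You are a careful reviewer.",
    "Summarize the trade-offs of prompt caching.",
)
print(sorted(payload))
```

Cherry Studio builds requests like this for you; the sketch only shows what "native" support means at the protocol level.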

Multi-Model Comparison

One of Cherry Studio’s standout features is comparing multiple models side by side. This is valuable for evaluating response quality across different model families, cross-checking factual claims, or finding the best model for a specific task.

To use it:

- Select multiple models in the chat interface

- Send your question

- View and compare responses from each model

A practical workflow: configure both the OpenAI-compatible provider (for GPT and Gemini models) and the Anthropic provider (for Claude models), then compare responses across all three families in one conversation.
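Conceptually, side-by-side comparison is a fan-out: the same prompt goes to each selected model and the replies are collected per model. The sketch below illustrates that idea only; it is not how Cherry Studio implements the feature. `compare_models` and `fake_ask` are hypothetical names, and `fake_ask` stands in for a real API call.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def compare_models(
    prompt: str, models: List[str], ask: Callable[[str, str], str]
) -> Dict[str, str]:
    """Send one prompt to several models in parallel; return replies keyed by model ID."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        futures = {model: pool.submit(ask, model, prompt) for model in models}
        return {model: future.result() for model, future in futures.items()}


def fake_ask(model: str, prompt: str) -> str:
    """Placeholder for a real request; echoes the prompt so the sketch runs offline."""
    return f"[{model}] echo: {prompt}"


replies = compare_models(
    "Explain RAG in one sentence.",
    ["openai/gpt-4o", "anthropic/claude-sonnet-4.6", "google/gemini-3.1-pro-preview"],
    fake_ask,
)
for model, text in replies.items():
    print(model, "=>", text)
```

Passing the request function in as `ask` keeps the fan-out logic independent of which protocol each model uses.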

Knowledge Base and RAG Setup

Cherry Studio includes a built-in knowledge base that uses Retrieval-Augmented Generation (RAG) to let models reference your documents when answering questions. The feature depends on the Embeddings API, so you need an embedding model configured; OfoxAI supports embedding models such as openai/text-embedding-3-small.

Set Up the Knowledge Base

- Create a knowledge base and upload documents

- Enable the knowledge base in conversations

- The model will answer based on your documents, grounding responses in your actual data rather than relying solely on training knowledge
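The retrieval half of RAG can be sketched in a few lines: embed the question, rank stored document chunks by similarity, and hand the best matches to the model as context. The example below uses hand-written three-dimensional vectors in place of real embeddings, and `cosine` and `top_k` are illustrative helpers; Cherry Studio's actual chunking and ranking may differ.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(
    query_vec: Sequence[float], chunks: List[Tuple[str, Sequence[float]]], k: int = 2
) -> List[str]:
    """Return the text of the k chunks most similar to the query vector."""
    ranked = sorted(chunks, key=lambda c: cosine(query_vec, c[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


# Toy vectors standing in for embeddings returned by an embedding model.
chunks = [
    ("Refund policy: 30 days.", [0.9, 0.1, 0.0]),
    ("Office hours: 9 to 5.", [0.0, 0.2, 0.9]),
    ("Returns need a receipt.", [0.8, 0.3, 0.1]),
]
query = [1.0, 0.0, 0.0]  # pretend embedding of "How do refunds work?"
print(top_k(query, chunks))
```

In a real setup the vectors come from the embedding model you configured, which is why the knowledge base cannot index documents without one.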

Recommended Models

| Use Case | Model ID | Protocol | Notes |
|---|---|---|---|
| General chat | openai/gpt-4o | OpenAI | Fast, good all-rounder |
| Complex reasoning | anthropic/claude-opus-4.6 | Anthropic | Deep analysis, extended thinking |
| Coding | anthropic/claude-sonnet-4.6 | Either | Strong at code generation and review |
| Fast responses | google/gemini-3.1-flash-lite-preview | OpenAI | Low latency, cost-effective |
| Chinese language | qwen/qwen-max | OpenAI | Optimized for Chinese content |
| Lightweight tasks | anthropic/claude-haiku-4.5 | Anthropic | Fast and affordable |
| Embeddings (RAG) | openai/text-embedding-3-small | OpenAI | For knowledge base indexing |

Troubleshooting

Model list does not auto-load

Cherry Studio requires manually adding model names. See the Model Catalog for the complete list of model IDs.

Image analysis not available

Make sure you have selected a model that supports vision (e.g., openai/gpt-4o, google/gemini-3.1-flash-lite-preview). Text-only models will reject image inputs.
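For context, this is roughly what an image input looks like on the OpenAI-compatible protocol: the user message carries a list of parts, one of which is an `image_url`. The shape below follows the OpenAI chat format; that the gateway forwards it unchanged to each vision model is an assumption, and `build_vision_message` is a hypothetical helper.

```python
import base64


def build_vision_message(question: str, image_bytes: bytes, mime: str = "image/png") -> dict:
    """Build an OpenAI-style user message containing text plus an inline image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            # Inline the image as a data URL so no public hosting is needed.
            {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
        ],
    }


message = build_vision_message("What is in this picture?", b"\x89PNG placeholder bytes")
print(message["content"][1]["image_url"]["url"][:30])
```

A text-only model has no way to interpret the `image_url` part, which is why the request is rejected instead of silently ignoring the image.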

Connection test fails

- Check the API URL format. For OpenAI protocol: https://api.ofox.ai/v1. For Anthropic protocol: https://api.ofox.ai/anthropic. Do not append extra path segments.

- Verify your API key. Copy it again from your OfoxAI dashboard to rule out extra spaces or missing characters.

- Ensure at least one model is added. Cherry Studio may not complete a connection test without a model configured.
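The three checks above are mechanical enough to script. The sketch below is one way to do that, assuming the two API URLs given in this guide are the only valid ones; `check_provider_config` is a hypothetical helper and does not contact the server.

```python
from typing import List
from urllib.parse import urlparse

# Expected URL path per protocol, taken from the setup tables in this guide.
EXPECTED_PATHS = {"openai": "/v1", "anthropic": "/anthropic"}


def check_provider_config(
    protocol: str, api_url: str, api_key: str, models: List[str]
) -> List[str]:
    """Return a list of human-readable problems; an empty list means nothing obvious is wrong."""
    problems = []
    expected = EXPECTED_PATHS.get(protocol)
    parsed = urlparse(api_url.strip())
    if expected is None:
        problems.append(f"unknown protocol {protocol!r}")
    elif (parsed.scheme, parsed.netloc, parsed.path) != ("https", "api.ofox.ai", expected):
        problems.append(f"API URL should be exactly https://api.ofox.ai{expected}")
    if not api_key or api_key != api_key.strip():
        problems.append("API key is empty or has leading/trailing whitespace")
    if not models:
        problems.append("add at least one model ID")
    return problems


print(check_provider_config("openai", "https://api.ofox.ai/v1", "sk-example", ["openai/gpt-4o"]))
print(check_provider_config("anthropic", "https://api.ofox.ai/anthropic/v1/messages", " key ", []))
```

The comparison is deliberately strict, so a URL with extra path segments or a trailing slash is reported rather than silently accepted.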

Extended thinking not available

Extended thinking is only supported through the Anthropic protocol. If you configured Claude models via the OpenAI-compatible endpoint, switch to the Anthropic provider (https://api.ofox.ai/anthropic) to access this feature.

Wrapping Up

Cherry Studio combined with a custom API provider gives you a single desktop interface for every major AI model. The setup takes about five minutes: add your API URL and key, manually enter the model IDs you want, and start chatting. For heavy Claude users, the Anthropic protocol option adds native support for cache_control and extended thinking.

For the full configuration reference and model list, check the official OfoxAI Cherry Studio integration docs.