Claude 4 vs GPT-5 vs Gemini 3: How to Pick the Right AI Model for Every Task in 2026

TL;DR

There is no single best AI model in 2026. Claude 4 leads in instruction-following and long-form writing. GPT-5 is the fastest for structured output and tool use. Gemini 3 dominates multimodal tasks and offers the largest context windows. The winning strategy is to use all three — routing each request to the model that handles it best. This guide gives you a practical framework to do exactly that.

The Model Landscape Has Changed

A year ago, choosing an AI model was simpler. GPT-4 was the default. Claude was the “writing model.” Gemini was catching up.

In 2026, the gap between the top three providers has narrowed dramatically. Each model family has distinct strengths, but none dominates across all tasks. The developers getting the best results — and the best costs — are the ones mixing and matching models strategically.

This guide offers a framework for doing that. No benchmarks divorced from reality. No hype. Just practical guidance on which model to use for what, based on real production workloads.

The Big Three: Where Each Model Wins

Claude 4 (Anthropic)

Flagship: Claude Opus 4.6 (1M context); Mid-tier: Claude Sonnet 4.6; Budget: Claude Haiku 4.5

What Claude does better than anyone:

- Instruction-following. Give Claude a 2,000-word system prompt with 15 constraints, and it will follow all of them. GPT and Gemini tend to “forget” constraints in complex prompts. This matters enormously for production systems where consistent behavior is non-negotiable.

- Long-form writing quality. Claude produces prose that reads like a human wrote it: varied sentence structure, natural transitions, appropriate tone shifts. Ask GPT to write a 3,000-word article and you’ll get competent but formulaic output. Claude’s writing has texture.

- Large codebase reasoning. Claude Opus with its 1M token context window can hold an entire codebase in memory and reason about cross-file dependencies. Developers using Claude Code (Anthropic’s CLI tool) report it consistently outperforms alternatives at complex refactoring tasks.

- Safety and refusal calibration. Claude is the least likely to hallucinate confidently. When it doesn’t know something, it tends to say so. For medical, legal, or financial applications where wrong answers carry real consequences, this matters.

Where Claude falls short:

- Slower response times on long generations compared to GPT-5.4

- Function calling / tool use works but feels less polished than GPT’s implementation

- Image generation not natively supported (Claude accepts images as input but produces text output only)

GPT-5 (OpenAI)

Flagship: GPT-5.4 Pro; Standard: GPT-5.4; Mid-tier: GPT-5.4 Mini; Budget: GPT-5.4 Nano

What GPT does better than anyone:

- Speed. GPT-5.4 consistently returns responses faster than Claude or Gemini at equivalent quality levels. For real-time applications (chatbots, autocomplete, inline suggestions), this speed advantage compounds into a significantly better user experience.

- Structured output. When you need JSON, function calls, or tool use, GPT-5.4 is the most reliable. Its structured output mode rarely produces malformed responses. If you’re building an AI agent that needs to call APIs reliably, GPT is the safer choice.

- Model tier depth. OpenAI’s lineup from Nano to Pro gives you more granularity for cost optimization. GPT-5.4 Nano at $0.20/M input tokens can handle classification and extraction tasks that would be absurdly expensive with a frontier model.

- Ecosystem integration. More tools, libraries, and tutorials default to OpenAI’s API format. If you’re using LangChain, LlamaIndex, or most agent frameworks, GPT has first-class support everywhere.

Where GPT falls short:

- Tends to be verbose — GPT often adds unnecessary caveats and qualifications

- Instruction-following degrades on very complex, multi-constraint system prompts

- Writing quality is competent but often reads as “AI-generated” to experienced readers

Gemini 3 (Google)

Flagship: Gemini 3.1 Pro; Fast: Gemini 3.1 Flash; Budget: Gemini 3.1 Flash Lite

What Gemini does better than anyone:

- Context window. Gemini 3.1 Pro handles over 1 million tokens natively. While Claude also supports 1M, Gemini’s performance at extreme context lengths (maintaining recall and coherence deep into long documents) is best in class.

- Multimodal understanding. Gemini was built multimodal from the ground up. It processes images, video, and audio alongside text more naturally than competitors. For document understanding (PDFs with charts, screenshots of UIs, photos of whiteboards), Gemini consistently outperforms.

- Image generation. Gemini 3.1 Flash Image Preview can generate and edit images directly within a text conversation. No separate API call to DALL-E needed. The quality has improved dramatically from the Gemini 2 era.

- Price-to-performance ratio. Gemini 3.1 Flash Lite at $0.25/M input tokens delivers surprisingly strong performance on routine tasks. For high-volume applications, this pricing advantage adds up fast.

Where Gemini falls short:

- Instruction-following is less precise than Claude on complex prompts

- Writing style can feel more “encyclopedic” than conversational

- Occasionally hallucinates citations and references with high confidence

Task-by-Task: Which Model to Use

Theory is nice. Here’s what actually matters — which model to choose for specific tasks.

Coding

| Task | Best Model | Why |
|---|---|---|
| Large refactors (100+ files) | Claude Opus 4.6 | Best at maintaining consistency across a huge context |
| Rapid prototyping | GPT-5.4 | Fastest iteration speed, good at generating boilerplate |
| Bug fixing with full codebase context | Claude Opus 4.6 | Superior reasoning over long code contexts |
| Code review | Claude Sonnet 4.6 | Catches subtle issues, explains clearly |
| Generating tests | GPT-5.4 Mini | Good enough quality, much lower cost |
| Simple scripts and utilities | GPT-5.4 Nano | $0.20/M tokens is hard to beat for straightforward tasks |

The pattern: Claude for tasks requiring deep understanding, GPT for speed and volume, cheaper tiers for routine work.

Writing and Content

| Task | Best Model | Why |
|---|---|---|
| Long-form articles | Claude Opus 4.6 | Most natural prose, varied structure |
| Marketing copy | Claude Sonnet 4.6 | Good at matching brand voice and tone |
| Technical documentation | GPT-5.4 | Clear, structured, consistent formatting |
| Summarization | Gemini 3.1 Flash | Fast, accurate, handles long inputs well |
| Translation | GPT-5.4 or Gemini 3.1 Pro | Both strong; Gemini slightly better for non-Latin scripts |
| SEO content | Claude Sonnet 4.6 | Better at keyword integration without sounding forced |

The pattern: Claude for quality, GPT for structure, Gemini for volume processing.

Data and Analysis

| Task | Best Model | Why |
|---|---|---|
| Analyzing large documents (100K+ tokens) | Gemini 3.1 Pro | Best recall at extreme context lengths |
| Structured data extraction | GPT-5.4 | Most reliable JSON output |
| Sentiment analysis at scale | GPT-5.4 Nano | Cheap and accurate for classification |
| Complex reasoning chains | Claude Opus 4.6 | Most reliable at multi-step logic |
| Financial/legal document review | Claude Sonnet 4.6 | Lowest hallucination rate on factual claims |
| Research synthesis | Gemini 3.1 Pro | Can process the most source material in one pass |

Multimodal Tasks

| Task | Best Model | Why |
|---|---|---|
| Image understanding (charts, screenshots) | Gemini 3.1 Pro | Built multimodal from the ground up |
| Image generation | Gemini 3.1 Flash Image Preview | Best quality-to-speed ratio for generated images |
| PDF processing | Gemini 3.1 Pro | Handles mixed text/image layouts best |
| OCR and document digitization | Gemini 3.1 Flash | Fast and accurate on printed/handwritten text |
| Describing images for accessibility | Claude Sonnet 4.6 | Most detailed, natural descriptions |

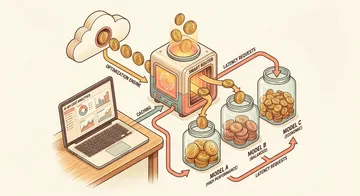

The Real Strategy: Model Routing

The most effective AI architecture in 2026 doesn’t use one model. It routes different requests to different models based on what the task actually needs.

Here’s a practical routing strategy:

Tier 1: Classification Layer ($0.10–0.75/M tokens)

Use GPT-5.4 Nano or Qwen 3.5 Flash to classify incoming requests. Is this a simple question? A complex reasoning task? A code generation request? A multimodal input?

This classification step costs almost nothing and dramatically reduces your overall spend by preventing frontier model calls on tasks that don’t need them.

Tier 2: Workhorse Models (roughly $0.50–3/M input tokens)

Route most production traffic to mid-tier models:

- Claude Sonnet 4.6 for writing, instruction-following, and code review

- GPT-5.4 Mini for structured output, function calling, and rapid responses

- Gemini 3.1 Flash for summarization, multimodal tasks, and high-volume processing

These models handle 80% of real-world tasks at a fraction of frontier pricing.

Tier 3: Frontier Models (roughly $2–30/M input tokens)

Reserve Claude Opus 4.6, GPT-5.4, or Gemini 3.1 Pro for tasks that genuinely need peak intelligence:

- Multi-step reasoning chains

- Novel code architecture decisions

- Complex creative writing

- Processing documents exceeding 200K tokens
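As a rough illustration of the three tiers above, the sketch below classifies a request and then looks up a model for it. The `classify` function is a hypothetical keyword heuristic standing in for the cheap Tier 1 model call, and the category names and model identifier strings are assumptions made for the example, not confirmed API names.

```python
# Category -> model lookup. The split follows the tiers described above;
# the identifier strings themselves are assumed, not confirmed API names.
ROUTES = {
    "simple": "gpt-5.4-nano",          # Tier 1 / budget
    "writing": "claude-sonnet-4-6",    # Tier 2
    "structured": "gpt-5.4-mini",      # Tier 2
    "multimodal": "gemini-3.1-flash",  # Tier 2
    "reasoning": "claude-opus-4-6",    # Tier 3
}


def classify(request: str, has_attachment: bool = False) -> str:
    """Hypothetical stand-in for the Tier 1 classifier.

    A real system would ask a budget model to label the request;
    keyword matching keeps this sketch runnable offline.
    """
    text = request.lower()
    if has_attachment:
        return "multimodal"
    if any(word in text for word in ("json", "extract", "schema")):
        return "structured"
    if any(word in text for word in ("prove", "architecture", "step by step")):
        return "reasoning"
    if any(word in text for word in ("write", "draft")):
        return "writing"
    return "simple"


def route(request: str, has_attachment: bool = False) -> str:
    """Return the model identifier that should handle this request."""
    return ROUTES[classify(request, has_attachment)]


print(route("Extract the invoice total as JSON"))
print(route("Design the architecture for a payments service"))
```

Keeping the classifier and the lookup table separate means a model swap is a one-line change to `ROUTES`, while the classification logic can be upgraded independently.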

The Cost Impact

A typical production app that routes naively, sending everything to a single frontier model, might spend $1,000/month on API calls. The same app with intelligent routing across tiers typically spends $300–400/month: the same quality on the tasks that matter, at roughly 60–70% lower cost overall.
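The arithmetic behind a figure like this can be sketched as a weighted average of list prices. The input prices below are copied from this article's pricing tables; the 20/60/20 traffic mix and the model identifier strings are assumptions chosen for illustration, and the calculation ignores output tokens and the small cost of the classification step.

```python
# Input list prices in USD per 1M tokens, copied from the article's pricing tables.
INPUT_PRICE = {
    "claude-opus-4-6": 15.00,
    "claude-sonnet-4-6": 3.00,
    "gpt-5.4-nano": 0.20,
}


def blended_price(mix: dict) -> float:
    """Average USD per 1M tokens for a traffic mix given as {model: share of tokens}."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1")
    return sum(share * INPUT_PRICE[model] for model, share in mix.items())


def routed_spend(naive_spend: float, naive_model: str, mix: dict) -> float:
    """Scale a single-model monthly bill to what the same tokens would cost under `mix`."""
    return naive_spend * blended_price(mix) / INPUT_PRICE[naive_model]


# Hypothetical mix: 20% of tokens stay on the frontier model,
# 60% move to a mid-tier model, 20% move to a budget model.
MIX = {"claude-opus-4-6": 0.20, "claude-sonnet-4-6": 0.60, "gpt-5.4-nano": 0.20}

spend = routed_spend(1000.0, "claude-opus-4-6", MIX)
print(f"routed: ${spend:.2f}/month, saving {1 - spend / 1000.0:.0%}")
```

Under these assumptions the blended price is $4.84/M against $15.00/M, so a $1,000 bill drops to roughly $323. The result is sensitive to the mix: keeping more traffic on the frontier model shrinks the saving quickly.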

Pricing Comparison (March 2026)

All prices per 1 million tokens.

Frontier Tier

| Model | Input | Output | Context | Best For |
|---|---|---|---|---|
| Claude Opus 4.6 | $15.00 | $75.00 | 1M | Deep reasoning, coding, writing |
| GPT-5.4 | $2.50 | $15.00 | 1M+ | General-purpose, fast |
| GPT-5.4 Pro | $30.00 | $60.00 | 1M+ | Maximum quality |
| Gemini 3.1 Pro | $2.00 | $12.00 | 1M+ | Multimodal, long context |

Mid Tier

| Model | Input | Output | Context | Best For |
|---|---|---|---|---|
| Claude Sonnet 4.6 | $3.00 | $15.00 | 200K | Writing, code review |
| GPT-5.4 Mini | $0.75 | $4.50 | 400K | Structured output, tool use |
| Gemini 3.1 Flash | ~$0.50 | ~$3.00 | 1M | Summarization, multimodal |

Budget Tier

| Model | Input | Output | Context | Best For |
|---|---|---|---|---|
| Claude Haiku 4.5 | $0.80 | $4.00 | 200K | Light tasks, classification |
| GPT-5.4 Nano | $0.20 | $1.25 | 400K | Classification, extraction |
| Gemini 3.1 Flash Lite | $0.25 | $1.50 | 1M | High-volume processing |
| Qwen 3.5 Flash | $0.10 | $0.40 | 1M | Lowest cost option |

Note: Prices reflect API provider list rates. Using an aggregation platform like ofox.ai can offer competitive pricing while letting you access all these models through a single API key.

The Multi-Model Implementation Problem

If model routing is the right strategy, why doesn’t everyone do it?

Because it’s painful to implement from scratch. Each provider has its own SDK, authentication, request format, and error handling. Managing three provider integrations is three times the code, three times the maintenance, and three API keys to manage.

This is where API aggregation platforms solve a real problem. Instead of integrating each provider separately, you call one endpoint with one key.

For example, with ofox.ai, you access Claude, GPT, Gemini, and dozens of other models through a unified OpenAI-compatible API. Your existing OpenAI SDK code works unchanged — you just swap the base URL:

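```python
from openai import OpenAI

# Point the standard OpenAI client at the aggregator's OpenAI-compatible endpoint.
client = OpenAI(
    base_url="https://api.ofox.ai/v1",
    api_key="your-ofox-key",  # placeholder; substitute your own key
)
```

Switch between models by changing a single string (gpt-5.4, claude-opus-4-6, gemini-3.1-pro): no SDK changes, no new authentication, no format translation.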

Ofox also supports native Anthropic and Gemini protocols, so if you prefer those SDKs, you’re covered too.
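To make the single-string switch concrete, here is a minimal sketch that sends the same prompt to several models through one OpenAI-compatible client. The helpers `ask` and `compare` are hypothetical names introduced for this example; `chat.completions.create` is the OpenAI Python SDK's standard chat call, and whether every model behaves identically behind the aggregator is an assumption taken from the description above.

```python
def ask(client, model: str, prompt: str) -> str:
    """Send one user message through an OpenAI-compatible client and return the reply text."""
    response = client.chat.completions.create(
        model=model,  # the only thing that changes between providers
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content


def compare(client, models, prompt: str) -> dict:
    """Run the same prompt against each model and collect replies keyed by model name."""
    return {model: ask(client, model, prompt) for model in models}
```

With a `client` configured as in the snippet above, `compare(client, ["gpt-5.4", "claude-opus-4-6", "gemini-3.1-pro"], "Summarize this changelog")` would return one reply per model, which is a cheap way to evaluate candidates on your own prompts before committing a route to one of them.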

A Decision Framework You Can Use Today

When a new task hits your AI pipeline, run through this checklist:

1. Does it involve images, audio, or video? → Gemini 3.1 (Pro for quality, Flash for speed)

2. Does it need to process more than 200K tokens of context? → Gemini 3.1 Pro or Claude Opus 4.6

3. Does it require precise instruction-following with many constraints? → Claude (Opus for complex, Sonnet for standard)

4. Does it need structured output (JSON, function calls, tool use)? → GPT-5.4 (standard for reliability, Mini for cost)

5. Is it a writing task where quality matters? → Claude Sonnet 4.6 or Opus 4.6

6. Is it high-volume with simple logic (classification, extraction, routing)? → GPT-5.4 Nano, Gemini Flash Lite, or Qwen 3.5 Flash

7. Does speed matter more than peak quality? → GPT-5.4 or Gemini 3.1 Flash

8. None of the above? → Default to GPT-5.4 (best all-around for general tasks)
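The checklist is a first-match rule list, which translates directly into code. The sketch below is one possible encoding: the `Task` fields are hypothetical names invented for this example, the model identifier strings are assumptions, and where a checklist item offers several models the first one listed is used.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical task description; the field names are illustrative only."""
    has_media: bool = False
    context_tokens: int = 0
    many_constraints: bool = False
    structured_output: bool = False
    quality_writing: bool = False
    high_volume_simple: bool = False
    speed_first: bool = False


# Ordered (predicate, model) pairs mirroring checklist items 1-7.
RULES = [
    (lambda t: t.has_media, "gemini-3.1-pro"),
    (lambda t: t.context_tokens > 200_000, "gemini-3.1-pro"),
    (lambda t: t.many_constraints, "claude-opus-4-6"),
    (lambda t: t.structured_output, "gpt-5.4"),
    (lambda t: t.quality_writing, "claude-sonnet-4-6"),
    (lambda t: t.high_volume_simple, "gpt-5.4-nano"),
    (lambda t: t.speed_first, "gpt-5.4"),
]
DEFAULT_MODEL = "gpt-5.4"  # checklist item 8


def pick_model(task: Task) -> str:
    """Return the model for the first checklist item the task matches."""
    for matches, model in RULES:
        if matches(task):
            return model
    return DEFAULT_MODEL


print(pick_model(Task(structured_output=True, speed_first=True)))
print(pick_model(Task(context_tokens=350_000)))
```

Because order encodes priority, a task that needs both images and structured output goes to Gemini, matching the checklist. Reordering `RULES` is how you would express a different priority for your own workload.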

What Actually Matters in Production

Benchmarks tell you which model scores highest on standardized tests. Production tells you something different.

Latency matters more than you think. A model that’s 5% better on benchmarks but 200ms slower per request will feel worse to your users. For interactive applications, GPT-5.4’s speed advantage often outweighs Claude’s quality edge.

Consistency beats peak performance. A model that gives 8/10 responses every time is more valuable than one that alternates between 10/10 and 5/10. Claude’s instruction-following consistency is why production teams gravitate toward it for mission-critical pipelines.

Cost compounds silently. That frontier model you’re using for classification? It’s probably costing 50x what a budget model would charge for the same accuracy. Audit your model usage monthly. Most teams find that 60–70% of their API calls could run on a cheaper model with no quality impact.
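A monthly audit of this kind can start as a few lines over your usage log. The sketch below assumes a hypothetical log format of `(model, task_type, input_tokens)` tuples and a hypothetical set of "simple" task types; prices are the input list prices from this article's tables, and output tokens are ignored for brevity.

```python
# Input list prices in USD per 1M tokens, copied from the article's pricing tables.
INPUT_PRICE = {"claude-opus-4-6": 15.00, "gpt-5.4": 2.50, "gpt-5.4-nano": 0.20}

# Task types assumed to run equally well on a budget model (an assumption to validate).
SIMPLE_TASKS = {"classification", "extraction", "routing"}


def audit(log, budget_model: str = "gpt-5.4-nano"):
    """Return (current_spend, potential_saving) in USD for a usage log.

    `log` is a list of (model, task_type, input_tokens) tuples.
    """
    current = saving = 0.0
    budget_price = INPUT_PRICE[budget_model]
    for model, task_type, tokens in log:
        cost = tokens / 1_000_000 * INPUT_PRICE[model]
        current += cost
        # Count the difference only when a pricier model handled a simple task.
        if task_type in SIMPLE_TASKS and INPUT_PRICE[model] > budget_price:
            saving += cost - tokens / 1_000_000 * budget_price
    return current, saving


log = [
    ("claude-opus-4-6", "classification", 2_000_000),
    ("claude-opus-4-6", "reasoning", 1_000_000),
    ("gpt-5.4-nano", "extraction", 5_000_000),
]
current, saving = audit(log)
print(f"spend ${current:.2f}, could save ${saving:.2f}")
```

The saving figure is only an upper bound until you have checked that the budget model really matches the frontier model's accuracy on those task types.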

Provider reliability is a real risk. Every major provider had outages in 2025. If your product goes down when your AI provider goes down, you need a fallback strategy. This is another reason multi-model architectures — and aggregation platforms that make them easy — aren’t just a cost play. They’re a reliability play.
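A fallback strategy can be as simple as trying an ordered list of models until one answers. The sketch below shows that pattern in isolation: `call_with_fallback` and `flaky` are hypothetical names, `flaky` simulates an outage so the example runs offline, and the model identifier strings are assumptions.

```python
from typing import Callable, Sequence, Tuple


def call_with_fallback(call: Callable[[str], str], models: Sequence[str]) -> Tuple[str, str]:
    """Try each model in order; return (model, reply) from the first that succeeds."""
    errors = []
    for model in models:
        try:
            return model, call(model)
        except Exception as exc:  # broad on purpose for the sketch; narrow this in real code
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))


def flaky(model: str) -> str:
    """Hypothetical provider call that pretends the first provider is down."""
    if model == "gpt-5.4":
        raise ConnectionError("provider unavailable")
    return f"reply from {model}"


used, reply = call_with_fallback(flaky, ["gpt-5.4", "claude-sonnet-4-6", "gemini-3.1-flash"])
print(used, "->", reply)
```

In a real deployment `call` would wrap the actual API request, and you would likely add timeouts and a retry with backoff before moving to the next model. Returning the model that answered lets you log how often the fallback path is taken.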

Looking Ahead

The model landscape moves fast. GPT-5.4 launched in March 2026. Claude’s next major update is likely around the corner. Gemini 3.1 continues to improve.

But the strategic principles in this guide won’t change with the next model release:

- No single model is best at everything. This has been true for two years and will remain true.

- Match the model to the task. Use frontier models where they matter, budget models where they don’t.

- Minimize integration overhead. Use a unified API so switching models is a string change, not a codebase rewrite.

- Monitor and adapt. New models shift the landscape. Build your stack so you can swap in a better option without rearchitecting.

The teams that win with AI in 2026 aren’t the ones using the “best” model. They’re the ones using the right model for each task — and making that easy to change.

Ready to access Claude, GPT, Gemini, and 100+ more models through a single API? Try ofox.ai — one key, all models, OpenAI-compatible.