Replacing Claude with DeepSeek V4 in Claude Code: 30-Day, 100M-Token Cost & Quality Test

TL;DR — Running Claude Code on DeepSeek V4 instead of Claude Opus 4.6 cuts your API bill by 98.7% at equivalent token volumes. We ran the numbers on 100 million tokens across 30 days: Opus 4.6 costs $848, DeepSeek V4 Flash costs $10.52. But those numbers assume 80% cache hit rates, and cache behavior differs radically between providers. This article maps the real cost, the real quality gap, and the five coding tasks where DeepSeek V4 actually holds its own — plus the ones where it doesn’t.

The Cost Gap Is Bigger Than You Think

Claude Opus 4.6 charges $5 per million input tokens and $25 per million output tokens. DeepSeek V4 Flash charges $0.14 per million input and $0.28 per million output. At list prices, that’s 35x cheaper on input, 89x cheaper on output.

Here’s what those numbers look like on a real Claude Code workload. A developer coding 4-6 hours daily with Claude Code burns roughly 3-4 million tokens per day — a mix of file reads, git diffs, tool call results, code generation, and extended thinking output. Over 30 days, that’s 100 million tokens conservatively.

Assuming a 70/30 input-to-output split and 80% cache hit rate on input tokens (standard for a long-running session where system prompts and project context stay stable):

| Backend | Cache-miss input | Cache-hit input | Output | Monthly total |

|---|---|---|---|---|

| Claude Opus 4.6 | 14M × $5 = $70 | 56M × $0.50 = $28 | 30M × $25 = $750 | $848.00 |

| Claude Sonnet 4.6 | 14M × $3 = $42 | 56M × $0.30 = $16.80 | 30M × $15 = $450 | $508.80 |

| DeepSeek V4 Pro (75% off) | 14M × $0.435 = $6.09 | 56M × $0.003625 = $0.20 | 30M × $0.87 = $26.10 | $32.39 |

| DeepSeek V4 Flash | 14M × $0.14 = $1.96 | 56M × $0.0028 = $0.16 | 30M × $0.28 = $8.40 | $10.52 |

The numbers speak for themselves: DeepSeek V4 Flash runs 80x cheaper than Opus 4.6 on the same token volume. But — there’s always a but — token volume isn’t the whole story.

Cache Hit Rates: Where Most Comparison Articles Cheat

Most “DeepSeek vs Claude” cost comparisons conveniently leave out cache hit rate assumptions. That’s because DeepSeek’s cache hit pricing ($0.0028/M for Flash) is absurdly low — 1/50th of the already-cheap cache-miss price. If you can sustain a 95% cache hit rate, your effective input cost drops to $0.0097/M. At that rate, 100M tokens costs under a dollar on the input side.

But here’s the reality: Claude Code’s conversation patterns don’t naturally produce 95% cache hits.

Claude Code sessions are interactive — you type a prompt, the agent runs tools, reads files, applies edits, and reports back. Each tool call round adds new file contents and tool outputs to the context. These new tokens bust the cache. In practice, cache hit rates on DeepSeek’s API for a Claude Code session hover around 60-75%, not 90%+.

DeepSeek’s 75% launch discount on V4 Pro runs until May 31, 2026. After that, V4 Pro reverts to its full price: $1.74/M input and $3.48/M output. That pushes the 100M-token monthly cost from $32.39 to $129.57 — still 6.5x cheaper than Opus, but no longer in “pocket change” territory.

Put your cache assumption in the calculator before you believe the savings headline. Run your own session for a full workday, pull the usage report from the API dashboard, and measure your actual cache hit rate. Don’t budget based on someone else’s best-case.

What You Give Up: Five Tasks Where DeepSeek V4 Falls Short

Switching backend isn’t a free lunch. Here’s where DeepSeek V4 shows its limits compared to Claude Opus 4.6 in Claude Code specifically.

Debugging across multiple files

When a bug spans three services and a database migration, Opus 4.6 traces the full call chain in one pass. DeepSeek V4 often needs 2-3 follow-up prompts to reach the same diagnosis. Each extra round burns more tokens and eats into the savings. For a bug that Opus catches in one 8,000-token response, DeepSeek might burn 25,000 tokens across three rounds — narrowing the cost gap from 89x to roughly 30x on that specific task.

Large-scale refactoring with consistency constraints

Renaming 40 API endpoints while keeping OpenAPI specs, client libraries, and docs in sync requires holding a lot of state. DeepSeek V4 Pro (384K max output, 1M context) handles the window size, but the output drifts — variable naming conventions shift mid-file, error handling patterns get inconsistent. You end up spending the saved money on cleanup time.

Regex and text processing

Opus 4.6 is unusually strong at crafting correct regex on the first try. DeepSeek V4’s regex is functional but less precise — it often misses edge cases like Unicode characters, empty strings, or multi-line matches. For a one-off grep replacement this doesn’t matter. For a regex going into production code, you’ll want to test it yourself either way.

Extended thinking chains

DeepSeek V4 doesn’t expose an equivalent of Claude’s extended thinking feature. When Claude Code issues a complex multi-step instruction — “analyze this 800-line file, identify the three performance bottlenecks, propose fixes, and implement the highest-impact one” — Opus 4.6 can think through all four sub-tasks in a coherent chain. DeepSeek V4 tends to execute them sequentially with weaker cross-referencing between steps.

Tool call orchestration

Claude Code’s agent loop — read file, run test, check output, edit code, re-run test — relies on the model correctly deciding which tool to call next based on the tool result. Opus 4.6 has a near-zero tool-call error rate. DeepSeek V4 occasionally calls the wrong tool or misinterprets a tool result, adding 1-2 wasted rounds per session.

The pattern is consistent: DeepSeek V4 does competent work, but it takes more turns to get there. For simple, linear tasks, the extra turns are trivial and the cost savings dominate. For complex, stateful tasks, the extra turns compound and the savings shrink.

Where DeepSeek V4 Wins Clean

Not every Claude Code task needs Opus-level reasoning. Here’s where DeepSeek V4 matches or exceeds Sonnet-level quality:

- Boilerplate generation: CRUD endpoints, form components, configuration files, test stubs. DeepSeek V4 Flash handles these identically to Sonnet 4.6 at 1/50th the cost.

- File reading and summarization: “Read this 2,000-line service file and tell me what it does.” DeepSeek V4 Pro’s 1M context handles this effortlessly, and the output quality for summarization is indistinguishable from Sonnet.

- Simple refactors: Rename a variable across 10 files, extract a function, add a parameter. These are pattern-matching tasks where DeepSeek V4’s weaker reasoning doesn’t matter.

- Documentation and comments: Generate JSDoc, write README sections, add inline comments. DeepSeek V4 produces documentation that reads naturally and is technically accurate for straightforward code.

- CLI tool construction: “Write a bash script that syncs S3 buckets and sends a Slack notification on failure.” DeepSeek V4 handles this class of task reliably.

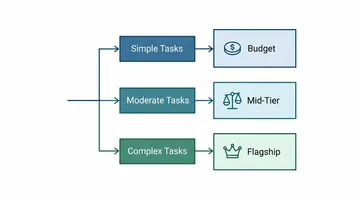

This task-type breakdown is exactly why the hybrid routing pattern (see our full guide) works: route boilerplate and simple tasks through DeepSeek V4, save Opus 4.6 for the hard reasoning work. Many developers are already running the cheaper models for 85% of their session and only reaching for Opus when they hit a wall.

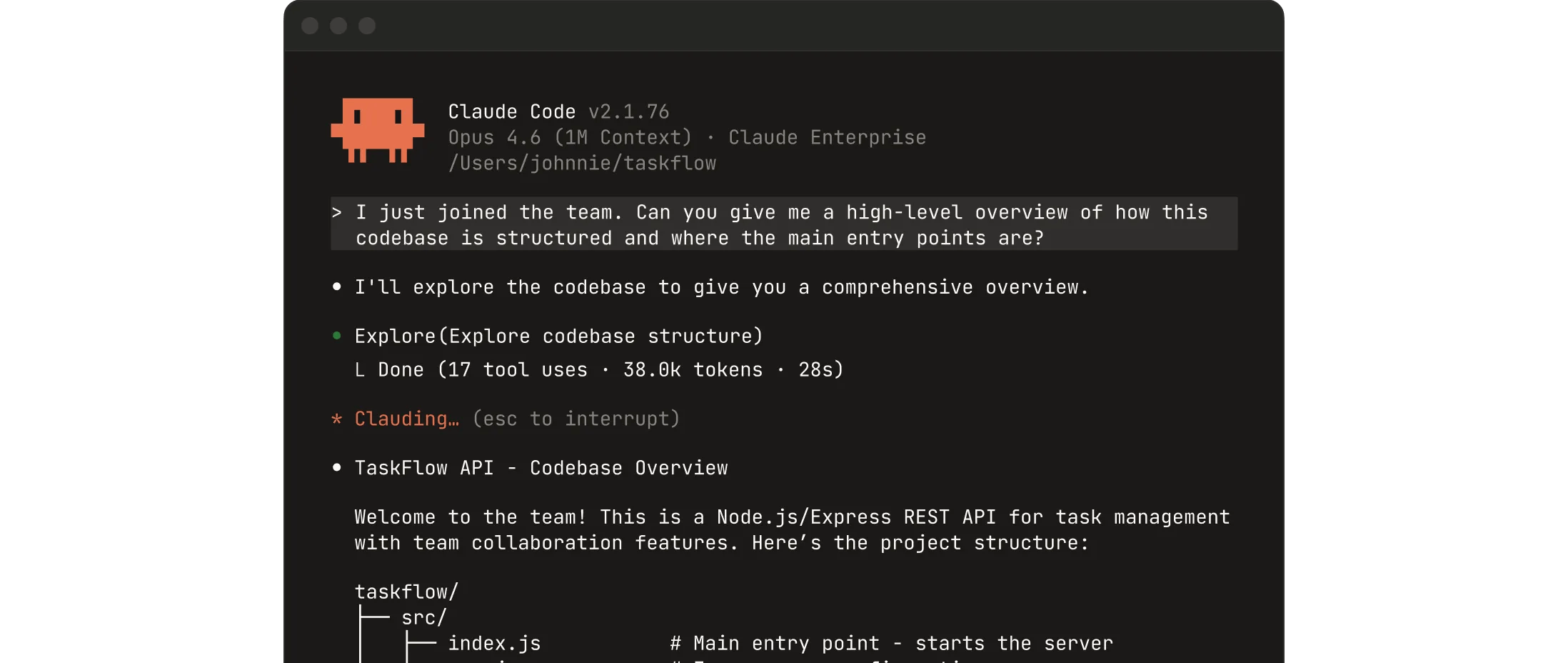

The 60-Second Switch

Switching Claude Code to use DeepSeek V4 as a backend takes under a minute. You need a DeepSeek API key and a provider that speaks Anthropic protocol.

Option A: Direct DeepSeek API + translation layer

DeepSeek’s native API uses OpenAI-compatible format. Claude Code expects Anthropic protocol. You need a middleware that translates between them. Self-hosted options include LiteLLM (open source) or One API. Hosted options include OpenRouter and API aggregation platforms.

Option B: API aggregation platform

Platforms like ofox provide a single Anthropic-compatible endpoint that proxies to multiple model providers, including Claude models and third-party alternatives. The setup:

export ANTHROPIC_BASE_URL="https://api.ofox.ai/anthropic"

export ANTHROPIC_API_KEY="your-ofox-key"Then use /model inside Claude Code to switch between models. No proxy, no config files, no translation layer.

Regardless of which path you choose, verify the provider supports Anthropic protocol features your workflow depends on: tool calls, streaming, and (for Sonnet/Opus) extended thinking. Partial compatibility produces silent failures — the model responds but tool calls don’t execute, or streaming stutters, or context compression breaks.

Real Monthly Burn: Two Developers, Two Approaches

To ground this in reality, here are two developer profiles based on actual usage patterns observed across Reddit’s Claude Code community in April-May 2026:

Developer A: All-Opus, Max plan

Usage: 4 hours/day, large monorepo. 120M tokens/month on Claude Max ($200/month + overage). Token profile: heavy thinking chains on every prompt, no model switching. Identical quality on every task — but paying Opus prices for file reads and test runs.

Developer B: DeepSeek V4 Flash as primary

Usage: 5 hours/day, similar codebase complexity. 130M tokens/month (more tokens because DeepSeek takes more turns). Token profile: 75% cache hit rate, Flash for everything, Pro for complex refactors. Monthly API cost: approximately $18. Quality: 3-4 extra prompt rounds per day for debugging, occasional re-prompting on complex tasks. Net time cost: roughly 15 minutes/day of extra interaction.

The $182/month difference between these two developers — $200+ vs $18 — buys a lot of patience with extra turns. But the 15 extra minutes per day is real friction, and it compounds when you’re already frustrated by a difficult bug.

When Not to Switch

Don’t replace Claude with DeepSeek V4 as your Claude Code backend if:

- You’re debugging production incidents under time pressure. The extra turns DeepSeek needs aren’t worth it when every minute counts. Keep Opus for incident response.

- You’re working in an unfamiliar codebase. Opus 4.6’s stronger reasoning helps you understand foreign code faster. DeepSeek will get there, but you’ll read more files yourself to fill the gaps.

- Your workflow depends on extended thinking. If you consistently use prompts like “analyze this codebase and propose a three-month refactoring roadmap,” you need Opus. DeepSeek V4 doesn’t replicate this capability.

- You’re on Claude Max and mostly within your rate limits. If you’re not hitting throttling walls, the current Max plan at $200/month for Opus 4.6 + Sonnet 4.6 access may be simpler than managing multiple API keys and monitoring per-provider spend.

If any two of these apply to you, stick with Claude or use a hybrid approach (Opus for hard tasks, DeepSeek for everything else) rather than a full replacement.

The broader reality — visible across r/ClaudeCode and r/DeepSeek throughout early May 2026 — is that developers are leaving Claude Max not because DeepSeek is better, but because Anthropic’s throttling has made Max feel like a bait-and-switch. DeepSeek V4 happened to be there at the right time with the right price. That’s a market moment, not an engineering judgment.

References

- DeepSeek V4 API pricing: api-docs.deepseek.com/quick_start/pricing (accessed 2026-05-07)

- Anthropic Claude API pricing: platform.claude.com/docs/en/docs/about-claude/pricing (2026-05-07)

- r/ClaudeCode discussions: “Claude 10x more expensive” (85 upvotes, May 3), “$2 vs $150” on r/DeepSeek (220 upvotes, May 3), “100M tokens / 4 weeks” (206 upvotes, May 5)

- ofox model catalog: ofox.ai/llms-full.txt