Sora 2 Video API: Developer Guide — Access, Pricing & Code Examples

Important: OpenAI has announced that the Sora 2 API and consumer applications will be discontinued on September 24, 2026. The iOS and Android apps were already shut down on April 26, 2026. If you’re building production systems that depend on video generation, factor this timeline into your architecture decisions — you’ll need to migrate to an alternative or wait for OpenAI’s next video generation offering well before Q4 2026.

TL;DR: Sora 2 is OpenAI’s production video generation API, accessible through POST /v1/videos at api.openai.com. Video generation is asynchronous — you submit a job, poll for status, and download the result once complete. This guide covers authentication, working code examples in curl and Python, and what to actually expect from the model in production. If you’ve been putting off video generation because the API landscape looked fragmented, Sora 2’s straightforward REST interface makes it one of the easier video models to integrate — just be aware of the September 2026 sunset date.

What Sora 2 Actually Is

Sora 2 is OpenAI’s second-generation diffusion transformer model, built for text-to-video generation. Announced in September 2025, it’s the version developers access through the API — higher fidelity, better prompt adherence, and designed for programmatic use rather than the consumer app experience.

Under the hood, it’s a denoising latent diffusion model with a transformer denoiser. In practical terms: you send a text prompt and get a rendered video back. The model handles longer prompts with granular creative control over scene composition, camera movement direction, and lighting mood, details that earlier video models either ignored or interpreted loosely.

The model stamps every output with C2PA metadata and a visible moving watermark, which matters if you’re building anything user-facing where provenance needs to be traceable.

Getting Started

You need an OpenAI API key with access to video generation. Grab one at platform.openai.com/api-keys.

    # Your environment
    OPENAI_API_KEY="sk-your-key-here"
    OPENAI_BASE_URL="https://api.openai.com/v1"

Check available models to confirm Sora 2 is active on your account:

    curl -s https://api.openai.com/v1/models \
      -H "Authorization: Bearer $OPENAI_API_KEY" | grep -i sora

The model ID is sora-2. Always resolve dynamically via /v1/models rather than hardcoding, as model identifiers can shift between platform updates.
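The same check can be done from Python. The sketch below is a minimal illustration, not an official recipe: `find_video_models` and `list_account_models` are hypothetical helper names, and the second one assumes the `openai` package is installed and `OPENAI_API_KEY` is set in the environment.

```python
from typing import Iterable, List


def find_video_models(model_ids: Iterable[str], needle: str = "sora") -> List[str]:
    """Return the model IDs that contain `needle` (case-insensitive), sorted."""
    needle = needle.lower()
    return sorted(m for m in model_ids if needle in m.lower())


def list_account_models() -> List[str]:
    """Fetch every model ID visible to this API key (makes a network call)."""
    # Imported lazily so the pure filter above works without the SDK installed.
    from openai import OpenAI

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    return [model.id for model in client.models.list()]
```

Calling `find_video_models(list_account_models())` at startup and failing fast on an empty result is one way to avoid discovering a missing model at request time.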

Generating Video: How the API Works

Sora 2 video generation uses a dedicated endpoint — POST /v1/videos — separate from chat completions and image generation. It’s asynchronous: the initial response returns a job ID and status, not a finished video file.

Step 1: Confirm the model is active on your account:

    curl -s https://api.openai.com/v1/models \
      -H "Authorization: Bearer $OPENAI_API_KEY" | grep -i sora

Step 2: Submit a video generation job. The endpoint is POST /v1/videos with a multipart form body:

    curl https://api.openai.com/v1/videos \
      -H "Authorization: Bearer $OPENAI_API_KEY" \
      -F "model=sora-2" \
      -F "prompt=Aerial drone shot of a coastal village at golden hour, slow pan right" \
      -F "size=1280x720" \
      -F "seconds=6"

The response is a job object, not a video file:

    {
      "id": "video_abc123",
      "object": "video",
      "model": "sora-2",
      "status": "queued",
      "progress": 0,
      "created_at": 1712698600,
      "size": "1280x720",
      "seconds": "6"
    }

Step 3: Poll for completion. Video generation takes 30 seconds to several minutes. Retrieve the job status until it reaches completed:

    curl https://api.openai.com/v1/videos/video_abc123 \
      -H "Authorization: Bearer $OPENAI_API_KEY"

In Python, the full submit-and-poll flow looks like this:

    import time

    from openai import OpenAI

    # Reads OPENAI_API_KEY from the environment; avoid hardcoding keys.
    # Requires a recent openai SDK release that exposes client.videos.
    client = OpenAI()

    # Submit the generation job
    job = client.videos.create(
        model="sora-2",
        prompt="Aerial drone shot of a coastal village at golden hour, slow pan right",
        size="1280x720",
        seconds="6",
    )

    # Poll until the job reaches a terminal state
    while job.status not in ("completed", "failed"):
        time.sleep(5)
        job = client.videos.retrieve(job.id)

    if job.status == "completed":
        print(f"Video ready, expires at {job.expires_at}")
    else:
        message = job.error.message if job.error else "unknown error"
        print(f"Generation failed: {message}")

The same polling pattern works in Node.js with fetch or the openai SDK: submit the job, then loop on status checks with a reasonable interval.
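For longer jobs, a fixed interval can be replaced with exponential backoff and an overall deadline. The following is a minimal sketch under stated assumptions: `poll_until_done` is a hypothetical helper, `fetch` stands for whatever call retrieves GET /v1/videos/{id} and returns the parsed JSON, and the delay and timeout values are illustrative defaults rather than recommendations from OpenAI.

```python
import time
from typing import Any, Callable, Mapping

TERMINAL_STATUSES = ("completed", "failed")


def poll_until_done(
    fetch: Callable[[], Mapping[str, Any]],
    *,
    initial_delay: float = 2.0,
    max_delay: float = 30.0,
    timeout: float = 600.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Mapping[str, Any]:
    """Call `fetch` until the job is terminal, doubling the wait each time."""
    deadline = clock() + timeout
    delay = initial_delay
    while True:
        job = fetch()
        if job["status"] in TERMINAL_STATUSES:
            return job
        # Give up before sleeping past the overall deadline.
        if clock() + delay > deadline:
            raise TimeoutError(f"job still {job['status']!r} after {timeout}s")
        sleep(delay)
        delay = min(delay * 2, max_delay)  # exponential backoff, capped
```

Injecting `sleep` and `clock` keeps the loop testable without real waiting, and the cap on `delay` bounds how stale a status check can get.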

Two things to know before going to production. First, generated video URLs expire — download and store outputs in your own bucket (S3, R2, etc.) rather than relying on the temporary URL. Second, generation cost scales with duration and resolution. A 6-second clip at 1080p costs substantially more than a 4-second clip at 720p. Start with the shortest viable output and iterate up once the pipeline works.
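To make the first point concrete, here is a hedged sketch of persisting a finished clip to local disk before pushing it to a bucket. `save_video` is a hypothetical helper; it assumes you already have the video bytes as an iterable of chunks from whatever download call your client uses, and it leaves the upload to S3 or R2 out of scope.

```python
import os
import tempfile
from pathlib import Path
from typing import Iterable


def save_video(chunks: Iterable[bytes], dest: Path) -> int:
    """Write `chunks` to `dest` atomically and return the byte count."""
    dest.parent.mkdir(parents=True, exist_ok=True)
    # Write to a temp file in the same directory so the final rename is atomic.
    fd, tmp_name = tempfile.mkstemp(dir=dest.parent, suffix=".part")
    written = 0
    try:
        with os.fdopen(fd, "wb") as tmp:
            for chunk in chunks:
                tmp.write(chunk)
                written += len(chunk)
        os.replace(tmp_name, dest)
    except BaseException:
        # Do not leave a partial file behind if the download is interrupted.
        if os.path.exists(tmp_name):
            os.unlink(tmp_name)
        raise
    return written
```

Writing to a `.part` file first means a reader never sees a half-downloaded clip at the final path, which matters if another process uploads files as they appear.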

What Sora 2 Excels At

Cinematic establishing shots. Drone flyovers, city time-lapses, nature scenes — the diffusion transformer handles smooth camera motion better than most competitors. If your project needs B-roll that doesn’t look like a slideshow, this is the sweet spot.

Prompt-faithful scene composition. Describe “a red bicycle leaning against a stone wall, ivy covering the left third of frame, overcast lighting” and the output generally follows that layout. Sora 2’s prompt adherence is noticeably tighter than first-generation video models.

Multi-shot sequences with consistent style. You can chain generations while maintaining visual continuity — same lighting temperature, same depth of field, same color grade. This matters for anything beyond one-off clips.

Additional operations beyond generation. The API supports remixing existing videos (POST /v1/videos/{id}/remix), extending completed clips (POST /v1/videos/extensions), and editing source footage (POST /v1/videos/edits). These go beyond simple text-to-video and unlock iterative workflows.

Where It Falls Short

Independent benchmarks from Artificial Analysis place Sora 2 below several competitors in overall output quality, with models like Seedance, Runway, and Kling scoring higher on aggregate metrics. The video generation space is intensely competitive and no single model leads across every dimension.

Fast motion and complex action sequences can still produce flickering artifacts. If your use case is sports highlights or fast-paced action, test thoroughly before committing. The watermark is also non-negotiable — there’s no way to generate clean output without it, which may disqualify certain creative applications.

Practical Use Cases

Social media content pipelines. Generate short-form video clips from product descriptions or blog posts programmatically. Hook up a CMS webhook to the Sora 2 API and auto-generate 5-second product showcase clips.

Pre-visualization for video production. Storyboard a scene in text, generate reference footage, and hand it to a video editor as a starting point. Faster than sketches and more concrete than mood boards.

E-learning and documentation. Turn step-by-step instructions into short visual demonstrations. A 10-second clip of a UI interaction beats three paragraphs of description — and costs roughly the same to generate.

A/B testing creative variants. Generate multiple versions of the same product video with different lighting, angles, or pacing, then test which drives higher engagement. The API makes iteration cheap enough to actually do this.
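One way to produce the variants for such a test is to expand a base prompt across a few creative axes. This is a small sketch, not a prescribed workflow: `prompt_variants` and the axis names are hypothetical, and each resulting prompt would still need to be submitted as its own generation job.

```python
from itertools import product
from typing import Any, Dict, List, Sequence


def prompt_variants(base: str, axes: Dict[str, Sequence[str]]) -> List[Dict[str, Any]]:
    """Build one prompt per combination of axis values, with labels for tracking."""
    names = list(axes)
    variants = []
    for combo in product(*(axes[name] for name in names)):
        variants.append(
            {
                # Append each chosen value to the base description.
                "prompt": ", ".join([base, *combo]),
                # Keep the choices so engagement results can be attributed later.
                "labels": dict(zip(names, combo)),
            }
        )
    return variants
```

For example, two lighting options and two camera options yield four prompts. Since every job is billed, it helps to keep the number of axes small.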

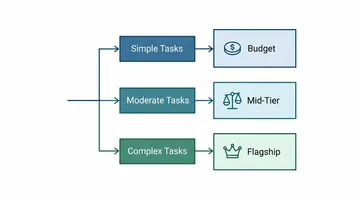

Pricing

OpenAI bills Sora 2 video generation per request based on duration and resolution. Exact rates are on openai.com/pricing. As a rough benchmark (verify current rates before budgeting): video generation typically runs 10-50x the cost of an equivalent-length text completion, with higher resolutions and longer durations at the upper end of that range.

There is no free tier for video generation — each job incurs a cost. Start with short, low-resolution test clips before scaling up.
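For budgeting, a rough estimator can help before any jobs are submitted. The sketch below is illustrative only: the rates are placeholders and not OpenAI's prices, the 1920x1080 entry is a hypothetical size key, and linear per-second billing is an assumption to verify against the pricing page.

```python
from decimal import Decimal
from typing import Mapping

# PLACEHOLDER values in USD per second of output. Replace with current rates.
PLACEHOLDER_RATES_USD_PER_SECOND: Mapping[str, Decimal] = {
    "1280x720": Decimal("1.00"),
    "1920x1080": Decimal("2.50"),
}


def estimate_cost(
    size: str,
    seconds: int,
    clips: int = 1,
    rates: Mapping[str, Decimal] = PLACEHOLDER_RATES_USD_PER_SECOND,
) -> Decimal:
    """Estimate total spend, assuming cost scales linearly with duration."""
    if size not in rates:
        raise ValueError(f"no rate configured for size {size!r}")
    if seconds <= 0 or clips <= 0:
        raise ValueError("seconds and clips must be positive")
    return rates[size] * seconds * clips
```

`Decimal` is used instead of floats so totals do not drift when many clips are summed.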

Production Checklist

Before shipping Sora 2 into production, handle these:

- Async polling or webhooks. Video generation takes 30 seconds to several minutes. Don’t block your request loop. Poll with exponential backoff or set up a webhook if OpenAI supports one for your integration tier.

- Timeout and retry. Set generous timeouts (5+ minutes) and exponential backoff for generation status checks.

- Resolution gating. Allow users to request higher quality, but default to 720p to keep costs predictable.

- Content moderation layer. Text-to-video prompts are user-generated content. Filter inputs before they hit the API.

- Output storage. Generated video URLs are temporary and expire. Download and store outputs in your own bucket (S3, R2, etc.) immediately on completion.

- Migration plan. The Sora 2 API sunsets September 24, 2026. Architect your system so the video generation layer can be swapped to an alternative provider without rewriting the rest of your pipeline.
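The migration item is easier to act on if the rest of the pipeline only talks to a narrow interface. The following sketch shows one possible shape, with everything in it hypothetical: `VideoRequest`, `VideoProvider`, `FakeProvider`, and `generate` are illustrative names, and a real adapter for Sora 2 or another vendor would implement the same three methods and poll instead of checking status once.

```python
from dataclasses import dataclass
from typing import Dict, Protocol


@dataclass(frozen=True)
class VideoRequest:
    prompt: str
    size: str = "1280x720"  # default to 720p to keep costs predictable
    seconds: int = 4


class VideoProvider(Protocol):
    """The only surface the rest of the pipeline depends on."""

    def submit(self, request: VideoRequest) -> str: ...
    def status(self, job_id: str) -> str: ...
    def download(self, job_id: str) -> bytes: ...


class FakeProvider:
    """In-memory stand-in for tests and local development."""

    def __init__(self) -> None:
        self._jobs: Dict[str, VideoRequest] = {}

    def submit(self, request: VideoRequest) -> str:
        job_id = f"fake_{len(self._jobs) + 1}"
        self._jobs[job_id] = request
        return job_id

    def status(self, job_id: str) -> str:
        return "completed" if job_id in self._jobs else "failed"

    def download(self, job_id: str) -> bytes:
        # Returns the prompt as bytes so tests can check the round trip.
        return self._jobs[job_id].prompt.encode("utf-8")


def generate(provider: VideoProvider, request: VideoRequest) -> bytes:
    """Run one job end to end against any provider implementation."""
    job_id = provider.submit(request)
    if provider.status(job_id) != "completed":
        raise RuntimeError(f"job {job_id} did not complete")
    return provider.download(job_id)
```

Swapping vendors then means writing one new adapter class, while callers of `generate` stay unchanged.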

This is still early days for programmatic video generation — the models are improving every quarter and the APIs are stabilizing. Just be clear-eyed about the timeline: Sora 2 has an expiration date, and any production system built on it needs a migration path.