How to Switch Claude Code Backend in 2026: DeepSeek, OpenAI, OpenRouter, or Custom API in 60 Seconds

TL;DR: Claude Code is not locked to Anthropic’s billing. By changing two environment variables (ANTHROPIC_BASE_URL and ANTHROPIC_API_KEY), you can route it through any Anthropic-compatible API provider (ofox, OpenRouter, or your own proxy) and access models from DeepSeek, OpenAI, Google, and Kimi at a fraction of Anthropic’s direct pricing. This guide covers the exact config for four backends, the one-liner shell aliases that make switching instant, and the four pitfalls that silently break requests, tool calls, and extended thinking.

Why Switch Claude Code’s Backend

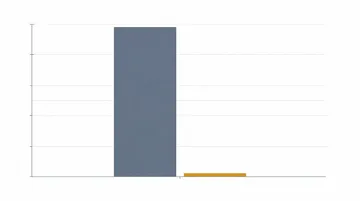

Claude Code bills through Anthropic’s API by default. At $15 per million input tokens and $75 per million output tokens for Claude Opus 4.6, a heavy coding day can burn through $20-40. API billing is per token with no monthly cap, while the flat-rate $200 Max plan comes with aggressive throttling. On May 5, 2026, a post on r/ClaudeCode titled “Anthropic is scamming Max users 20x” hit 343 upvotes; users reported being throttled to near-uselessness within hours of their billing cycle resetting. Meanwhile, a developer on r/DeepSeek (206 upvotes, May 5) documented burning through 100 million tokens in 4 weeks for under $30 by routing through DeepSeek, a volume that would have cost $1,500+ on Anthropic direct.

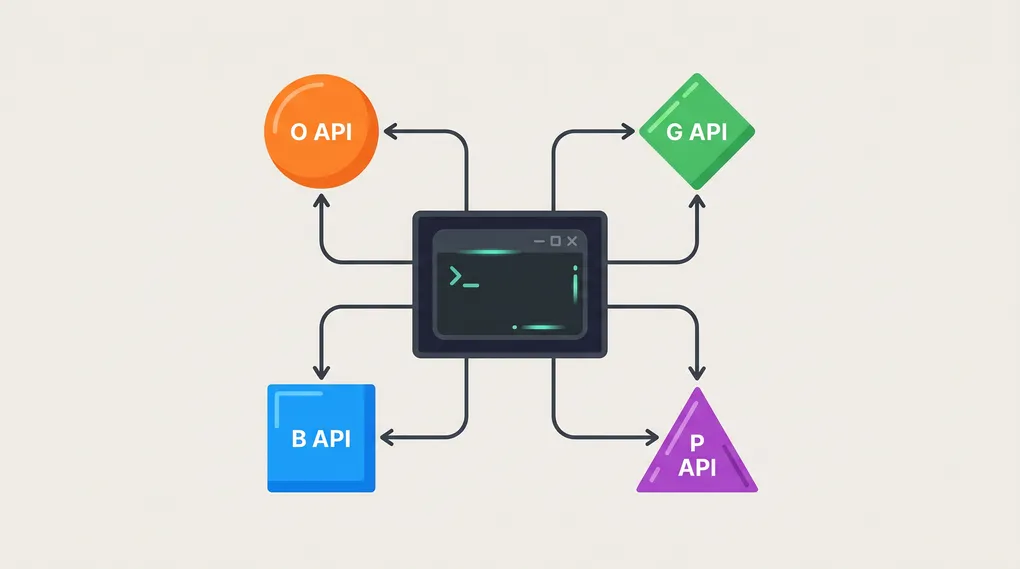

How Backend Switching Works

Claude Code reads two environment variables to determine where its API requests go: ANTHROPIC_BASE_URL sets the endpoint, and ANTHROPIC_API_KEY authenticates you. That’s it: no config file is required, and no SDK needs to be swapped out.

```bash
export ANTHROPIC_BASE_URL="https://api.ofox.ai/anthropic"
export ANTHROPIC_API_KEY="sk-your-key-here"
claude
```

Run /status inside Claude Code to confirm the switch took effect. The output shows your current base URL, active model, and whether features like extended thinking are available.
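For a quick check from the shell before launching, the sketch below prints the values Claude Code will inherit from the current session. It is a hypothetical helper (cc_show_config), and it assumes Claude Code falls back to https://api.anthropic.com when ANTHROPIC_BASE_URL is unset and appends /v1/messages to whatever base URL it is given.

```bash
#!/usr/bin/env bash
# Hypothetical helper: show which endpoint and key Claude Code will pick up
# from this shell, masking all but the last 4 characters of the key.
# Assumes the default base URL is https://api.anthropic.com and that
# /v1/messages is appended to the base URL.
cc_show_config() {
  local base="${ANTHROPIC_BASE_URL:-https://api.anthropic.com}"
  local key="${ANTHROPIC_API_KEY:-}"
  local masked="(unset)"
  if [ -n "$key" ]; then
    masked="****${key: -4}"
  fi
  printf 'base URL : %s\n' "$base"
  # Strip one trailing slash so the joined path has no double slash.
  printf 'endpoint : %s/v1/messages\n' "${base%/}"
  printf 'API key  : %s\n' "$masked"
}
```

Run cc_show_config right before launching claude; a wrong endpoint line is easier to spot here than after a failed request.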

For persistent configuration across sessions, add these exports to your shell profile (~/.zshrc or ~/.bashrc), or use CC Switch — a free open-source GUI that manages multiple provider profiles and writes to ~/.claude/settings.json automatically. A May 4 post on r/ClaudeCode (83 upvotes) titled “Use Kimi and OpenAI subscriptions with cc switch” captured the community’s shift toward multi-provider setups, and another on May 5 (64 upvotes, “DeepClaude — transparent proxy for Claude Code”) showed the demand for simpler switching tools.
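If you go the shell-profile route, appending the same export twice leaves you guessing which value wins. A minimal sketch, using a hypothetical persist_export helper that appends a line only when the variable is not already exported in that file:

```bash
#!/usr/bin/env bash
# Hypothetical helper: append `export NAME="VALUE"` to a profile file,
# but only if NAME is not already exported there.
persist_export() {
  local name="$1" value="$2" profile="$3"
  touch "$profile" || return 1
  if grep -q "^export ${name}=" "$profile"; then
    echo "$name is already exported in $profile; edit that line instead" >&2
    return 1
  fi
  printf 'export %s="%s"\n' "$name" "$value" >> "$profile"
}

# Usage (writes to your real profile):
#   persist_export ANTHROPIC_BASE_URL "https://api.ofox.ai/anthropic" ~/.zshrc
#   persist_export ANTHROPIC_API_KEY '$OFOX_API_KEY' ~/.zshrc
```

Passing the key as the single-quoted string '$OFOX_API_KEY' writes a reference to a secret variable (OFOX_API_KEY is the name this guide’s aliases use) rather than the key itself.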

Backend-by-Backend Setup

DeepSeek

DeepSeek’s native API (api.deepseek.com) speaks OpenAI format, not Anthropic. Claude Code cannot call it directly. The practical path is through a gateway:

Via ofox: Set ANTHROPIC_BASE_URL to https://api.ofox.ai/anthropic, then use /model inside Claude Code and select deepseek/deepseek-v3.2 (the latest chat model) or deepseek/deepseek-r1 (the reasoning specialist). Both models cost a fraction of Claude Opus: DeepSeek V3.2 runs at approximately $0.27/M input and $1.10/M output on ofox, more than 50x cheaper per token than Opus 4.6 at retail Anthropic pricing.
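The price gap quoted above is easy to check. This sketch divides the Opus 4.6 prices cited earlier by the DeepSeek V3.2 prices on ofox; price_ratio is a hypothetical helper, and the inputs are this article’s figures, not live rates.

```bash
#!/usr/bin/env bash
# Hypothetical helper: how many times larger price A is than price B.
# Inputs are $/M-token prices quoted in this article, not live rates.
price_ratio() {
  awk -v a="$1" -v b="$2" 'BEGIN { printf "%.1f\n", a / b }'
}

price_ratio 15 0.27   # Opus 4.6 input vs DeepSeek V3.2 input: prints 55.6
price_ratio 75 1.10   # Opus 4.6 output vs DeepSeek V3.2 output: prints 68.2
```

Both ratios land above 50x, so the headline figure is, if anything, conservative.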

Via OpenRouter: Same pattern. Set ANTHROPIC_BASE_URL to https://openrouter.ai/api (without a trailing /v1, because Claude Code appends /v1/messages itself) and select deepseek/deepseek-chat from the model picker. OpenRouter adds a 5.5% fee on credit purchases.

What works: Code generation, boilerplate, syntax fixes, docstring generation, simple refactors. DeepSeek handles these at near-Claude quality for a tiny fraction of the cost.

What doesn’t: Extended thinking and cache_control are not available on DeepSeek models regardless of gateway. Multi-file architectural reasoning can drift — for these tasks, switch back to a Claude model.

ofox

ofox provides a native Anthropic-compatible endpoint at https://api.ofox.ai/anthropic with full protocol support — cache_control, extended thinking, tool streaming, and fine-grained streaming all work identically to Anthropic direct.

```bash
export ANTHROPIC_BASE_URL="https://api.ofox.ai/anthropic"
export ANTHROPIC_API_KEY="sk-your-ofox-key"
```

Once connected, /model shows the full catalog: claude-opus-4.6, claude-sonnet-4.6, claude-haiku-4 from Anthropic; gpt-5.4, gpt-5.4-pro from OpenAI; gemini-3.1-pro, gemini-3.1-flash from Google; deepseek-v3.2, deepseek-r1 from DeepSeek; kimi-k2 from Moonshot; and qwen3-max from Alibaba. One API key, one endpoint, every model.

The practical workflow: keep claude-sonnet-4.6 as your default for daily coding (fast, reliable, handles 80% of tasks), switch to deepseek-v3.2 for bulk operations like file reads and boilerplate, and reserve claude-opus-4.6 for complex architecture decisions.
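One way to encode this workflow, so you don’t have to reach for /model every session, is to map Claude Code’s model tiers to gateway IDs. This is a hedged sketch: it assumes your Claude Code version honors the ANTHROPIC_DEFAULT_SONNET_MODEL, ANTHROPIC_DEFAULT_HAIKU_MODEL, and ANTHROPIC_DEFAULT_OPUS_MODEL variables, and that the IDs match what your gateway lists in /model (some gateways want a vendor prefix such as deepseek/).

```bash
# Hedged sketch: point each Claude Code model tier at a gateway model ID.
# Assumes these ANTHROPIC_DEFAULT_*_MODEL variables are supported by your
# Claude Code version; IDs are the ofox catalog names used in this guide.
export ANTHROPIC_DEFAULT_SONNET_MODEL="claude-sonnet-4.6"  # daily coding default
export ANTHROPIC_DEFAULT_HAIKU_MODEL="deepseek-v3.2"       # bulk reads, boilerplate
export ANTHROPIC_DEFAULT_OPUS_MODEL="claude-opus-4.6"      # architecture decisions
```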

ofox does not charge per-model subscription fees — you pay per token at rates typically 20-70% below direct provider pricing.

OpenAI

OpenAI’s API (api.openai.com) uses its own protocol, so Claude Code cannot call it natively — same constraint as DeepSeek. The workable approaches:

Via ofox (simplest): With ofox as your backend, select gpt-5.4 or gpt-5.4-pro from the /model picker. GPT-5.4 is OpenAI’s current flagship and handles coding tasks competently, though it lacks the tool-use precision that Claude models have in Claude Code’s agent loop.

Via OpenRouter: Same gateway pattern — configure OpenRouter as the backend, select openai/gpt-5.4 from the model list.

Via LiteLLM (self-hosted): Run a local LiteLLM proxy that translates Anthropic-format requests to OpenAI-format:

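```bash
export OPENAI_API_KEY="sk-your-openai-key"         # upstream key LiteLLM uses
litellm --model openai/gpt-5.4 --port 4000         # requires: pip install 'litellm[proxy]'
export ANTHROPIC_BASE_URL="http://localhost:4000"  # run in a second terminal
```

The self-hosted approach eliminates gateway markup but requires maintaining a local server and managing your own OpenAI API key.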

OpenRouter

OpenRouter is the largest independent model router, with an Anthropic-compatible Messages endpoint that Claude Code reaches through the base URL https://openrouter.ai/api. It supports 300+ models across every major provider.

```bash
export ANTHROPIC_BASE_URL="https://openrouter.ai/api"
export ANTHROPIC_API_KEY="sk-or-v1-your-openrouter-key"
```

Key differences from direct Anthropic:

- Credit system: You pre-purchase credits; a 5.5% fee applies on top of the purchase amount

- Latency overhead: Expect 100-150ms additional round-trip time compared to direct provider connections

- Protocol coverage: OpenRouter supports the core Anthropic Messages API, but some features (cache_control write tokens, fine-grained tool streaming) may behave differently depending on the underlying model
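To see how much latency overhead shows up on your own network, you can time plain requests to each host. This sketch (hypothetical avg_rtt_ms and mean_ms helpers) uses curl’s %{time_total} write-out variable; it measures DNS, connection setup, and one HTTP round trip, not model inference, so treat the difference between hosts as a rough estimate.

```bash
#!/usr/bin/env bash
# Hypothetical helpers for a rough latency comparison between endpoints.
# Times plain HTTPS requests, not model calls.

# Read one duration in seconds per line; print the mean in milliseconds.
mean_ms() {
  awk '{ sum += $1 } END { if (NR > 0) printf "%.0f\n", sum / NR * 1000 }'
}

# Time N requests to URL with curl's %{time_total} and print the mean in ms.
avg_rtt_ms() {
  local url="$1" n="${2:-5}" i
  for ((i = 0; i < n; i++)); do
    curl -s -o /dev/null -w '%{time_total}\n' "$url"
  done | mean_ms
}

# Usage (makes real network requests):
#   avg_rtt_ms https://api.anthropic.com 5
#   avg_rtt_ms https://openrouter.ai/api 5
```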

OpenRouter is strongest when you need access to models from providers you don’t have direct API keys for. For pure cost optimization on Claude models, dedicated gateways with lower overhead (like ofox) usually come out cheaper. See our OpenRouter alternatives comparison for a detailed cost breakdown.

Picking the Right Model Per Task

Not every token needs Opus. Here’s a decision framework based on the routing patterns Claude Code users on Reddit have converged on:

| Task Type | Recommended Model | Why |
|---|---|---|
| File reads, git status, grep | Claude Haiku 4 or DeepSeek V3.2 | Sub-second latency, negligible cost |
| Boilerplate, scaffolding, CRUD | DeepSeek V3.2 or Kimi K2 | Fraction of Claude pricing, comparable output for routine code |
| Bug fixes, simple refactors | Claude Sonnet 4.6 | Fast, reliable, rarely needs correction |
| Architecture, cross-file changes | Claude Opus 4.6 | Only model that consistently handles multi-step reasoning without drift |
| Security review, performance audit | Claude Opus 4.6 | Cannot afford false negatives |
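The table can be turned into a small lookup so each session starts on the right model. cc_model_for_task and its category names are hypothetical, the IDs are the ofox catalog names used in this guide, and launching with ANTHROPIC_MODEL assumes your Claude Code version reads that variable at startup.

```bash
#!/usr/bin/env bash
# Hypothetical helper: map a task category from the routing table to a model ID.
# IDs are the ofox catalog names used in this guide; other gateways may need
# a vendor prefix (for example deepseek/deepseek-v3.2).
cc_model_for_task() {
  case "$1" in
    read | grep | status)            echo "claude-haiku-4" ;;
    boilerplate | scaffold | crud)   echo "deepseek-v3.2" ;;
    bugfix | refactor)               echo "claude-sonnet-4.6" ;;
    architecture | security | audit) echo "claude-opus-4.6" ;;
    *)                               echo "claude-sonnet-4.6" ;;  # daily default
  esac
}

# Usage (assumes Claude Code reads ANTHROPIC_MODEL):
#   ANTHROPIC_MODEL="$(cc_model_for_task crud)" claude
```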

The key insight from the hybrid routing pattern that Reddit popularized: roughly 40% of tokens go to file operations, 25% to boilerplate, 20% to simple edits, and only 15% genuinely require Opus-level reasoning. Route the first 85% through cheap models and you keep Opus quality where it matters while cutting your total bill by roughly 80% compared with running everything on Opus. For a deeper cost analysis of running DeepSeek inside Claude Code, see our 30-day, 100M-token test.
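The arithmetic behind that figure, as a sketch: let $p$ be the share of tokens that still go to Opus and $r$ the cheap model’s price as a fraction of Opus’s. Taking $p = 0.15$ from the split above and $r = 0.02$ (the conservative 50x DeepSeek gap cited earlier), and ignoring the difference between input and output pricing:

```latex
\begin{aligned}
\frac{C_{\text{blend}}}{C_{\text{Opus}}} &= p + (1 - p)\,r \\
  &= 0.15 + 0.85 \times 0.02 = 0.167 \\
\text{savings} &= 1 - 0.167 \approx 83\%
\end{aligned}
```

The first line also gives the ceiling: as $r \to 0$, savings approach $1 - p = 85\%$ for this token mix, so no cheap tier can beat that.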

Pitfalls That Break Your Setup

1. The Protocol Mismatch Trap

Not every provider that says “Claude Code compatible” actually speaks the Anthropic Messages API. If you set ANTHROPIC_BASE_URL to an OpenAI-format endpoint (like https://api.deepseek.com/v1), Claude Code will send Anthropic-format requests and get back 400 errors or garbled responses. Always verify the endpoint explicitly supports the /v1/messages path with Anthropic-format request bodies.
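One way to run that verification from the shell: derive the exact URL Claude Code will call and send it one tiny Anthropic-format request. This is a sketch with hypothetical helpers (cc_messages_url, cc_probe_messages); it assumes Claude Code appends /v1/messages to ANTHROPIC_BASE_URL, and it authenticates with the x-api-key and anthropic-version headers Anthropic’s API uses, which some gateways replace with an Authorization: Bearer header.

```bash
#!/usr/bin/env bash
# Hypothetical helpers. Assumption: Claude Code appends /v1/messages
# to ANTHROPIC_BASE_URL.

# Print BASE/v1/messages; fail if BASE already ends in /v1 (it would double up).
cc_messages_url() {
  local base="${1%/}"
  case "$base" in
    */v1)
      echo "warning: $base ends in /v1; requests would go to $base/v1/messages" >&2
      return 1 ;;
  esac
  printf '%s/v1/messages\n' "$base"
}

# Send one minimal Messages API request and print the body plus HTTP status.
cc_probe_messages() {
  local model="$1" url body
  [ -n "$model" ] || { echo "usage: cc_probe_messages MODEL" >&2; return 2; }
  url="$(cc_messages_url "${ANTHROPIC_BASE_URL:-https://api.anthropic.com}")" || return 1
  body=$(printf '{"model":"%s","max_tokens":16,"messages":[{"role":"user","content":"ping"}]}' "$model")
  curl -sS "$url" \
    -H "x-api-key: ${ANTHROPIC_API_KEY:-}" \
    -H "anthropic-version: 2023-06-01" \
    -H "content-type: application/json" \
    -d "$body" \
    -w '\nHTTP %{http_code}\n'
}
```

A healthy endpoint answers cc_probe_messages deepseek/deepseek-v3.2 with HTTP 200 and a JSON body containing a content array; a 400 or 404 points to the protocol mismatch described above.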

2. The Tool Call Black Hole

Claude Code’s agent loop depends on tool calls — reading files, running bash commands, searching code. Some gateway setups silently drop tool call responses. The symptom: Claude Code appears to work but “forgets” file contents or fails to execute commands. Run /status and confirm tool calling is listed as available. If a provider doesn’t support tool streaming, set CLAUDE_CODE_ENABLE_FINE_GRAINED_TOOL_STREAMING=0 to fall back to non-streaming mode.
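To test tool calls outside Claude Code, you can force a single tool call and inspect the raw response. This sketch uses the Messages API tool format (a tools array with an input_schema, plus tool_choice naming one tool), so a compliant endpoint should stop with stop_reason "tool_use". The read_file tool and both helper names are hypothetical, and the x-api-key header may need to become a Bearer token on some gateways.

```bash
#!/usr/bin/env bash
# Hypothetical helpers: force one tool call and check the stop reason.

# Succeeds if the JSON on stdin reports stop_reason "tool_use".
reports_tool_use() {
  grep -Eq '"stop_reason"[[:space:]]*:[[:space:]]*"tool_use"'
}

cc_probe_tools() {
  local model="$1" base="${ANTHROPIC_BASE_URL:-https://api.anthropic.com}" body
  [ -n "$model" ] || { echo "usage: cc_probe_tools MODEL" >&2; return 2; }
  # read_file is a made-up tool that exists only for this probe.
  body=$(cat <<EOF
{
  "model": "$model",
  "max_tokens": 256,
  "tools": [{
    "name": "read_file",
    "description": "Read a file from disk",
    "input_schema": {
      "type": "object",
      "properties": {"path": {"type": "string"}},
      "required": ["path"]
    }
  }],
  "tool_choice": {"type": "tool", "name": "read_file"},
  "messages": [{"role": "user", "content": "Read README.md"}]
}
EOF
)
  if curl -sS "${base%/}/v1/messages" \
       -H "x-api-key: ${ANTHROPIC_API_KEY:-}" \
       -H "anthropic-version: 2023-06-01" \
       -H "content-type: application/json" \
       -d "$body" | reports_tool_use; then
    echo "tool calls come back intact"
  else
    echo "no tool_use in the response: check the gateway's tool support"
  fi
}
```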

3. The Silent Extended Thinking Drop

Claude Code uses extended thinking (thinking.budget_tokens) for complex tasks. Some proxies accept the parameter but ignore it — Claude Code thinks it’s getting extended reasoning while actually receiving standard responses. Verify with /status that extended thinking shows as enabled, and test with a known hard problem: if the response quality drops noticeably, extended thinking isn’t actually running.
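A more direct test than judging answer quality is to look for thinking blocks in the raw response. With extended thinking enabled, the Messages API returns content blocks of type thinking (or redacted_thinking) ahead of the text; a proxy that drops the parameter returns text only. The helpers below are hypothetical, and the request assumes the model behind your gateway accepts a thinking object with budget_tokens (at least 1024 and below max_tokens).

```bash
#!/usr/bin/env bash
# Hypothetical helpers: request a thinking budget and look for thinking blocks.

# Succeeds if the JSON on stdin contains a thinking or redacted_thinking block.
has_thinking_block() {
  grep -Eq '"type"[[:space:]]*:[[:space:]]*"(redacted_)?thinking"'
}

cc_probe_thinking() {
  local model="$1" base="${ANTHROPIC_BASE_URL:-https://api.anthropic.com}" body
  [ -n "$model" ] || { echo "usage: cc_probe_thinking MODEL" >&2; return 2; }
  body=$(printf '{"model":"%s","max_tokens":2048,"thinking":{"type":"enabled","budget_tokens":1024},"messages":[{"role":"user","content":"Is 1001 prime? Answer in one line."}]}' "$model")
  if curl -sS "${base%/}/v1/messages" \
       -H "x-api-key: ${ANTHROPIC_API_KEY:-}" \
       -H "anthropic-version: 2023-06-01" \
       -H "content-type: application/json" \
       -d "$body" | has_thinking_block; then
    echo "thinking blocks present: extended thinking is running"
  else
    echo "no thinking block: the proxy may be ignoring the parameter"
  fi
}
```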

4. The Model Name Mismatch

Gateway model IDs don’t always match what Claude Code expects. DeepSeek’s own API calls its chat model deepseek-chat, while OpenRouter lists it as deepseek/deepseek-chat, and Claude Code must be given the gateway’s ID. If /model doesn’t show your expected model, use ANTHROPIC_CUSTOM_MODEL_OPTION to add it:

```bash
export ANTHROPIC_CUSTOM_MODEL_OPTION="deepseek/deepseek-chat"
export ANTHROPIC_CUSTOM_MODEL_OPTION_NAME="DeepSeek V3.2"
```

The One-Liner Switching Pattern

Once you’ve configured each provider, create shell aliases for instant switching:

```bash
alias cc-ofox='export ANTHROPIC_BASE_URL="https://api.ofox.ai/anthropic" && export ANTHROPIC_API_KEY="$OFOX_API_KEY"'
alias cc-openrouter='export ANTHROPIC_BASE_URL="https://openrouter.ai/api" && export ANTHROPIC_API_KEY="$OPENROUTER_API_KEY"'
```

Type cc-ofox && claude and you’re in. Each alias takes under a second to execute. Keep your API keys in environment variables (not hardcoded in aliases), and use a password manager or a ~/.secrets file sourced by your shell profile.
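If the aliases multiply, a single function can replace them and refuse to switch when a key is missing. This is a sketch: cc_use is hypothetical, it reads the same OFOX_API_KEY and OPENROUTER_API_KEY variables as the aliases, it uses the OpenRouter base URL without a trailing /v1 (assuming Claude Code appends /v1/messages), and its anthropic option simply unsets both overrides, on the assumption that Claude Code then falls back to its own default endpoint and stored login.

```bash
#!/usr/bin/env bash
# Hypothetical helper: one switch command instead of one alias per provider.
# Refuses to switch if the selected provider's key variable is empty.
cc_use() {
  local url="" key=""
  case "$1" in
    ofox)       url="https://api.ofox.ai/anthropic"; key="${OFOX_API_KEY:-}" ;;
    openrouter) url="https://openrouter.ai/api";     key="${OPENROUTER_API_KEY:-}" ;;
    anthropic)
      # Back to Anthropic direct: drop the overrides entirely.
      unset ANTHROPIC_BASE_URL ANTHROPIC_API_KEY
      echo "Claude Code backend -> Anthropic default"
      return 0 ;;
    *)
      echo "usage: cc_use {ofox|openrouter|anthropic}" >&2
      return 2 ;;
  esac
  if [ -z "$key" ]; then
    echo "cc_use: no API key set for '$1'" >&2
    return 1
  fi
  export ANTHROPIC_BASE_URL="$url" ANTHROPIC_API_KEY="$key"
  echo "Claude Code backend -> $ANTHROPIC_BASE_URL"
}
```

With this in your profile, cc_use openrouter && claude starts a session on OpenRouter, and cc_use anthropic clears the overrides again.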

For a GUI option, CC Switch (brew install --cask cc-switch) provides a visual provider manager with one-click activation and automatic settings.json persistence. It’s the same tool covered in our Claude Code custom API setup guide.

The bottom line: Claude Code’s backend is a configuration choice, not a vendor lock-in. Two environment variables are all it takes to cut your monthly AI coding bill by up to 80% while keeping the same terminal workflow. Pick a provider, set the URL and key, and you’re routing: no migration, no retraining, no workflow change.