Claude Code Token Optimization 2026: 5 Strategies That Cut Your API Bill by 60-90%

TL;DR: Claude Code can burn through a four-figure API bill over a weekend if you let it stream raw file contents and reload the same system prompt every turn. Five techniques actually shift the cost curve in production: aggressive prompt caching with the right TTL, disciplined use of /compact and /clear, Sonnet-default routing with selective Opus escalation, isolated subagents for high-context work, and trimming MCP tool bloat. Teams running all five report 60–90% reductions on real workloads.

The bill isn’t an Anthropic problem. It’s a workflow problem. Claude Code happily re-reads your entire repo on every turn unless you tell it not to.

Why your Claude Code bill is bigger than your Cursor bill

Cursor charges a flat $20. Claude Code charges per token, and a hot session with three MCP servers loaded, no prompt caching, and Opus on default routing will incinerate $100 of credits before lunch. The fix isn’t a different agent. The fix is understanding what Claude Code actually sends on the wire.

Every turn ships:

- The system prompt (Claude Code’s internal instructions, large and stable, perfect cache candidate)

- The full conversation history so far

- Every MCP tool’s schema (whether or not you’ll use it this turn)

- File contents you read with the Read tool (re-sent on every subsequent turn)

- Your actual message

The system prompt, the MCP tool schemas, and the re-sent file contents (items 1, 3, and 4) are the ones bleeding you. The five strategies below kill each leak in turn.
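To make the per-turn payload concrete, here is a rough sketch of how those components add up over a session. Apart from the 55K tool-schema figure cited later in this article, every token count below is a made-up illustration, and the linear history growth is a simplifying assumption rather than a measurement of Claude Code.

```python
from dataclasses import dataclass


@dataclass
class TurnPayload:
    """Rough token counts for what one turn re-sends (illustrative values)."""
    system_prompt: int
    history: int
    tool_schemas: int
    file_contents: int
    user_message: int

    def total(self) -> int:
        return (self.system_prompt + self.history + self.tool_schemas
                + self.file_contents + self.user_message)


def session_input_tokens(turns: int, fixed: int, growth_per_turn: int) -> int:
    """Total input tokens over a session when every turn re-sends a fixed
    prefix plus a history that grows by growth_per_turn tokens each turn."""
    return sum(fixed + turn * growth_per_turn for turn in range(turns))


# Hypothetical session: the stable prefix dwarfs the actual user message.
payload = TurnPayload(system_prompt=15_000, history=20_000, tool_schemas=55_000,
                      file_contents=30_000, user_message=200)
print(payload.total())
print(session_input_tokens(turns=40, fixed=100_000, growth_per_turn=2_000))
```

The point of the second function is that input cost grows with the square of session length when nothing is cached or compacted, because every turn pays again for everything before it.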

Strategy 1: Prompt caching, pay 10% for everything you re-read

Cache hits cost 0.1× standard input on Anthropic’s published pricing, a flat 90% discount on cached tokens. The catch is that cache writes cost more: 1.25× standard input for the 5-minute TTL, or 2× for the 1-hour TTL. That math has a hard implication: 5-minute caching pays off after a single hit; 1-hour caching needs at least two hits to break even.
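The break-even claim can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the multipliers quoted above (0.1× reads, 1.25× or 2× writes) and assumes the cached block is written once and never expires between hits; the function name is ours.

```python
import math

READ_MULTIPLIER = 0.1  # cache read price relative to standard input


def break_even_hits(write_multiplier: float,
                    read_multiplier: float = READ_MULTIPLIER) -> int:
    """Smallest number of cache hits after which caching is strictly cheaper.

    Without caching, 1 initial send + h re-sends cost (1 + h) units.
    With caching, they cost write_multiplier + h * read_multiplier units.
    """
    premium = write_multiplier - 1.0        # extra paid on the cache write
    saving_per_hit = 1.0 - read_multiplier  # saved on each later read
    return math.floor(premium / saving_per_hit) + 1


print(break_even_hits(1.25))  # 5-minute TTL
print(break_even_hits(2.0))   # 1-hour TTL
```

If the cache expires and has to be rewritten mid-session, each rewrite pays the premium again, so real break-even points can be higher than this idealized figure.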

For Claude Sonnet 4.6 (input $3/M), a cache read is $0.30/M instead of $3/M. For Claude Opus 4.7 (input $5/M on ofox.ai), a cache read is $0.50/M instead of $5/M. On a 50,000-token system prompt that you hit 40 times in a session, you’ve saved roughly $5.40 on Sonnet ($6.00 uncached versus $0.60 in cache reads), or about $5.36 after the one-time 1.25× write premium, and that’s just one cached block.
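For your own prompt sizes and hit counts, the same arithmetic can be wrapped in a small helper. This sketch assumes a single cache write followed by `hits` reads with no expiry in between, and takes the per-million input price as a parameter; the function name and defaults are illustrative.

```python
def caching_savings_usd(prompt_tokens: int, hits: int, input_price_per_m: float,
                        write_multiplier: float = 1.25,
                        read_multiplier: float = 0.1) -> float:
    """Dollars saved by caching one block written once and read `hits` times."""
    base = prompt_tokens / 1_000_000 * input_price_per_m  # one uncached send
    uncached = base * (1 + hits)
    cached = base * (write_multiplier + hits * read_multiplier)
    return uncached - cached


# 50,000-token prompt, 40 cache hits, at the Sonnet and Opus input prices above.
print(round(caching_savings_usd(50_000, 40, 3.0), 2))
print(round(caching_savings_usd(50_000, 40, 5.0), 2))
```

Passing `write_multiplier=2.0` models the 1-hour TTL, which only comes out ahead when the longer lifetime avoids repeated rewrites.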

What to cache:

- System prompts and CLAUDE.md content: large, stable, hit every turn

- Tool definitions: same payload turn after turn

- Reference documents you’ll re-query: schemas, design docs, the codebase tree

- Few-shot examples: perfect static payload

How to enable it in raw SDK calls:

    import anthropic

    client = anthropic.Anthropic()  # assumes credentials are configured in the environment

    response = client.messages.create(
        model="anthropic/claude-sonnet-4.6",
        max_tokens=1024,  # required by the Messages API
        system=[
            {
                "type": "text",
                "text": large_system_prompt,
                "cache_control": {"type": "ephemeral"},  # default 5m TTL
            }
        ],
        messages=conversation,
    )

For the 1-hour variant, only switch when your telemetry justifies it:

    "cache_control": {"type": "ephemeral", "ttl": "1h"}

The March 2026 cache regression: check your bills

If your Claude Code bill jumped 20–30% in early March without anything in your workflow changing, you weren’t imagining it. Anthropic silently rolled back the default 1-hour TTL that Claude Code had been using since February, reverting to 5-minute. Long async sessions that previously reused a single cache write started writing the cache every five minutes instead, inflating cache creation costs by a measured 20–32%.

The fix on the user side: explicitly request 1h TTL in your config if your workload pattern justifies it, and monitor cache hit ratio through the API response’s cache_creation_input_tokens and cache_read_input_tokens fields. If reads outnumber writes by more than 2:1, you’re winning. If not, your cache strategy is upside down.
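One way to track that ratio is to sum the two usage fields across a session. The sketch below assumes you have collected each response's `usage` object as a plain dict (for example via `response.usage.model_dump()` in the Python SDK, which is an assumption about your client version) and that the 2:1 rule is measured in tokens rather than request counts.

```python
def cache_read_write_ratio(usages: list) -> float:
    """Ratio of cached tokens read to cached tokens written across responses.

    Each item is one response's usage data as a dict. Missing or None
    fields are treated as zero.
    """
    written = sum(u.get("cache_creation_input_tokens") or 0 for u in usages)
    read = sum(u.get("cache_read_input_tokens") or 0 for u in usages)
    if written == 0:
        return float("inf") if read else 0.0
    return read / written


# Hypothetical session: one cache write followed by three hits.
session = [
    {"cache_creation_input_tokens": 50_000, "cache_read_input_tokens": 0},
    {"cache_creation_input_tokens": 0, "cache_read_input_tokens": 50_000},
    {"cache_creation_input_tokens": 0, "cache_read_input_tokens": 50_000},
    {"cache_creation_input_tokens": 0, "cache_read_input_tokens": 50_000},
]
ratio = cache_read_write_ratio(session)
print("winning" if ratio > 2 else "cache strategy is upside down")
```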

Strategy 2: /compact early, /clear often

Claude Sonnet 4.6 and Opus 4.7 now ship with a 1M token context window at standard pricing (generally available since March 13, 2026 — no beta header, no premium tier required). That sounds like room to breathe, but the naive failure mode is the same as before: letting a session drift toward the auto-compact trigger and getting a bad summary because the context is already noisy.

/compact summarizes the conversation and replaces the raw history with that summary. The earlier you trigger it, the better the summary, because Claude has more headroom to retain what matters and drop what doesn’t. A reasonable trigger threshold is ~60% context utilization, not 90%. Many engineers also pass custom hints: /compact Focus on code samples and API usage.

/clear wipes everything. No summary, no carryover. Use it when you switch to genuinely unrelated work. Finishing a frontend bug and starting on a migration script is a /clear moment, not a /compact moment. Carrying stale context into unrelated work means you’re paying input tokens on irrelevant history forever.

A working discipline:

- Same feature, ongoing thread → keep going

- New ticket, same area of the codebase → /compact first, narrate a one-line preface

- New domain entirely → /clear
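The discipline above, combined with the ~60% utilization trigger, can be written down as a tiny decision function. This is only a sketch of the rule of thumb: the function name, the boolean inputs, and the idea of checking utilization programmatically are our assumptions, not a Claude Code feature.

```python
COMPACT_THRESHOLD = 0.60  # the ~60% utilization trigger suggested in this section


def session_action(same_feature: bool, same_area: bool,
                   tokens_used: int, context_window: int) -> str:
    """Return 'continue', '/compact', or '/clear' for the next piece of work."""
    if not same_feature and not same_area:
        return "/clear"      # new domain entirely
    if not same_feature:
        return "/compact"    # new ticket, same area of the codebase
    if tokens_used / context_window >= COMPACT_THRESHOLD:
        return "/compact"    # same thread, but the context is filling up
    return "continue"


print(session_action(True, True, tokens_used=650_000, context_window=1_000_000))
```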

KDnuggets’ 7 practical ways to reduce Claude Code token usage places proactive compaction in the top three levers — the qualitative point is that compacting early, while the conversation is still clean, produces a usable summary; compacting at 90%+ produces noise. Anthropic’s own cost guide recommends the same pattern.

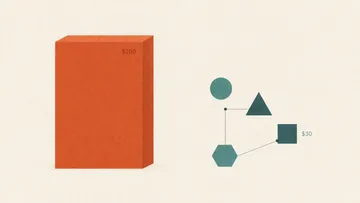

Strategy 3: Default Sonnet 4.6, escalate to Opus 4.7 only when stuck

The single highest-leverage configuration change in Claude Code is /model claude-sonnet-4.6. Sonnet 4.6 is genuinely strong on coding tasks; Anthropic’s own benchmarks have it close to Opus on SWE-bench while running at 60% of the input price and 60% of the output price.

On ofox.ai pricing:

| Model | Input ($/M) | Output ($/M) |
|---|---|---|
| Claude Sonnet 4.6 | $3 | $15 |
| Claude Opus 4.6 | $5 | $25 |
| Claude Opus 4.7 | $5 | $25 |
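To see what those rates mean for a single request, the sketch below prices the same token counts on both models. The prices come from the table; the dictionary keys are just labels for this example, not verified API model IDs, and the token counts are hypothetical.

```python
PRICES_PER_M = {  # (input, output) in USD per million tokens, from the table
    "claude-sonnet-4.6": (3.0, 15.0),
    "claude-opus-4.7": (5.0, 25.0),
}


def request_cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Uncached cost of one request at the listed per-million rates."""
    input_price, output_price = PRICES_PER_M[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical agentic turn: 200K tokens in, 8K tokens out.
sonnet = request_cost_usd("claude-sonnet-4.6", 200_000, 8_000)
opus = request_cost_usd("claude-opus-4.7", 200_000, 8_000)
print(f"Sonnet ${sonnet:.2f} vs Opus ${opus:.2f}")
```

Because both the input and output rates sit at 60% of Opus, the ratio holds regardless of the input/output mix.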

When to actually reach for Opus 4.7:

- Cross-file architectural decisions where you need consistency across many edits

- Hard debugging where Sonnet has already failed once on the same problem

- Schema or API contract design where mistakes propagate to downstream consumers

- Migration logic where edge cases matter and Sonnet has been hallucinating column names

When Sonnet is the correct default:

- Edits to a known file

- Test writing

- Refactors with clear scope

- Code review and explanation

- Most agentic loops (file reads, greps, simple edits)

A practical pattern is the “Sonnet does the legwork, Opus does the thinking” split: Sonnet handles the actual file operations, and you only /model opus for design discussions and stuck moments. See our Claude Code hybrid routing pattern for the configuration details. Our Claude Opus 4.7 API review covers when 4.7’s improvements over 4.6 are worth paying for and when they aren’t.
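If you script model selection outside the CLI, the escalation rule might look like the following. This is a hypothetical heuristic, not the routing configuration referenced above: the task categories, the failure counter, and the model labels are all assumptions made for illustration.

```python
# Task types that go straight to Opus under this (hypothetical) policy.
OPUS_TASKS = {"architecture", "schema_design", "api_contract_design"}


def pick_model(task_type: str, sonnet_failures: int = 0) -> str:
    """Default to Sonnet; escalate for design work or after a failed attempt."""
    if task_type in OPUS_TASKS or sonnet_failures >= 1:
        return "claude-opus-4.7"
    return "claude-sonnet-4.6"


print(pick_model("test_writing"))
print(pick_model("debugging", sonnet_failures=1))
```

Escalating only after a recorded failure keeps Opus spend tied to the "stuck moments" rather than to a guess about difficulty up front.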

Strategy 4: Isolate verbose work in subagents

A subagent is a Claude instance that runs in its own context window. The parent invokes it with a task, the subagent burns through its own context doing the work, and only a summary returns to the parent. Net result: 50K tokens of grep output never poisons your main thread.

Where subagents pay off:

- Codebase exploration: “find all callers of processPayment and summarize their patterns”

- Large file reads: reading a 10K-line vendored library to extract one API surface

- Research tasks: web search results, doc dumps, long log analysis

- Test failure investigation: parsing 50KB of pytest output

Where subagents waste tokens:

- Trivial shell commands (the spawn overhead is more than the task)

- Single-file edits the parent could do directly

- Anything where the parent will need the full output anyway

The cost trap: a subagent-heavy workflow can hit roughly 7× the token cost of a single-thread session because each subagent reloads system prompts and tool definitions. Reserve subagents for tasks where the verbose intermediate output is genuinely throwaway. If the parent is going to need the raw output, doing the work inline is cheaper.
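A back-of-the-envelope model helps decide when a subagent is worth the spawn overhead. The sketch below is deliberately simplified: it ignores caching and the subagent's own multi-turn re-sends, and the overhead and summary sizes are hypothetical placeholders you would replace with your own measurements.

```python
def inline_cost(verbose_tokens: int, remaining_turns: int) -> int:
    """Input tokens paid when verbose output stays in the parent context
    and is re-sent on every remaining turn."""
    return verbose_tokens * remaining_turns


def subagent_cost(verbose_tokens: int, spawn_overhead: int,
                  summary_tokens: int, remaining_turns: int) -> int:
    """Input tokens paid when a subagent absorbs the verbose output:
    one reload of prompts and tools, one pass over the output, then only
    the summary is re-sent on the parent's remaining turns."""
    return spawn_overhead + verbose_tokens + summary_tokens * remaining_turns


# 50K tokens of grep output with 20 parent turns still to go.
print(inline_cost(50_000, 20), subagent_cost(50_000, 30_000, 1_000, 20))
# A trivial command: the spawn overhead dominates.
print(inline_cost(500, 20), subagent_cost(500, 30_000, 200, 20))
```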

Our Claude Code hooks, subagents, and skills guide covers the configuration in depth, including custom subagent definitions for repeated tasks.

Strategy 5: Audit your MCP servers, most aren’t worth their token tax

This is the most under-discussed lever. Modern Claude Code defers MCP tool definitions by default — only tool names enter context until Claude actually calls a specific tool — but the savings only materialise if you actually keep your server list lean. Real-world numbers from Anthropic’s own advanced tool use writeup:

- A five-server setup with 58 tools: ~55K tokens of overhead in the traditional preload model

- Jira MCP alone: ~17K tokens

- Anthropic’s own internal testing pre-optimization: 134K tokens of tool definitions

- With Tool Search enabled: 58 tools collapse to ~8.7K total context consumption, an ~84% reduction

On an older client without lazy loading, that overhead is tax paid before you’ve done anything: 55K tokens is more than a quarter of a 200K-token context window. Even with deferred definitions, every connected MCP server still adds tool names to the system prompt and increases the surface area Claude has to reason about.
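The figures above translate into dollars fairly directly. This sketch recomputes the reduction from the two quoted token counts and prices the overhead as uncached input re-sent on every turn; the 40-turn session length and the $3/M rate applied here are illustrative assumptions.

```python
def reduction_pct(before_tokens: int, after_tokens: int) -> float:
    """Percentage drop in tool-definition overhead."""
    return (before_tokens - after_tokens) / before_tokens * 100


def overhead_cost_usd(overhead_tokens: int, turns: int,
                      input_price_per_m: float) -> float:
    """Uncached cost of re-sending tool definitions on every turn."""
    return overhead_tokens * turns * input_price_per_m / 1_000_000


print(f"{reduction_pct(55_000, 8_700):.1f}% reduction")
print(f"${overhead_cost_usd(55_000, 40, 3.0):.2f} preloaded vs "
      f"${overhead_cost_usd(8_700, 40, 3.0):.2f} with Tool Search")
```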

What to do, ranked by impact:

- Run /context to see your actual tool-definition spend. If you’re surprised, you’re losing money.

- Disable MCP servers you don’t need this session. Most workflows touch 1–2 servers at a time. Run /mcp to see configured servers and disable the rest.

- Confirm Tool Search is active. In current Claude Code, MCP tool definitions are deferred by default; on older clients or direct SDK calls, enable Anthropic’s Tool Search explicitly.

- Prefer CLI tools over MCP servers where they exist. gh, aws, gcloud, and sentry-cli add zero per-tool listing overhead because Claude invokes them through Bash instead of via tool schemas.

- Cache the remaining tool definitions. They’re stable across turns, so they belong in the cached system prefix. This compounds with Strategy 1.

- Don’t connect MCP servers globally if you only need them in one repo. Use project-scoped settings.
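For direct SDK calls, caching the remaining tool definitions usually means placing a cache breakpoint on the last tool, so that the whole tool list is cached as a prefix. The helper below is a minimal sketch of that step; the function name and the example tools are hypothetical, and it only prepares the `tools` list rather than calling the API.

```python
import copy
from typing import Optional


def with_cached_tools(tools: list, ttl: Optional[str] = None) -> list:
    """Return a copy of `tools` with a cache breakpoint on the last definition."""
    if not tools:
        return []
    cached = copy.deepcopy(tools)  # leave the caller's list untouched
    cache_control = {"type": "ephemeral"}
    if ttl is not None:
        cache_control["ttl"] = ttl  # e.g. "1h" for the longer TTL
    cached[-1]["cache_control"] = cache_control
    return cached


# Hypothetical tool definitions for illustration only.
tools = [
    {"name": "search_issues", "description": "Search tickets",
     "input_schema": {"type": "object", "properties": {}}},
    {"name": "read_log", "description": "Read a log file",
     "input_schema": {"type": "object", "properties": {}}},
]
cached_tools = with_cached_tools(tools)
print(cached_tools[-1]["cache_control"])
```

The result would be passed as the `tools` argument of a Messages API request; keep the tool order stable between turns, since a changed prefix invalidates the cache.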

A reasonable rule of thumb: if a tool’s definition costs you more tokens than the tool saves you, it shouldn’t be loaded. The convenience of having every MCP server available “just in case” is paid for in every input token on every turn for the rest of the session.

Putting it together: a 60–90% target is realistic

The savings stack multiplicatively. A team that does all five (caching, compaction discipline, Sonnet-default routing, careful subagent use, and a slimmed MCP loadout) is comparing a $1,600 weekend bill to roughly $200–400 for the same work. The largest individual lever is usually #1 (caching) on long sessions or #3 (Sonnet-default) on short ones. The biggest hidden lever is #5 (MCP audit), because the tokens involved never appear in your conversation.
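"Multiplicatively" here means each lever removes a fraction of whatever bill is left after the previous one. The sketch below shows that arithmetic; the per-lever fractions are invented for illustration, and only the $1,600 and $200–400 figures come from the paragraph above.

```python
from math import prod


def combined_reduction(reductions: list) -> float:
    """Overall fractional saving when each lever removes a fraction of what remains."""
    return 1 - prod(1 - r for r in reductions)


# Hypothetical per-lever savings: caching, compaction, routing, subagents, MCP audit.
levers = [0.30, 0.20, 0.30, 0.10, 0.20]
print(f"{combined_reduction(levers):.0%} combined")

# The bill comparison quoted above, expressed as reductions.
print(f"{1 - 400 / 1600:.1%} to {1 - 200 / 1600:.1%}")
```

Note that five modest levers get you past 70% without any single one exceeding 30%, which is why skipping one lever costs less than it might seem, but skipping three does not.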

If you’re routing through ofox.ai instead of direct Anthropic, you get the cache-aware pricing automatically plus Opus and Sonnet at a discount to direct list price. Our complete guide to reducing AI API costs covers cross-provider patterns, and the Claude Code ofox configuration guide walks through the actual setup. For a cost-aware end-to-end stack, the $30/month AI coding stack guide shows the full pattern.

A subagent doesn’t make Claude Code cheaper. A subagent makes one specific kind of expensive work cheaper. An MCP audit makes everything cheaper. Start there if you want the biggest win in the shortest time.

Sources

- Anthropic Prompt Caching Documentation: cache pricing, TTL options, cache_control syntax

- Anthropic Claude API Pricing: Sonnet, Opus, Haiku base rates

- Claude Code GitHub Issue #46829: March 2026 TTL regression

- Anthropic Engineering: Advanced Tool Use: Tool Search and on-demand tool loading

- ofox.ai Models Catalog: current Claude pricing on the ofox gateway

- Claude Code Cost Management Docs: official optimization guidance