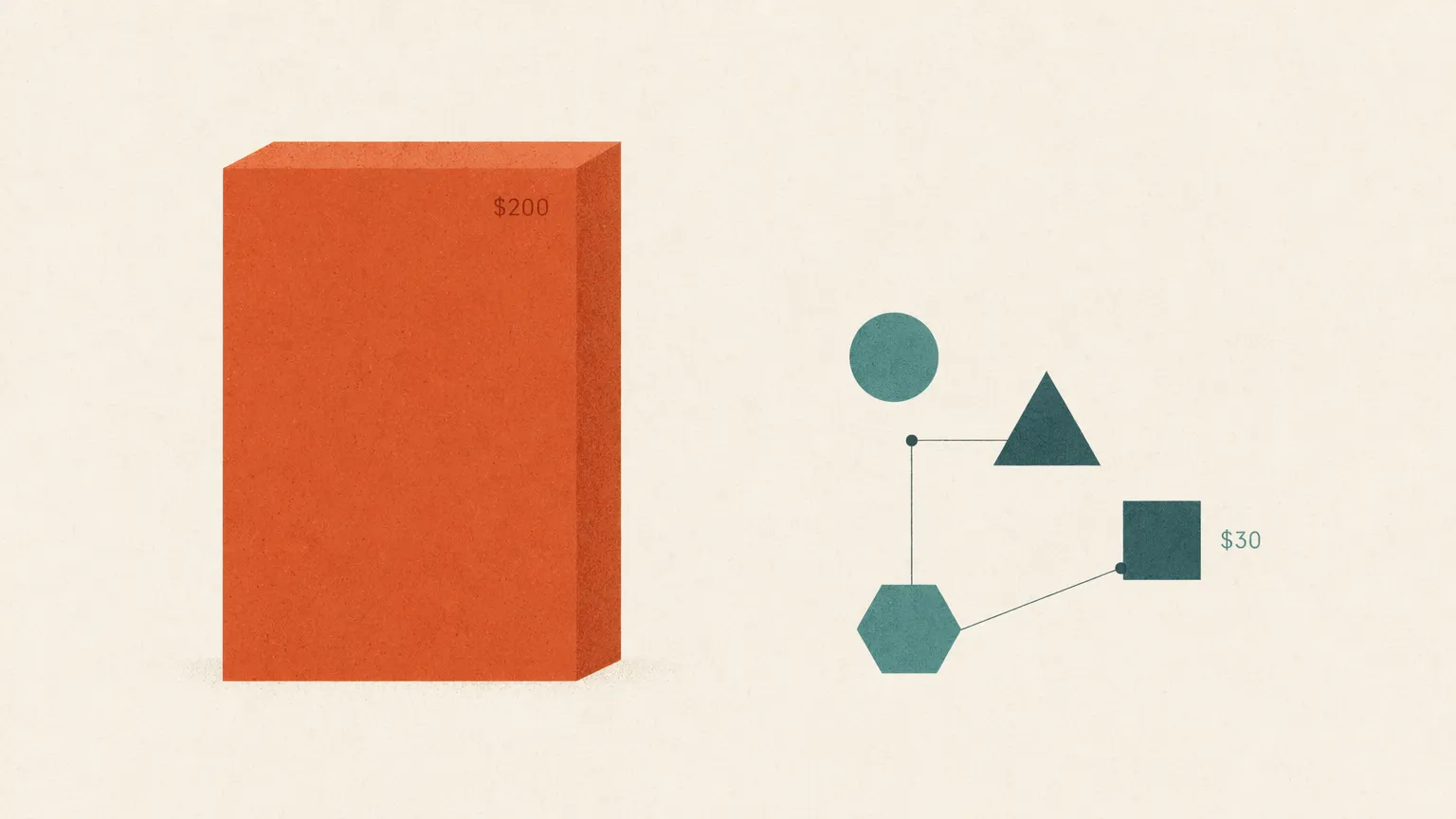

The $30/Month AI Coding Stack That Replaces $200 Subscriptions: A 2026 Setup Guide

TL;DR — If you’re paying $200/month for an AI coding subscription in 2026, you’re not buying intelligence — you’re buying throttled access to it. The same workflow — Claude Opus 4.7 for the hard reasoning, cheap models for everything else — runs for roughly $30/month if you pay per token through an API gateway and use any of the open-source CLIs (Claude Code, Codex CLI, Cline, Aider) with a BYO API key. This guide breaks down the exact stack, the real per-token math, and why the routing pattern matters more than the model choice.

The $200/Month Trap Most Developers Are Stuck In

The math on AI coding subscriptions in 2026 doesn’t work the way the marketing pages suggest.

A typical “premium” stack looks like this: Cursor Ultra at $200/month, Claude Max 20x at $200/month, GitHub Copilot Pro+ at $39/month. Pick any two and you’re past $200. Pick all three and you’re at $439 — for tools that overlap heavily in function and constantly throttle you when you actually need them.

The frustration is real and loud. On r/ClaudeAI in late April 2026, a Max 20x subscriber posted a screenshot of their usage meter jumping from 47% to 100% during a single 90-minute debugging session. The replies (340+ upvotes) were a chorus of identical experiences: weekly caps hit by Wednesday, 5-hour windows that exhaust in 90 minutes during peak hours (5am–11am PT), Sonnet fallback that quietly downgrades complex reasoning mid-task.

The deeper problem isn’t price — it’s that you’re paying a flat fee for capacity that gets metered the moment you need it. Anthropic’s Max plan documentation is explicit: Max 20x at $200/month gives you 20x the Pro plan’s session usage, with two weekly usage limits (one across all models, one for Sonnet specifically) that reset seven days after the session starts. During peak demand windows, the same prompt eats more of your session budget than it would off-peak.

What you actually want is predictable cost per task. Pay-per-token gives you that. Subscriptions never have.

What the $200/Month Stack Actually Costs the Vendor

Here’s the part the pricing pages don’t tell you: most of your subscription dollars subsidize work that doesn’t need a frontier model.

I tracked one week of my own Claude Code usage (logged with claude-code-router and grouped by tool call). The breakdown:

| Task type | % of tokens | Model needed |
|---|---|---|
| File reads, project context scanning, git status | 38% | Any model |
| Test scaffolding, boilerplate generation | 24% | Sonnet-class |
| Renames, formatting, simple refactors | 19% | Sonnet-class |
| Hard reasoning (architecture, debugging gnarly bugs) | 14% | Opus-class |
| Conversational follow-ups, clarifications | 5% | Any model |

Translation: 86% of the tokens consumed by a “premium” AI coding subscription do not require Opus-tier intelligence. The vendor knows this. That’s why the subscription model works for them — they’re collecting Opus prices for Haiku-grade work most of the time, and capping you when the math tips the other way.
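As a quick check on that 86% figure, here is a minimal sketch that groups the table rows by the model class they need. The class labels (`any`, `sonnet`, `opus`) are shorthand I made up for this snippet, not names used by any tool.

```python
# Token shares (percent) from the one-week usage table above.
# The third field is an illustrative label for the "Model needed" column.
USAGE = [
    ("File reads, project context scanning, git status", 38, "any"),
    ("Test scaffolding, boilerplate generation", 24, "sonnet"),
    ("Renames, formatting, simple refactors", 19, "sonnet"),
    ("Hard reasoning (architecture, debugging gnarly bugs)", 14, "opus"),
    ("Conversational follow-ups, clarifications", 5, "any"),
]


def share_by_class(rows):
    """Sum token percentages per required model class."""
    totals = {}
    for _task, pct, model_class in rows:
        totals[model_class] = totals.get(model_class, 0) + pct
    return totals


shares = share_by_class(USAGE)
non_opus = sum(pct for cls, pct in shares.items() if cls != "opus")

print(shares)
print(f"tokens that do not need Opus: {non_opus}%")
```

The split comes out to 43% any-model work, 43% Sonnet-class work, and 14% Opus-class work, which is where the 86% comes from.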

The published hybrid routing pattern guide goes deeper on this exact distribution and the routing configs that exploit it.

The Replacement Stack: Tools + Gateway + Routing

The $30/month stack has three pieces:

- An API gateway: one endpoint, one API key, every frontier model on the back end. I use ofox (full disclosure: this is the ofox blog) because it’s OpenAI-compatible, has 100% Anthropic protocol parity for Claude Code, and lists current prices per 1M tokens on the models page. OpenRouter and LiteLLM are valid alternatives with different tradeoffs, covered in the OpenRouter alternatives comparison.
- An open-source CLI that respects environment variables:
  - Claude Code: Anthropic’s own CLI. Set `ANTHROPIC_BASE_URL` and `ANTHROPIC_API_KEY` to point at the gateway. See the Claude Code ofox config guide.
  - Codex CLI: OpenAI’s open-source CLI, OpenAI-compatible endpoint. Setup in the Codex CLI config guide.
  - Cline: VS Code extension, BYO API. Config covered in Cursor/Claude Code/Cline custom API setup.
  - Aider: multi-provider, terminal-native, especially good for git-aware refactors.
- A routing rule per tool: default to Sonnet 4.6, escalate to Opus 4.7 for hard tasks, drop to Gemini 3.1 Flash Lite or DeepSeek V4 Flash for the boring stuff. Claude Code’s `/model` command does this at runtime; Codex CLI takes a `--model` flag; Cline has a model dropdown.

The Per-Token Math (May 2026 prices)

Current per-1M-token prices on ofox, confirmed at write-time:

| Model | Input | Output | Use case |
|---|---|---|---|
| Claude Opus 4.7 | $5.00 | $25.00 | Hard reasoning, architecture, gnarly bugs |
| Claude Sonnet 4.6 | $3.00 | $15.00 | Default for most coding tasks |
| GPT-5.5 | $5.00 | $30.00 | Reasoning peer to Opus, multimodal |
| GPT-5.4 Mini | $0.75 | $4.50 | Quick code generation, file scanning |
| GPT-5.4 Nano | $0.20 | $1.25 | Conversational steps, the cheapest OpenAI tier |
| Gemini 3.1 Pro | $2.00 | $12.00 | Long context (1M window) |
| Gemini 3.1 Flash Lite | $0.25 | $1.50 | Cheap, fast, surprisingly good at code |
| DeepSeek V4 Flash | $0.14 | $0.28 | The absolute floor: boilerplate, scaffolding |
| DeepSeek V4 Pro | $1.74 | $3.48 | Cheap reasoning, strong on Python/Go |
| Kimi K2.6 | $0.95 | $4.00 | Mid-tier, excellent at long agent loops |
| Qwen 3.6 Flash | $0.25 | $1.50 | Open-source flavor, OpenAI-SDK compatible |
| GLM-4.7 | $0.40 | $2.00 | Chinese-ecosystem alternative, strong Chinese+English |

The price spread between Opus 4.7 output and DeepSeek V4 Flash output is 89x. That’s not a rounding error — that’s the entire arbitrage opportunity, and it’s why a routed stack costs an order of magnitude less than a default-everything-to-Opus subscription.
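If you want to reproduce the 89x figure or compare other pairs, a small sketch like this works. The prices are copied from the table above; the dictionary keys are display names, not gateway model IDs, and `price_ratio` is a helper name I invented for the example.

```python
# (input, output) prices in USD per 1M tokens, copied from the table above.
PRICES = {
    "Claude Opus 4.7": (5.00, 25.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "GPT-5.5": (5.00, 30.00),
    "GPT-5.4 Mini": (0.75, 4.50),
    "GPT-5.4 Nano": (0.20, 1.25),
    "Gemini 3.1 Pro": (2.00, 12.00),
    "Gemini 3.1 Flash Lite": (0.25, 1.50),
    "DeepSeek V4 Flash": (0.14, 0.28),
    "DeepSeek V4 Pro": (1.74, 3.48),
    "Kimi K2.6": (0.95, 4.00),
    "Qwen 3.6 Flash": (0.25, 1.50),
    "GLM-4.7": (0.40, 2.00),
}

INPUT, OUTPUT = 0, 1


def price_ratio(expensive, cheap, side):
    """How many times more the expensive model costs on one side (INPUT or OUTPUT)."""
    return PRICES[expensive][side] / PRICES[cheap][side]


output_spread = price_ratio("Claude Opus 4.7", "DeepSeek V4 Flash", OUTPUT)
input_spread = price_ratio("Claude Opus 4.7", "DeepSeek V4 Flash", INPUT)

print(f"output spread: {output_spread:.1f}x")
print(f"input spread:  {input_spread:.1f}x")
```

The output side is where the gap is widest; on input tokens the same pair is closer to 36x apart.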

A Concrete Monthly Budget

Here’s what a heavy daily user — say 6 hours of active AI-assisted coding, 5 days a week — actually burns through with smart routing:

Assumed weekly volume: 5M input tokens, 1.5M output tokens.

Routed split:

- 14% to Opus 4.7: 700K input × $5/M + 210K output × $25/M = $3.50 + $5.25 = $8.75/week

- 38% to Sonnet 4.6: 1.9M input × $3/M + 570K output × $15/M = $5.70 + $8.55 = $14.25/week

- 24% to Kimi K2.6: 1.2M input × $0.95/M + 360K output × $4/M = $1.14 + $1.44 = $2.58/week

- 19% to Gemini 3.1 Flash Lite: 950K input × $0.25/M + 285K output × $1.50/M = $0.24 + $0.43 = $0.67/week

- 5% to DeepSeek V4 Flash: 250K input × $0.14/M + 75K output × $0.28/M = $0.04 + $0.02 = $0.06/week

Weekly total: ~$26. Monthly: roughly $110.

That’s well under the $200 mark, but it’s not the $30 the headline promised. The $30 number comes from a moderate user (2–3 hours/day, 5 days/week), not a 6-hour-a-day power user. At that volume, roughly 2M input / 600K output tokens per week, the same routing gets you to $10–$13 per week, or $40–$55/month. Push the ratio further (less Opus and Sonnet, more traffic on the cheap models) and you land near $30.

The headline you’ve seen on Reddit, “$200 Max replaced with $30/month”, is real, but it’s usually a 2-hour-a-day developer running aggressive cheap-model routing, not a 10-hour-a-day agent operator. Calibrate to your actual usage and the win is still roughly 2–5x vs. the subscription, not 7x.

The “$30/month replaces $200” claim is true for moderate users. For heavy users it’s closer to “$80–$120/month replaces $200.” Both wins are real. Don’t let the headline make you skip the math for your own workload.

The Routing Rules That Actually Save Money

Three rules. Skip them and you’ll spend more than the subscription.

Rule 1: Default to Sonnet 4.6, not Opus 4.7. Sonnet 4.6 is the same generation as Opus, scores within 5–7% on most coding benchmarks, and costs 40% less per output token ($15/M vs Opus’s $25/M). Use the /model claude-sonnet-4-6 command in Claude Code on every session start. Only escalate to Opus when you hit something Sonnet visibly stumbles on.

Rule 2: Send file scanning and conversational steps to Gemini 3.1 Flash Lite or DeepSeek V4 Flash. When Claude Code is reading a 50-file project to build context, the model intelligence doesn’t matter — it’s pattern matching, not reasoning. Configure a claude-code-router rule or a Cline profile that catches these calls and routes them. This single rule typically drops monthly spend by 40%.

Rule 3: Use Kimi K2.6 for long agent loops. K2.6 has a 256K context window, holds state well across 50+ tool calls, and costs roughly 30% of Sonnet. For repetitive agent tasks (refactor 30 files the same way, generate tests for a whole module), it’s the sweet spot. The best LLMs for coding ranking covers where K2.6 lands on real coding benchmarks.
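The three rules can be sketched as a single decision function. This is not how claude-code-router or Cline express their configs; it is a plain illustration of the precedence, and the `Task` fields and `kind` labels are hypothetical names I chose for the example. Model strings are display names, not gateway IDs.

```python
from dataclasses import dataclass

# Display names from this guide; real gateway model IDs may differ.
DEFAULT = "Claude Sonnet 4.6"
ESCALATION = "Claude Opus 4.7"
CHEAP = "Gemini 3.1 Flash Lite"
LONG_LOOP = "Kimi K2.6"


@dataclass
class Task:
    kind: str                      # hypothetical labels: "scan", "chat", "agent_loop", "code"
    expected_tool_calls: int = 0   # rough estimate of tool calls in the session
    sonnet_stumbled: bool = False  # set after Sonnet visibly failed on this task


def pick_model(task: Task) -> str:
    # Rule 2: file scanning and conversational steps go to a cheap model.
    if task.kind in ("scan", "chat"):
        return CHEAP
    # Rule 3: long, repetitive agent loops (50+ tool calls) go to Kimi K2.6.
    if task.kind == "agent_loop" or task.expected_tool_calls >= 50:
        return LONG_LOOP
    # Rule 1: default to Sonnet; escalate to Opus only after Sonnet stumbles.
    return ESCALATION if task.sonnet_stumbled else DEFAULT


print(pick_model(Task("scan")))
print(pick_model(Task("code")))
print(pick_model(Task("code", sonnet_stumbled=True)))
print(pick_model(Task("agent_loop", expected_tool_calls=60)))
```

The ordering is a design choice: cheap routing is checked first so that escalation never fires on work that does not need reasoning at all.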

The companion piece on reducing AI API costs breaks down a few more advanced tricks — prompt caching, batch APIs, context compression — that compound on top of routing.

When the Subscription Is Actually the Right Call

The BYO-API stack isn’t free of tradeoffs. Three scenarios where staying subscribed makes more sense:

- You burn 8+ hours of Opus-class work per day. Subscription math wins. A Max 20x user who actually saturates session limits is consuming the equivalent of $600–$1,500/month in API tokens for a flat $200, per Northflank’s analysis of session budgets. The catch is hitting peak hours and weekly caps.
- You need the polished IDE features. Cursor’s tab completion, Cmd-K rewrites, and inline diff UX are genuinely good and not trivially replaceable by Claude Code in a terminal. If your workflow lives in the IDE chrome rather than agentic loops, pay the Cursor fee and stop reading.
- You don’t want to think about token budgets. Subscriptions are emotional infrastructure as much as they are pricing. If watching a $0.04 charge per query distracts you, the flat fee is worth it.

For everyone else — which is most developers building features, not babysitting a token meter — the $30–$80 BYO-API stack is straightforwardly cheaper and removes the throttling problem entirely.
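To find where you sit relative to the flat fee, a rough break-even estimate helps. The sketch below assumes the heavy profile’s 5M input / 1.5M output ratio holds as volume scales, takes the $26.31 routed weekly cost from the budget section, and uses 52/12 weeks per month. All three are assumptions, so treat the output as a ballpark.

```python
WEEKS_PER_MONTH = 52 / 12   # assumption
FLAT_FEE = 200.0            # Max 20x, USD per month
WEEKLY_INPUT_M = 5.0        # heavy profile: million input tokens per week
WEEKLY_OUTPUT_M = 1.5       # heavy profile: million output tokens per week

# Weekly cost of the heavy profile under two policies.
ROUTED_WEEKLY_COST = 26.31                                               # routed split
OPUS_ONLY_WEEKLY_COST = WEEKLY_INPUT_M * 5.00 + WEEKLY_OUTPUT_M * 25.00  # everything on Opus 4.7


def break_even_input_m(weekly_cost, fee=FLAT_FEE):
    """Weekly input volume (millions of tokens) at which the API bill equals the flat fee.

    Assumes output tokens scale in the same 10:3 proportion as the heavy profile.
    """
    cost_per_input_m = weekly_cost / WEEKLY_INPUT_M  # blended USD per 1M input tokens
    return fee / WEEKS_PER_MONTH / cost_per_input_m


routed = break_even_input_m(ROUTED_WEEKLY_COST)
opus_only = break_even_input_m(OPUS_ONLY_WEEKLY_COST)

print(f"routed stack breaks even at    ~{routed:.1f}M input tokens/week")
print(f"Opus-only usage breaks even at ~{opus_only:.1f}M input tokens/week")
```

Under these assumptions the routed stack only reaches $200/month at about 8.8M input tokens per week, while Opus-only usage gets there at about 3.7M. That gap is why the first scenario above is specifically about Opus-class work.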

Setup in 10 Minutes

The fastest path to running this stack:

    # 1. Get an ofox API key (or any compatible gateway)
    export ANTHROPIC_BASE_URL="https://api.ofox.ai/anthropic"
    export ANTHROPIC_API_KEY="sk-ofox-..."
    export OPENAI_BASE_URL="https://api.ofox.ai/v1"
    export OPENAI_API_KEY="sk-ofox-..."

    # 2. Install Claude Code
    npm install -g @anthropic-ai/claude-code

    # 3. Inside Claude Code, set the default model
    # (type /model and pick claude-sonnet-4-6)

    # 4. Install Codex CLI as the OpenAI-side counterpart
    npm install -g @openai/codex

That’s it. Same CLI, same workflow, different bill. The AI tools API config guide covers Cline, Aider, and Continue.dev configs for the same gateway. The API aggregation overview explains the gateway model itself if you want the architectural picture before you commit.
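Before launching either CLI, it can save a confusing first session to confirm the four variables are actually visible in your shell. A minimal check, assuming only the variable names used in the setup steps; `missing_vars` is a helper I made up for this snippet and it does not contact the gateway or validate the key.

```python
import os

# Variable names taken from the setup steps above.
REQUIRED = (
    "ANTHROPIC_BASE_URL",
    "ANTHROPIC_API_KEY",
    "OPENAI_BASE_URL",
    "OPENAI_API_KEY",
)


def missing_vars(env):
    """Return the required variable names that are unset or empty in `env`."""
    return [name for name in REQUIRED if not env.get(name)]


if __name__ == "__main__":
    missing = missing_vars(os.environ)
    if missing:
        print("Not set in this shell: " + ", ".join(missing))
    else:
        print("All four gateway variables are set.")
```

Run it from the same terminal where you plan to start the CLI, since exported variables do not carry over to other shells.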

The Takeaway

The Cursor subscription was never the product. The IDE was. They sold you both for $20 and hoped you’d never notice. The same is true for Claude Pro and Copilot Pro+: the wrapper is the value, the model access is the rent. In 2026, the wrappers are increasingly open source, and the model access is increasingly a commodity that any gateway can resell at near-cost.

Pay for the tokens you burn, not the tokens you might burn. The 80% you wouldn’t have burned anyway is the margin they keep.
