Why Claude Max Users Are Leaving in May 2026: A Data-Driven Look at the Throttling Backlash

TL;DR. Between March 23 and May 6, 2026, Claude Max subscribers watched their 5-hour sessions evaporate in 19 minutes, learned about two cache bugs that silently inflated token bills 10–20×, and discovered that Claude Code v2.1.100 burns roughly 40% more tokens than v2.1.98 (the immediately preceding release) for the same workload. Anthropic acknowledged the problem on March 26, shipped a partial reversal on May 6, and left weekly caps untouched. This is a post-mortem of the backlash, not a verdict on the plan.

The story of Claude Max in spring 2026 is not that the limits got worse — it is that nobody could tell whether the limits got worse, the model got worse, the tokenizer got worse, or the client got worse, because all four happened in the same six-week window.

What actually changed between March 23 and May 6

The throttling backlash started on March 23, 2026, when Max 20x users began exhausting their session limits in 19 minutes instead of the documented five hours. The first wave looked like a single bug. It turned out to be four overlapping changes hitting at once:

- Intentional peak-hour throttling during 05:00–11:00 PT (13:00–19:00 GMT), confirmed by Anthropic on March 26.

- Two prompt-caching bugs silently inflating token bills 10–20×, tracked in claude-code issue #41930, with a March 31 source-code leak pointing at the attestation/anti-distillation pipeline as the proximate cause.

- Expiration of the 2× off-peak usage promotion on March 28, which had been quietly subsidising heavy nighttime use.

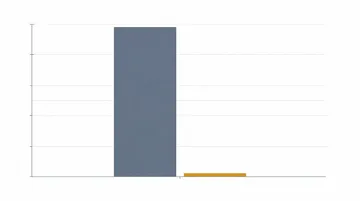

- Claude Code v2.1.100+ token inflation — source-code analysis comparing v2.1.98 vs. v2.1.100 measured 978 fewer bytes sent but 20,196 more tokens billed for the same workload, roughly a 40% client-side regression (GitHub issue #46917, writeup).

None of these were announced through a status page, a blog post, or an email. Anthropic’s March 31 acknowledgment read “limits are being consumed far faster than expected” and lived inside scattered Reddit comments and engineer X posts. The vacuum is what created the backlash, not the changes themselves.

Why this hit harder than the November 2025 limit cut

The March 23 incident hit harder because users could not tell whether they had hit a quota, a bug, or a downgrade. In November 2025, when Anthropic last tightened limits, the change was uniform and predictable — usage halved, the math worked, you upgraded or left. In March, a Max 20x user could send the same five prompts, hit 21% on prompt one, then jump to 100% on prompt two, with no way to audit which token counter was lying.

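The compounding effect was multiplicative, not additive. Taken at face value, a 10× cache-bug inflation on top of a 40% client-side inflation, against a 50% peak-hour session reduction, multiplies out to a session that ends up to 28 times faster than the documentation says (10 × 1.4 × 2). The reported 19-minute sessions, roughly 16 times faster than five hours, sit inside that envelope. That is not a quota problem; it is a trust problem.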
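As a rough check on that compounding, the sketch below multiplies the three factors quoted above and compares the product with the reported 19-minute session. It assumes the factors are independent and all apply at full strength at the same time, which the sources do not establish; the function and variable names are illustrative only.

```python
# Back-of-the-envelope compounding of the quoted factors.
# Assumption: the factors are independent multipliers that all apply at once.

def session_speedup(cache_inflation: float,
                    client_inflation: float,
                    session_reduction: float) -> float:
    """How many times faster a session budget is exhausted.

    cache_inflation   -- token multiplier from the cache bugs (e.g. 10.0)
    client_inflation  -- fractional extra tokens from the client (e.g. 0.40)
    session_reduction -- fraction of the session budget removed (e.g. 0.50)
    """
    # Halving the budget means the session ends twice as fast.
    budget_factor = 1.0 / (1.0 - session_reduction)
    return cache_inflation * (1.0 + client_inflation) * budget_factor


worst_case = session_speedup(10.0, 0.40, 0.50)  # 10 * 1.4 * 2
observed = (5 * 60) / 19                        # documented 300 min vs reported 19 min

print(f"all three factors at once: {worst_case:.0f}x faster")
print(f"reported 19-minute session: {observed:.1f}x faster")
```

The reported figure comes out below the all-at-once product, which is what one would expect if not every request hit every factor at full strength.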

The community response was correspondingly geometric. The r/ClaudeAI thread “20x max usage gone in 19 minutes” hit 330+ comments in 24 hours. The r/ClaudeCode thread “Claude Code Limits Were Silently Reduced and It’s MUCH Worse” hit 360+ comments over six days. r/Anthropic carried a parallel set of “is the model itself worse” threads that bled into the throttling discussion and made the two impossible to disentangle. GitHub claude-code issue #38335 collected the bug-report side; #41930 became the canonical cross-reference doc; #54714 documented the late-April Max 20x daily-limit tightening that arrived after the original incident was supposedly resolved.

What Anthropic was actually optimising for

Anthropic was solving a capacity problem, not a billing problem — and that one distinction reframes most of the backlash.

Inference capacity for frontier models has been the binding constraint on Anthropic’s growth since late 2025, when their own blog acknowledged compute pressure. Max 20x users are concentrated, technical, and run agents 24/7 — exactly the workload that turns “five hours of capacity per user per session” into a tragedy of the commons. Peak-hour throttling is the obvious lever from that angle: cap the 95th percentile so the median user keeps a working product.

The May 6 announcement reads like the company concluded the lever was the wrong shape. Two changes shipped simultaneously: the 5-hour limit doubled for Pro and Max, and peak-hour reductions for Pro and Max accounts were removed. The SpaceX compute deal announced the same day is the supply-side answer; it suggests the throttling was a stopgap until new capacity landed. The weekly cap, notably, did not move — which means the company is comfortable with heavy users finishing the week early, just not with them finishing the day in 19 minutes.

The unanswered question is the cache bugs. A capacity squeeze explains throttling. It does not explain a 10–20× billing inflation, and it does not explain a 40% client-side regression in v2.1.100. Those read more like a release-train problem: shipping fast, on top of a tokenizer/attestation rewrite, with insufficient telemetry on per-request token counts. Anthropic’s April 23 post-mortem on quality regressions hints in this direction without making the connection explicit.

Opus 4.6 versus 4.7 is the part nobody wants to call

This section deliberately does not pick a winner, because the community is not aligned on what the comparison even measures. The facts that are well-sourced:

- Opus 4.7 ships with a new tokenizer that produces up to ~35% more tokens for the same input text. That alone makes any “Max plan is unchanged” claim hollow if you are actually using 4.7 — your weekly cap shrinks proportionally even if the cap number is identical.

- Opus 4.6 was silently removed from the Claude Desktop Code tab model picker after the 4.7 release, filed as claude-code issue #49689 and discussed at length on Hacker News (47861009).

- A Reddit post titled “Opus 4.7 is not an upgrade but a serious regression” reached ~2,300 upvotes within 48 hours, with the modal complaint being not raw quality but predictability: 4.7 felt “more confidently wrong” and required more re-prompting.

- Opus 4.6 is scheduled for deprecation on June 15, 2026 (Anthropic deprecation page), which compresses the choice window.

Other engineers report the opposite: that 4.7 is meaningfully stronger on long-horizon agentic tasks and that the regression complaints are sampling bias from users whose prompts were tuned to 4.6’s quirks. GitHub Changelog’s coverage and Anthropic’s own 4.7 page both lead with agentic improvements, and those improvements hold up in independent runs.

What’s true is that the version question and the throttling question got entangled. If your usage jumped by a third in week 1 of 4.7 because of the tokenizer alone, you can’t separate “I burned through Max” from “Anthropic changed the deal.” That entanglement, more than either fact alone, is what made spring 2026 feel like a downgrade for Max users.
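The tokenizer effect on an unchanged cap is easy to work through. The sketch below assumes the cap is counted in tokens and that the inflation figure applies uniformly to a workload, neither of which the sources confirm; the function name is hypothetical.

```python
def effective_capacity(token_inflation: float) -> float:
    """Fraction of the old text volume that fits under an unchanged token cap."""
    return 1.0 / (1.0 + token_inflation)


for inflation in (0.10, 0.20, 0.35):
    kept = effective_capacity(inflation)
    print(f"+{inflation:.0%} tokens -> {kept:.0%} of previous work per week "
          f"({1 - kept:.0%} less)")
```

At the quoted upper bound of ~35% more tokens, the same cap covers about 74% of the text it used to, a cut of roughly 26% rather than 35%.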

What “leaving Max” actually looked like in practice

Most users did not cancel — they routed around the limits. Three patterns dominate in the GitHub and Reddit threads:

- Pin the client. The most-cited workaround through April was pinning Claude Code to v2.1.98 — the release immediately preceding the regression — via

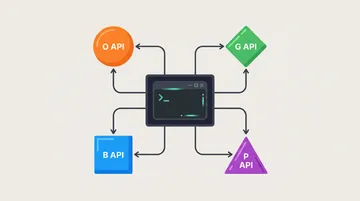

npx [email protected], accepting that some new features wouldn’t ship until Anthropic fixed the v2.1.100+ regression. - Hybrid routing. Run Claude Code with Claude on critical paths and a cheaper backend (DeepSeek V4 Pro, Kimi 2.6, Gemini 3.1 Pro) on the 60–80% of calls that don’t need flagship reasoning. The hybrid routing pattern writeup is the canonical reference.

- Switch the backend. The more aggressive path: replace Claude entirely on a per-session basis. The Claude Code backend switch tutorial and the DeepSeek V4 in Claude Code real-cost comparison document the numbers from users who ran 100M-token tests over four weeks.
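The hybrid pattern amounts to a small routing decision made per call. The following is a minimal sketch, not any tool's actual configuration: the `Task` fields, the backend labels, and the rule for what counts as a critical path are all hypothetical placeholders.

```python
from dataclasses import dataclass

FLAGSHIP = "claude"        # hypothetical label for the flagship backend
CHEAP = "cheaper-backend"  # hypothetical label for the low-cost backend


@dataclass
class Task:
    description: str
    critical: bool = False  # hypothetical flag, e.g. tricky debugging or design work


def choose_backend(task: Task) -> str:
    """Send only the calls that need flagship reasoning to the flagship model."""
    return FLAGSHIP if task.critical else CHEAP


def flagship_share(tasks: list[Task]) -> float:
    """Fraction of calls routed to the flagship backend."""
    if not tasks:
        return 0.0
    return sum(choose_backend(t) == FLAGSHIP for t in tasks) / len(tasks)


tasks = [
    Task("rename variables across a module"),
    Task("write docstrings"),
    Task("generate unit-test boilerplate"),
    Task("debug a race condition", critical=True),
    Task("format imports"),
]
for t in tasks:
    print(f"{t.description} -> {choose_backend(t)}")
print(f"flagship share: {flagship_share(tasks):.0%}")
```

In this toy workload one call in five goes to the flagship model. In practice the hard part is the classification rule itself, which this sketch reduces to a single hand-set flag.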

Cancellations happened too — the r/ClaudeCode “burned $6000” thread is the most-cited example of a heavy user walking away. But the modal response in the Max 20x cohort was hybridisation, not exit. That distinction matters for anyone trying to read the May 6 reversal: Anthropic appears to have moved before the cancellation curve actually bent.

So is Max worth it after May 6?

It depends on whether your usage is bursty or sustained. The May 6 changes — doubled 5-hour limits, removed peak-hour reductions — directly help users whose work clusters into intense multi-hour sessions during business hours. Those users probably get back to a working plan.

If your work is sustained — long agentic runs, batched evals, codebase-wide refactors — the binding constraint is the weekly cap, which did not change. You will still hit the wall, just on Friday instead of Tuesday. For that profile, the API path through a unified gateway plus selective Opus 4.6/4.7 calls is structurally cheaper than $200/month Max, and was already cheaper before the throttling started.

If you’re running Max because the integrated Claude Code experience is what you actually want, the May 6 reversal probably restores the value. If you were running Max because the per-token math worked out, run the math again — between the new tokenizer, the v2.1.100 regression, and a weekly cap that hasn’t moved, the math may have changed even where the price tag didn’t.
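Re-running the math can be as simple as the sketch below. Every per-token price and usage volume in it is a made-up placeholder, to be replaced with current published rates and your own usage logs; only the $200/month plan price and the ~35% tokenizer figure come from this article.

```python
PLAN_PRICE_USD = 200.0  # Max price quoted in the article


def monthly_api_cost(input_mtok: float, output_mtok: float,
                     usd_per_input_mtok: float, usd_per_output_mtok: float,
                     token_inflation: float = 0.0) -> float:
    """Estimated monthly API spend in USD.

    Volumes are in millions of tokens. token_inflation scales both volumes,
    e.g. 0.35 for a tokenizer that emits 35% more tokens for the same text.
    """
    scale = 1.0 + token_inflation
    return scale * (input_mtok * usd_per_input_mtok
                    + output_mtok * usd_per_output_mtok)


# Placeholder numbers, NOT real prices or measured usage.
baseline = monthly_api_cost(30.0, 5.0, usd_per_input_mtok=3.0, usd_per_output_mtok=15.0)
inflated = monthly_api_cost(30.0, 5.0, 3.0, 15.0, token_inflation=0.35)

for label, cost in (("baseline", baseline), ("with +35% tokens", inflated)):
    verdict = "API cheaper" if cost < PLAN_PRICE_USD else "plan cheaper"
    print(f"{label}: ${cost:.2f}/month -> {verdict}")
```

With these placeholder inputs the comparison flips once the token inflation is applied, which is the kind of shift an unchanged price tag can hide.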

Anthropic shipped four breaking changes in six weeks, communicated none of them through a status page, and reversed the most visible one only after a 2,300-upvote Reddit post and 700+ aggregated GitHub-issue comments — that is the part that matters, not whether $200/month is the right number.

Sources and further reading

- Anthropic — Higher usage limits and SpaceX compute deal (May 6, 2026)

- The Register — Anthropic tweaks Claude usage limits (March 26)

- The Register — Anthropic admits Claude Code quotas running out too fast (March 31)

- Anthropic Engineering — April 23 post-mortem on quality regressions

- GitHub anthropics/claude-code#38335 — original Max 20x bug report

- GitHub anthropics/claude-code#41930 — canonical cross-reference of all four root causes

- GitHub anthropics/claude-code#49689 — Opus 4.6 silently removed from Code tab picker

- GitHub anthropics/claude-code#54714 — Max 20x late-April daily-limit tightening

- Hacker News 47861009 — “Why was Opus 4.6 silently removed?”

- Hacker News 47936579 — “Is it just me or is Claude Code getting worse?”

- Awesome Agents — Claude Code Silently Burns 40% More Tokens Since v2.1.100

- VentureBeat — Anthropic reveals harness/operating-instruction changes likely caused degradation

- Anthropic — Model deprecations (Opus 4.6 sunset June 15, 2026)