Qwen 3.6 Plus vs DeepSeek V4 Pro for Coding: Open-Weight API Showdown (3 Tasks, Real Cost)

TL;DR — Qwen 3.6 Plus (78.8% SWE-bench Verified) and DeepSeek V4 Pro (80.6%) finished within two points of each other on the headline coding benchmark, but they fail differently on real work. V4 Pro is faster, cheaper during its launch promo, and stronger on bounded one-shot tasks. Qwen 3.6 Plus’s always-on reasoning catches edge cases V4 Pro skips and holds context coherence past 200K tokens where V4 Pro starts to drift. We ran both through three concrete coding tasks — algorithmic implementation, multi-file refactor, long-context bug triage — and the right model changes for each. The honest answer is to route by task type, not pick one and stop thinking.

The “open-weight model good enough for coding” question used to mean DeepSeek versus everything else. As of May 2026, it means DeepSeek versus Alibaba, with a 1.8-point gap on SWE-bench Verified that is well within run variance. Both ship 1M-token context, both expose OpenAI-compatible tool calling, both are cheaper than Claude Opus by an order of magnitude or more.

The interesting question isn’t who wins the benchmark. It’s where each one breaks.

Pricing and Architecture: What Actually Differs

Before the tasks, the numbers that decide whether either model belongs in your stack:

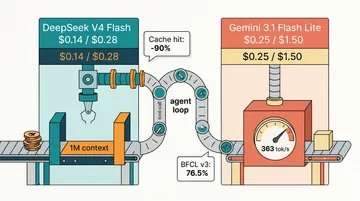

| Model | Input ($/M tokens) | Output ($/M tokens) | Context | Architecture / license | Released |
|---|---|---|---|---|---|
| Qwen 3.6 Plus (via ofox) | $0.50 | $3.00 | 1M | Linear-attention MoE, reasoning by default | 2026-04-02 |
| DeepSeek V4 Pro (direct, list) | $1.74 | $3.48 | 1M | 1.6T total / 49B active MoE, MIT license | 2026-04-24 |
| DeepSeek V4 Pro (launch promo, ends 2026-05-31) | $0.435 | $0.87 | 1M | Same model as above | n/a |

Sources: DeepSeek API pricing (verified 2026-05-15), ofox.ai model catalog, Hugging Face V4 Pro card.

Two pricing details change how to read this table. First, V4 Pro’s launch promo expires May 31, after which both input and output prices jump 4x; anyone budgeting against the promo numbers is in for a rude June 1. Second, Qwen 3.6 Plus undercuts V4 Pro’s post-promo list price on both sides: $0.50/M versus $1.74/M on input, and $3.00/M versus $3.48/M on output. For workloads that outlast the promo window, the cost advantage flips from V4 Pro to Qwen.
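To avoid budgeting against the wrong row, here is a minimal sketch that picks V4 Pro’s effective rate by date. It assumes the promo rates apply through all of 2026-05-31 and list rates from 2026-06-01, per the dates above; the exact cutoff time and time zone aren’t stated here, so check DeepSeek’s pricing page. The helper names and the 50M/10M token volume are illustrative only.

```python
from datetime import date

# DeepSeek V4 Pro rates in USD per million tokens, (input, output), from the table above.
V4_PRO_PROMO = (0.435, 0.87)
V4_PRO_LIST = (1.74, 3.48)
PROMO_LAST_DAY = date(2026, 5, 31)  # assumption: promo rates apply through this whole day


def v4_pro_rate(day: date) -> tuple[float, float]:
    """(input, output) $/M rate in effect on `day`."""
    return V4_PRO_PROMO if day <= PROMO_LAST_DAY else V4_PRO_LIST


def cost_on(day: date, input_tokens: int, output_tokens: int) -> float:
    """USD cost of a token volume billed at the V4 Pro rate in effect on `day`."""
    rate_in, rate_out = v4_pro_rate(day)
    return (input_tokens * rate_in + output_tokens * rate_out) / 1_000_000


# Illustrative volume: 50M input + 10M output tokens, billed one day apart.
before = cost_on(date(2026, 5, 31), 50_000_000, 10_000_000)
after = cost_on(date(2026, 6, 1), 50_000_000, 10_000_000)
print(f"${before:.2f} -> ${after:.2f} ({after / before:.1f}x)")
```

The same volume costs four times as much one day later, which is why the budget model should key off the date rather than a single hard-coded rate.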

The architectural difference matters more than the price gap. V4 Pro is a sparse MoE: each token is routed through 49B active parameters out of 1.6T total. Qwen 3.6 Plus pairs linear attention with always-on chain-of-thought, so every response carries a reasoning_content field whether you asked for it or not, and you pay the output-token rate for that reasoning. On a task that needs careful thought, this is an asset. On boilerplate, you’re paying for ceremony.
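If you want to log or hide the trace separately from the answer, a small client-side helper is enough. This is a sketch assuming the trace arrives as a non-standard reasoning_content attribute on the message, as described above. The OpenAI Python SDK generally keeps unrecognized response fields on the returned object, so getattr should find it, but confirm against a real response. split_reasoning is a hypothetical helper, and the response below is an offline stand-in, not real model output.

```python
from types import SimpleNamespace
from typing import Any


def split_reasoning(resp: Any) -> tuple[str, str]:
    """Return (reasoning, answer) from a chat-completions response.

    Assumes the provider puts the trace in a non-standard `reasoning_content`
    field on the message; models that omit it yield an empty reasoning string.
    """
    message = resp.choices[0].message
    reasoning = getattr(message, "reasoning_content", None) or ""
    answer = message.content or ""
    return reasoning, answer


# Offline stand-in shaped like an SDK response, so the helper runs without an API key.
fake = SimpleNamespace(choices=[SimpleNamespace(message=SimpleNamespace(
    content="def f(): ...",
    reasoning_content="Edge cases: empty input, single character, window size.",
))])
reasoning, answer = split_reasoning(fake)
print(len(reasoning), "chars of reasoning;", len(answer), "chars of answer")
```

Hiding the trace from users does not make it free: it is still billed as output tokens.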

For the broader DeepSeek family cost picture, see our DeepSeek API pricing breakdown. For the cost-quality tradeoff inside the V4 family alone, the V4 Pro vs Flash deep dive covers when Pro is overkill. And for Qwen 3.6 Plus access and benchmarks in isolation, the Qwen 3.6 Plus complete guide has the model-ID-and-curl walkthrough.

Task 1: Algorithmic Implementation with Edge Cases

First task is the kind developers reach for when sniff-testing a coding model: implement this function, with these constraints. We used a sliding-window string algorithm with three non-obvious edge cases stated in the prompt: empty input, single-character input, and an off-by-one boundary on the window size.
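The exact prompt isn’t reproduced here, so the following is a hypothetical stand-in spec with the same three edge cases: count the distinct characters in every length-k window of a string. It is a sketch of what handling all three on the first pass looks like, not the code either model produced.

```python
from collections import Counter


def distinct_per_window(s: str, k: int) -> list[int]:
    """Hypothetical spec: count distinct characters in every length-k window of s."""
    if k <= 0:
        raise ValueError("window size k must be positive")
    # Empty input, or any input shorter than the window (a single character
    # with k > 1, say), has no complete window at all.
    if len(s) < k:
        return []
    counts = Counter(s[:k])  # first window
    result = [len(counts)]
    # Off-by-one boundary: there are len(s) - k + 1 windows in total,
    # so after the first one we slide exactly len(s) - k more times.
    for right in range(k, len(s)):
        outgoing = s[right - k]
        counts[outgoing] -= 1
        if counts[outgoing] == 0:
            del counts[outgoing]  # keep len(counts) equal to the distinct count
        counts[s[right]] += 1
        result.append(len(counts))
    return result


print(distinct_per_window("abcab", 3))  # [3, 3, 3]
print(distinct_per_window("a", 1))      # [1]
print(distinct_per_window("", 2))       # []
```

Deleting keys whose count drops to zero is the detail that keeps len(counts) honest; forgetting it is a classic first-pass bug in this family of problems.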

V4 Pro produced clean, idiomatic code in about 8 seconds. It handled the empty-input case correctly. It missed the single-character edge case on the first pass — the function returned an incorrect result instead of the spec’d value. A clarifying follow-up prompt fixed it.

Qwen 3.6 Plus took longer — 14 seconds with reasoning trace — and produced code that handled all three edge cases on the first pass. The reasoning trace explicitly enumerated the boundary conditions before writing the implementation. The code itself was slightly less elegant than V4 Pro’s first attempt — one extra variable, one redundant length check that the optimizer would catch — but it was correct without iteration.

The pattern we kept seeing across algorithmic tasks: V4 Pro is faster and produces more idiomatic-looking code on the first pass, but it skips edge cases more often than Qwen 3.6 Plus does. The reasoning trace in Qwen’s output isn’t cosmetic — it forces the model to walk the boundary conditions before committing, and on this category of task that consistently catches things V4 Pro drops.

Cost on this task. At the table’s rates, a 2,500-input / 800-output token request runs about $0.0018 on V4 Pro (promo) or $0.0071 on V4 Pro (list), versus $0.0037 on Qwen 3.6 Plus for the answer alone. Qwen’s reasoning trace is billed as output, though: call it 1,500 reasoning tokens on top of the 800-token answer, which brings Qwen to roughly $0.0082 per request, above V4 Pro even at list price. On a single task that’s noise. The difference shows up at 10,000 calls per month, not at 10.
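These per-request figures follow directly from the pricing table. A minimal sketch, treating reasoning tokens as ordinary output tokens (which is how they are billed) and using the 1,500-token reasoning estimate above; the price keys and helper name are hypothetical labels, not API model IDs.

```python
# USD per million tokens (input, output), from the pricing table above.
PRICES = {
    "v4-pro-promo": (0.435, 0.87),
    "v4-pro-list": (1.74, 3.48),
    "qwen3.6-plus": (0.50, 3.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int,
                 reasoning_tokens: int = 0) -> float:
    """USD cost of one request; reasoning tokens are billed at the output rate."""
    rate_in, rate_out = PRICES[model]
    billed_output = output_tokens + reasoning_tokens
    return (input_tokens * rate_in + billed_output * rate_out) / 1_000_000


# Task 1 mix: 2,500 input / 800 output, plus ~1,500 reasoning tokens for Qwen.
task1 = {
    "v4-pro-promo": request_cost("v4-pro-promo", 2_500, 800),
    "v4-pro-list": request_cost("v4-pro-list", 2_500, 800),
    "qwen3.6-plus": request_cost("qwen3.6-plus", 2_500, 800, reasoning_tokens=1_500),
}
for model, cost in task1.items():
    # Per request, then scaled to 10,000 calls per month.
    print(f"{model:14s} ${cost:.4f}/call  ${cost * 10_000:,.2f}/month")
```

The same function reproduces the Task 2 and Task 3 figures later in the piece; for example, 200K input with a 4K answer plus 4K reasoning on Qwen comes to $0.124.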

The pull rule for this category. If you can run a single follow-up prompt to fix missed edge cases without disrupting your pipeline, V4 Pro is cheaper and faster. If your pipeline can’t tolerate a wrong-on-first-pass answer — for example, an unattended agent that commits the diff — pay Qwen’s reasoning premium.

Task 2: Multi-File Refactor with Cross-References

Second task separates models that know syntax from models that hold a codebase in working memory. We gave both models four files (a TypeScript service, two consumers, and a test file) and asked them to rename a method, change its signature to take an options object instead of positional args, update both call sites, and update the test mocks to match.

The prompt fit in ~12K tokens. Both models had plenty of headroom on context. Both produced output that, on a quick read, looked correct.

V4 Pro got the rename right in the service file, updated the first consumer correctly, and missed an option default in the second consumer — it passed {} where the original code had been passing a specific default value as a positional argument. The test file was updated correctly. The bug would manifest only when the second consumer was called in a specific code path, which the tests didn’t cover. Quiet semantic drift, not a syntax error.

Qwen 3.6 Plus caught the default value. The reasoning trace explicitly noted “consumer B passes defaultPolicy as the second positional argument; in the options-object form this should be { policy: defaultPolicy }.” It also flagged that the test file’s mock setup needed an extra assertion to verify the new signature, which V4 Pro hadn’t mentioned.
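Rendered in Python to match the other code in this article (the actual task was TypeScript), here is a minimal sketch of the invariant Qwen caught and the kind of extra mock assertion it suggested. CallOptions, consumer_b, service_call, and DEFAULT_POLICY are hypothetical stand-ins for the options object, consumer B, the refactored service method, and defaultPolicy.

```python
from dataclasses import dataclass
from typing import Callable, Optional
from unittest.mock import Mock

DEFAULT_POLICY = "retry-3x"  # hypothetical stand-in for defaultPolicy


@dataclass(frozen=True)
class CallOptions:
    """Options object that replaces the old positional arguments."""
    policy: Optional[str] = None
    timeout_s: float = 30.0


def consumer_b(service_call: Callable[..., None]) -> None:
    # Before the refactor: service_call("orders", DEFAULT_POLICY)
    # Correct migration: the positional default moves into the options object.
    service_call("orders", CallOptions(policy=DEFAULT_POLICY))


def consumer_b_drifted(service_call: Callable[..., None]) -> None:
    # Quiet semantic drift: runs fine, but the policy silently becomes None.
    service_call("orders", CallOptions())


def keeps_policy(consumer: Callable[[Callable[..., None]], None]) -> bool:
    """The extra test assertion: pin the exact options object, not just 'was called'."""
    mock = Mock()
    consumer(mock)
    try:
        mock.assert_called_once_with("orders", CallOptions(policy=DEFAULT_POLICY))
        return True
    except AssertionError:
        return False


print(keeps_policy(consumer_b))          # True
print(keeps_policy(consumer_b_drifted))  # False
```

A test that only checks the mock was called passes for both consumers; asserting on the full options object is what exposes the dropped default.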

The win for Qwen on this task isn’t about code quality — both models produced syntactically valid output. It’s about unstated invariants. Multi-file refactors usually have invariants the prompt doesn’t state explicitly: default values, ordering assumptions, error handling conventions that hold across the codebase. V4 Pro tends to capture the explicit instructions and let the implicit ones slip. Qwen’s always-on reasoning steps surface the invariants and address them.

This mirrors the failure mode the DeepSeek V4 Pro vs Flash comparison flagged for Flash on long-file refactors, except that here V4 Pro is the one missing the subtle invariant. The implication: Pro’s consistency edge over Flash narrows when the task fits in 12K tokens and the difficulty is reasoning depth rather than context length.

Cost on this task. The full prompt plus output ran around 12K input / 3K output tokens. V4 Pro: $0.031 (list) / $0.008 (promo). Qwen 3.6 Plus with reasoning: $0.018, which at its rates implies roughly 1K reasoning tokens on top of the 3K answer. Qwen wins on cost at list pricing, costs a bit more than twice as much as V4 Pro under the promo, and wins on first-pass correctness either way.

The pull rule. Multi-file refactors where the prompt can’t enumerate every invariant: Qwen 3.6 Plus. The reasoning trace is buying you something concrete on this category — it’s not theater.

Task 3: Long-Context Bug Triage (200K-Token Repo Snapshot)

Third task pushes context length. We loaded around 200K tokens of an open-source codebase — three top-level directories, roughly 80 files — into the prompt and asked: find the root cause of this stack trace. The trace pointed at a generic error path; the actual cause was three calls deep, in a file the trace didn’t name directly.

Both models have 1M-token context windows on paper. The question is how they perform at the upper end of the input length, not whether they accept it.

V4 Pro read the stack trace, identified the immediate calling function, examined that file, and concluded the bug was in the immediate caller. It was wrong — that caller was passing valid data; the bug was one level deeper, in a transformation step that was silently mutating an array. V4 Pro’s response was confident and specific, with a proposed fix that would have suppressed the symptom without addressing the cause. On a follow-up prompt (“walk three levels deeper”), it found the real bug.

Qwen 3.6 Plus spent its reasoning budget on tracing the data flow rather than the call stack. It read the trace, then started backward from where the bad value would have originated, working through each transformation. It correctly identified the silent mutation on the first pass. The reasoning trace was 4,000 tokens long. The answer was correct.
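The codebase’s actual code isn’t shown, so the following is a hypothetical Python illustration of the same bug class: a helper a few calls away from the failure site that sorts its argument in place. Every name here is invented for the example. The caller that breaks is innocent, which is why following the call stack upward leads to the wrong file while following the data flow backward finds the mutation.

```python
def unique_sorted(ids: list[int]) -> list[int]:
    """BUGGY helper: list.sort() reorders the caller's list in place."""
    ids.sort()
    return [x for i, x in enumerate(ids) if i == 0 or ids[i - 1] != x]


def unique_sorted_fixed(ids: list[int]) -> list[int]:
    """Root-cause fix: sort a copy so the caller's data is untouched."""
    ordered = sorted(ids)
    return [x for i, x in enumerate(ordered) if i == 0 or ordered[i - 1] != x]


def latest_event(events: list[int], dedupe) -> int:
    # The caller assumes `events` is still in arrival order after deduping.
    _ = dedupe(events)
    return events[-1]


arrivals = [30, 10, 20]  # arrival order; the latest event is 20
print(latest_event(list(arrivals), unique_sorted))        # prints 30: the list was reordered
print(latest_event(list(arrivals), unique_sorted_fixed))  # prints 20
```

Patching latest_event (say, by re-reading the events from somewhere else) would suppress the symptom the way V4 Pro’s first proposed fix did; the root-cause fix belongs in the helper that mutates its input.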

The interesting observation across long-context tasks: V4 Pro at 200K-token input length stays coherent on syntactic understanding but loses some accuracy on causal chains. Qwen 3.6 Plus is slower and more expensive at that input length (because reasoning tokens scale roughly with input complexity) but produces noticeably better cause-and-effect traces.

This is consistent with what independent reviewers have reported. Artificial Analysis’s Intelligence Index gives Qwen 3.6 Plus a composite score of 50, against a median of 35 for reasoning models in a comparable price tier, and the gap is largest on tasks that reward reasoning depth over raw throughput. The BenchLM V4 Pro report shows the inverse pattern: V4 Pro wins on throughput-sensitive benchmarks and on shorter-context coding work.

Cost on this task. 200K input + 4K output (V4 Pro) or 200K input + 4K answer + 4K reasoning (Qwen). V4 Pro at list: $0.362. V4 Pro at promo: $0.090. Qwen 3.6 Plus: $0.124. Qwen wins on cost at list, loses to the promo, and is the only one that got the answer right on the first pass.

The pull rule. Long-context bug triage and “explain what this codebase does” tasks: Qwen 3.6 Plus. V4 Pro is faster, but on causal reasoning over large inputs the speed isn’t useful if you have to re-prompt.

What the Aggregate Picture Looks Like

Across the three tasks, the win count is split:

- Task 1 (algorithmic edge cases): Tie on correctness after one round of follow-up; Qwen wins on first-pass correctness. V4 Pro wins on speed and on cost-at-promo.

- Task 2 (multi-file refactor): Qwen wins on correctness. V4 Pro wins on cost at promo pricing only.

- Task 3 (long-context triage): Qwen wins on correctness. V4 Pro wins on speed and on cost at promo pricing.

If you flatten this into a single ranking, you’d say Qwen 3.6 Plus is the more careful model and V4 Pro is the faster one. That’s roughly correct, but it loses the structure of the result. The actual takeaway is that the answer depends on what’s already in your prompt:

- If the prompt enumerates every edge case and invariant explicitly: V4 Pro produces the cleaner first-pass output and is faster.

- If the prompt is exploratory or relies on implicit knowledge: Qwen 3.6 Plus’s reasoning step catches the gaps V4 Pro misses.

Most production prompts are somewhere in the middle. The honest answer is that running both behind a router — bounded one-shots to V4 Pro, exploratory or multi-step to Qwen 3.6 Plus — captures the strengths of each without the failure modes. For how teams are wiring this kind of routing into Claude Code and similar setups, the hybrid routing pattern guide covers the concrete implementation.
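A minimal routing sketch along those lines. The task fields, the heuristics, and the 200K threshold are hypothetical assumptions drawn from the three tasks above (the long-context result comes from a single ~200K-token test, so tune it); the model strings are the ofox IDs listed in the access section. Real task classification would need your own signals.

```python
from dataclasses import dataclass

QWEN = "bailian/qwen3.6-plus"
V4_PRO = "deepseek/deepseek-v4-pro"


@dataclass
class CodingTask:
    # Hypothetical task descriptors; in practice you'd derive these yourself.
    prompt_tokens: int
    invariants_spelled_out: bool = True  # does the prompt enumerate every edge case?
    unattended: bool = False             # e.g. an agent that commits without review


def pick_model(task: CodingTask, long_context_tokens: int = 200_000) -> str:
    """Route bounded, fully specified one-shots to V4 Pro; everything riskier to Qwen."""
    if task.unattended:  # can't tolerate a wrong-on-first-pass answer
        return QWEN
    if task.prompt_tokens >= long_context_tokens:  # long-context triage
        return QWEN
    if not task.invariants_spelled_out:  # exploratory or implicit invariants
        return QWEN
    return V4_PRO  # bounded one-shot with everything stated


print(pick_model(CodingTask(prompt_tokens=2_500)))
print(pick_model(CodingTask(prompt_tokens=12_000, invariants_spelled_out=False)))
```

Because both IDs sit behind the same OpenAI-compatible endpoint, the returned string can be passed straight to the model argument of the client call.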

For the wider 2026 picture on coding model selection, our best LLM for coding ranked by real use places both models in the broader field, and the LLM API selection decision matrix maps the model-by-task-type axis for the full catalog.

The Promo-Window Decision

A meaningful subset of this comparison evaporates on June 1, 2026, when DeepSeek’s launch promo ends and V4 Pro’s price reverts to $1.74 / $3.48 per million. Three concrete decisions worth flagging:

- If you’re heavy on Task 1-style workloads (bounded algorithmic code) and you’re already using V4 Pro at promo: budget for the 4x cost increase on June 1, or build a router that downshifts bounded tasks to V4 Flash. The V4 Pro vs Flash piece covers where that line should sit.

- If you’re heavy on Task 2-style workloads (multi-file refactors with implicit invariants): Qwen 3.6 Plus is already the right call on correctness. After June 1 it becomes the right call on cost as well.

- If you’re heavy on Task 3-style workloads (long-context exploration): Qwen 3.6 Plus is the right call regardless of promo timing. The cost advantage of V4 Pro under the promo doesn’t survive the re-prompts you’ll need.
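The re-prompt claim is easy to check with the Task 3 numbers. A sketch, assuming each V4 Pro follow-up re-sends the full 200K-token context at promo rates and ignoring any context-caching discount DeepSeek may apply; a real follow-up also carries the previous answer, so this understates V4 Pro’s cost slightly. The helper name is hypothetical.

```python
import math

# USD per million tokens (input, output), from the pricing table.
V4_PRO_PROMO = (0.435, 0.87)
QWEN = (0.50, 3.00)


def cost(rate: tuple[float, float], input_tokens: int, output_tokens: int) -> float:
    return (input_tokens * rate[0] + output_tokens * rate[1]) / 1_000_000


# Task 3: 200K input; V4 Pro answers in 4K, Qwen spends 4K answer + 4K reasoning.
v4_attempt = cost(V4_PRO_PROMO, 200_000, 4_000)
qwen_once = cost(QWEN, 200_000, 8_000)

# Smallest number of V4 Pro attempts whose total exceeds a single Qwen call.
attempts_to_lose = math.floor(qwen_once / v4_attempt) + 1
print(f"V4 Pro promo: ${v4_attempt:.3f}/attempt, Qwen: ${qwen_once:.3f}; "
      f"V4 Pro costs more from attempt {attempts_to_lose}")
```

Under these assumptions a single re-prompt is enough to erase the promo advantage on a 200K-token triage.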

The broader pattern: V4 Pro’s promo pricing is a sales tactic, not a steady-state economic claim. If you’re modeling your token budget for the rest of 2026, use the list price, not the discount.

Access Both Through One Key

Both models are exposed through ofox.ai with OpenAI-compatible endpoints. The model IDs:

- Qwen 3.6 Plus: bailian/qwen3.6-plus

- DeepSeek V4 Pro: deepseek/deepseek-v4-pro

```python
import os

from openai import OpenAI

# Read the key from the environment; a literal "$OFOX_API_KEY" string is not expanded by Python.
client = OpenAI(base_url="https://api.ofox.ai/v1", api_key=os.environ["OFOX_API_KEY"])

resp = client.chat.completions.create(
    model="bailian/qwen3.6-plus",  # or "deepseek/deepseek-v4-pro"
    messages=[{"role": "user", "content": "..."}],
)
print(resp.choices[0].message.content)
```

The routing logic (which model gets which task) lives in your code, not in your billing setup. One key, two models; swap by changing the model string. For the full gateway setup story, see AI API aggregation: access every model through one endpoint. For cost-reduction tactics that compound on top of the model pick, how to reduce AI API costs covers caching, batching, and routing patterns that apply to both.

The takeaway worth sharing: these two models are close enough on benchmarks that the right pick is “both, behind a router” — anyone who tells you they’ve definitively picked a winner is overfitting to one task type. Build the router once, and the open-weight coding decision becomes load-balancing rather than vendor lock-in.

References

- DeepSeek V4 Pro pricing and release notes: api-docs.deepseek.com/quick_start/pricing (verified 2026-05-15)

- DeepSeek V4 Pro model card: huggingface.co/deepseek-ai/DeepSeek-V4-Pro

- DeepSeek V4 Pro review and SWE-bench data: Codersera V4 Pro review

- Qwen 3.6 Plus model details: Qwen blog 3.6 release

- Independent benchmarks: Artificial Analysis intelligence index, BenchLM V4 Pro page

- Cross-vendor coding comparison: LLM Coding Benchmark May 2026 (AkitaOnRails)

- ofox model catalog: ofox.ai/llms.txt