DeepSeek V4 Pro vs Flash: 3 Tasks, 100M Tokens, Real Cost-Quality Tradeoff

TL;DR: V4 Pro and V4 Flash sit about 12x apart on list price. On bounded, single-file coding work, the quality gap is small enough that most teams can’t tell the models apart. On multi-file reasoning and long agent loops, the gap is large enough that Flash stops being a credible substitute. The interesting question isn’t which one is better; it’s which 30% of your workload still needs Pro. If you can route by task type and only that 30% of tokens stays on Pro, you cut an all-Pro DeepSeek bill by roughly 64% at list prices without noticing the quality difference. If you can’t, you’ll feel the gap inside a week.

The Headline Numbers (And Why They Lie A Little)

DeepSeek shipped V4 Pro and V4 Flash on April 24, 2026, both as MoE models with 1M-token context windows under the MIT license. V4 Pro carries 1.6T total parameters with 49B active per token; V4 Flash runs 284B total / 13B active. The architectural gap is significant (Pro has roughly 3.8x the active capacity per forward pass), but the price gap is wider.

Here’s the official pricing as of May 2026:

| Model | Input (cache-miss) | Input (cache-hit) | Output |
|---|---|---|---|
| V4 Pro (regular) | $1.74/M | $0.0145/M | $3.48/M |
| V4 Pro (launch promo, ends 2026-05-31) | $0.435/M | $0.003625/M | $0.87/M |
| V4 Flash | $0.14/M | $0.0028/M | $0.28/M |

Source: DeepSeek API pricing (verified 2026-05-09).

At regular pricing, Flash is 12.4x cheaper on input and 12.4x cheaper on output. With the Pro promo running, the gap shrinks to about 3.1x. After May 31 it snaps back to 12x.
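The ratios above can be re-derived directly from the pricing table. The snippet below is a minimal sketch; the dictionary keys (`pro_regular`, `pro_promo`, `flash`) are labels invented for this example, not API identifiers.

```python
# Per-million-token list prices in USD, copied from the pricing table above.
PRICES = {
    "pro_regular": {"input": 1.74, "output": 3.48},
    "pro_promo": {"input": 0.435, "output": 0.87},
    "flash": {"input": 0.14, "output": 0.28},
}


def price_ratio(expensive: str, cheap: str, kind: str) -> float:
    """Return how many times more `expensive` costs than `cheap` for one token kind."""
    return PRICES[expensive][kind] / PRICES[cheap][kind]


for tier in ("pro_regular", "pro_promo"):
    for kind in ("input", "output"):
        print(f"{tier} vs flash, {kind}: {price_ratio(tier, 'flash', kind):.1f}x")
```

Because Pro's output price is exactly twice its input price and the same holds for Flash, the input and output ratios come out identical within each pricing tier.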

The cache-hit row is where the comparison gets interesting. Flash’s cache-hit input pricing of $0.0028/M is a 98% discount versus its own cache-miss rate. If you can sustain a high cache hit rate, Flash’s effective input cost approaches free. But “if you can sustain” is doing a lot of work: Claude Code-style agent sessions, where every tool call invalidates part of the context, typically land in the 60-75% range, not the 95% figures achievable on stable RAG workloads.

Task 1: Single-File Code Generation (Flash Wins Clean)

The first task category is bounded code generation — write a function, scaffold an endpoint, generate a test file, transform a config. These are the workloads V4 Flash was built for.

On these prompts, Flash produces output that’s hard to distinguish from Pro on a blind read. The pattern across community write-ups (Codersera’s V4 Flash deep dive, Geeky Gadgets’ coding tests, and the Hugging Face model cards) is consistent: Pro leads on aggregated coding benchmarks, but the lead is modest, and on individual one-shot tasks the two models often look interchangeable.

That’s the editorial generalization, not a benchmark claim. The published model cards report HumanEval-style pass rates; the community write-ups cover one-shot game generation, simulation prompts, and structured reasoning tasks. None of them benchmark CRUD scaffolding or framework form generation specifically — those are categories where the qualitative pattern from public testing extends, but if you need a hard number for your own workload, run your own eval.

The cost asymmetry on this category is what makes the choice obvious. A typical scaffolding prompt (“generate a CRUD service for these five database models, with tests”) runs about 8K input tokens and 4K output tokens. At cache-miss list prices, Flash costs roughly $0.0022 per generation, Pro at promo pricing about $0.0070, and Pro at regular pricing about $0.0278; any cache hits on the system prompt push all three lower. Over a thousand scaffolding runs in a sprint, that’s about $2.24 vs $6.96 vs $27.84.
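To sanity-check per-generation figures like these against your own prompt sizes, a small calculator is enough. This is a sketch that bills every input token at the cache-miss rate from the pricing table, so it gives an upper bound; exact token counts and any cached prefix will move the result by a few percent. The tier labels are made up for the example.

```python
# Cache-miss list prices in USD per million tokens, from the pricing table.
PRICES = {
    "flash": {"input": 0.14, "output": 0.28},
    "pro_promo": {"input": 0.435, "output": 0.87},
    "pro_regular": {"input": 1.74, "output": 3.48},
}


def generation_cost(tier: str, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one request, billing all input tokens at the cache-miss rate."""
    price = PRICES[tier]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# The 8K-in / 4K-out scaffolding prompt described above, over 1,000 runs.
for tier in PRICES:
    per_run = generation_cost(tier, 8_000, 4_000)
    print(f"{tier}: ${per_run:.5f} per run, ${per_run * 1000:.2f} per 1,000 runs")
```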

If you’re routing scaffolding to Pro, you’re paying the Pro tax for nothing.

Task 2: Long-File Refactoring (Pro Wins, But Read The Fine Print)

The second category — refactoring across a single 500-1,500 line file — is where the spread starts opening. Both models can handle the context window. The difference is consistency.

Field reports across multiple developer test threads converge on the same pattern: when refactoring a file that requires holding multiple naming conventions, error-handling patterns, and type signatures consistent across the rewrite, Pro maintains coherence end-to-end. Flash drifts. By line 800 of a refactored file, Flash will have inconsistently named one variable, switched the error-handling style mid-class, or introduced a subtly different return type.

A failure mode worth flagging on this category: when Flash refactors a long file with implicit invariants — shared state, ordering assumptions, error-propagation conventions — it tends to catch the obvious conversion sites and miss the subtle ones. The result isn’t a syntactic error; it’s a semantically valid rewrite that quietly drops an invariant the original code was relying on. Pro is more conservative on this, in part because it has more active capacity to keep the unstated constraints in working memory across the rewrite.

The cost story flips here. If Flash drifts and you spend 30 minutes hand-fixing inconsistencies, you’ve burned the savings. The 12x price gap on a 30K-token refactor is roughly $0.42 vs $0.035, a difference of under forty cents. Half an hour of cleanup is worth far more than that.
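The break-even point can be made explicit. The sketch below takes the two per-refactor costs quoted above and asks how many minutes of cleanup wipe out the token savings. The hourly rate is a purely hypothetical input; substitute your own loaded engineering cost.

```python
def break_even_minutes(pro_cost: float, flash_cost: float, hourly_rate: float) -> float:
    """Minutes of manual cleanup that cost as much as the tokens saved by using Flash."""
    savings = pro_cost - flash_cost        # USD saved per refactor
    cost_per_minute = hourly_rate / 60.0   # USD per minute of engineer time
    return savings / cost_per_minute


# $0.42 vs $0.035 are the refactor costs quoted above; $60/hour is an assumed rate.
minutes = break_even_minutes(pro_cost=0.42, flash_cost=0.035, hourly_rate=60.0)
print(f"Flash stops paying for itself after {minutes * 60:.0f} seconds of cleanup")
```

At that assumed rate the savings are gone in well under a minute, which is why any real drift in a long refactor tips the decision toward Pro.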

For long-file refactors with consistency requirements, Pro is the right call even at full price. The math doesn’t favor Flash unless you’re pattern-matching across genuinely independent transformations.

Task 3: Multi-File Agent Loops (Pro Wins, Flash Doesn’t Even Compete)

The third category is where the gap stops being a question of quality margins and becomes a question of capability.

Agent loops — read file, run test, check output, edit code, re-run — depend on the model correctly choosing the next action based on the previous tool result. Pro handles 10-20 tool call sequences with near-zero misrouting. Flash starts compounding errors after about 6-8 tool calls.

The failure mode is specific: Flash will misinterpret a test failure message, decide the bug is in file A when it’s in file B, edit file A to “fix” it, run the tests again, see a different failure now caused by its bad edit, and try to fix that. By tool call 12, you’re in a loop where the model is repairing damage it caused two tool calls ago. Pro doesn’t do this — when a tool result doesn’t match its hypothesis, it backs up and re-reads the original failure rather than persisting a wrong theory.

This isn’t a margin-of-error gap. Flash is genuinely the wrong tool for this workload. If you’re running agentic coding setups — Claude Code, Aider, Cursor’s agent mode, OpenCode CLI — backing them with Flash is going to feel cheap until you hit the first hard bug, then you’ll watch the agent burn $0.50 of tokens digging itself into a hole. (See our hybrid routing pattern guide for how teams are wiring this distinction into their setup.)

For agent workloads, Pro is non-negotiable. Or if Pro is overkill on cost, route to Claude Sonnet 4.6 at similar pricing — covered in the DeepSeek V4 in Claude Code cost test.

The Cache Hit Rate Trap

Almost every “DeepSeek is 90% cheaper than X” comparison floating around assumes cache hit rates that don’t survive contact with real workloads. Here’s the math worth understanding before you budget against marketing numbers.

Cache hit rates hold up well in:

- RAG retrievals against a stable knowledge base

- Long-running chatbot sessions with fixed system prompts

- Document analysis pipelines where the system prompt is the only persistent context

Cache hit rates fall apart in:

- Coding agent loops (every tool result busts cache)

- Multi-turn conversations where the user pivots topics

- Anything that uses tools that emit large variable outputs

For agent-style coding work on Flash, your effective cache hit rate is typically 60-75%. Plugging that into the pricing:

| Cache hit rate | Effective Flash input cost (per M tokens) |
|---|---|
| 95% | $0.0097 |
| 75% | $0.0371 |
| 60% | $0.0577 |
| 0% | $0.14 |
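A minimal sketch of the blend behind a table like this, assuming the effective rate is a plain weighted average of Flash's published cache-hit and cache-miss input prices. The function name is illustrative.

```python
FLASH_MISS = 0.14    # USD per million input tokens, cache-miss
FLASH_HIT = 0.0028   # USD per million input tokens, cache-hit


def effective_input_rate(hit_rate: float,
                         miss_price: float = FLASH_MISS,
                         hit_price: float = FLASH_HIT) -> float:
    """Weighted-average input price per million tokens at a given cache hit rate."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * hit_price + (1.0 - hit_rate) * miss_price


for rate in (0.95, 0.75, 0.60, 0.0):
    print(f"{rate:.0%} cache hits: ${effective_input_rate(rate):.4f} per M input tokens")
```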

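A 100M-token monthly Flash workload that costs $10.52 at the marketing-friendly 80% cache assumption (a figure consistent with a 70M-input / 30M-output split) costs roughly $11.00 to $12.44 at the 60-75% hit rates typical of agent work, and about $18.20 with no cache hits at all. Still cheap. Still 50x cheaper than Opus 4.6. But not the headline number.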
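To see how sensitive the monthly bill is to the cache assumption, the sketch below prices a 100M-token Flash workload at several hit rates. The 70M-input / 30M-output split is an assumption, chosen because it reproduces the $10.52 figure at 80% cache hits; plug in your own split.

```python
FLASH_MISS, FLASH_HIT, FLASH_OUTPUT = 0.14, 0.0028, 0.28  # USD per million tokens


def monthly_flash_cost(input_millions: float, output_millions: float, hit_rate: float) -> float:
    """Monthly USD cost on Flash for token volumes given in millions."""
    input_rate = hit_rate * FLASH_HIT + (1.0 - hit_rate) * FLASH_MISS
    return input_millions * input_rate + output_millions * FLASH_OUTPUT


# Assumed split: 70M input tokens, 30M output tokens per month.
for rate in (0.80, 0.75, 0.60, 0.0):
    print(f"{rate:.0%} cache hits: ${monthly_flash_cost(70, 30, rate):.2f}")
```

Output tokens are never cached, so with this split $8.40 of the bill is fixed; the cache hit rate only moves the input portion.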

Pull the actual cache hit rate from your DeepSeek dashboard before you write the savings memo.

The Decision Rule

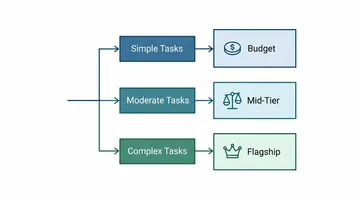

Strip the spreadsheet down to one rule: if your task fits in one file and one round of model output, use Flash. If it crosses files or requires more than two tool calls, use Pro.

The 12x price gap and the Pro promo discount and the cache-hit math are all real, but they’re second-order. The first-order question is whether the task is bounded. Flash is exceptional at bounded work and weak at unbounded work, and Pro is the inverse — Pro is wasteful on bounded work and necessary for unbounded work.

Most production systems benefit from a router that classifies incoming requests by bounded-ness and dispatches accordingly. Some teams build this with LiteLLM or a custom proxy. Others use an aggregation gateway like ofox that exposes both models behind one endpoint and lets you swap by changing the model name. Either way, the routing logic is more valuable than the model choice itself — once you have routing, picking the right model becomes a config change rather than a code change.
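As a rough illustration of what such a router looks like, here is a sketch that encodes the one-line rule from this section. The `Task` fields and the model identifiers are hypothetical; use whatever signals your system can actually observe and whatever model names your provider or gateway exposes.

```python
from dataclasses import dataclass

# Hypothetical model identifiers, kept in one mapping so that swapping
# a model is a config change rather than a code change.
MODEL_FOR_ROUTE = {
    "bounded": "deepseek-v4-flash",
    "unbounded": "deepseek-v4-pro",
}


@dataclass(frozen=True)
class Task:
    files_touched: int        # how many files the task needs to read or edit
    expected_tool_calls: int  # estimated tool calls before the task is done


def classify(task: Task) -> str:
    """Bounded means a single file and no more than two tool calls."""
    if task.files_touched <= 1 and task.expected_tool_calls <= 2:
        return "bounded"
    return "unbounded"


def pick_model(task: Task, routes: dict = MODEL_FOR_ROUTE) -> str:
    """Return the model name to put in the request for this task."""
    return routes[classify(task)]


print(pick_model(Task(files_touched=1, expected_tool_calls=1)))   # scaffolding job
print(pick_model(Task(files_touched=4, expected_tool_calls=12)))  # agent loop
```

In practice the hard part is estimating `expected_tool_calls` up front. A common fallback is to start a session on the cheaper route and re-classify once the observed tool-call count crosses the threshold.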

For pricing context across the broader DeepSeek family, see our DeepSeek API pricing breakdown. For how Flash specifically compares to Anthropic and OpenAI alternatives in a Claude Code workflow, see the V4 in Claude Code cost test. And for the broader 2026 picture on model selection, the Kimi 2.6 vs Claude Opus 4.6 comparison covers a similar question on the upper end of the cost curve.

What This Means for the Pro Promo Decision

The 75% Pro discount runs through May 31, 2026. After that, V4 Pro reverts to $1.74/M input and $3.48/M output. With about three weeks left, three decisions are worth flagging:

- If you’re running mostly bounded tasks: stay on Flash, ignore the promo. Pro at promo pricing is still 3x more expensive than Flash for work Flash handles fine.

- If you’re running agent workloads and currently using Pro at promo pricing: budget for the 4x cost increase on June 1. Either accept it, or build a router that drops bounded tasks back to Flash.

- If you’re considering Pro for the first time: the promo is a real discount but it’s a sales tactic — it ends. Don’t model your steady-state economics on promo pricing.

The honest reading is that Flash is the actually-cheap option that stays cheap, and Pro is the capable option that costs what it costs. If you keep the architectural distinction clean, the 12x gap is a feature, not a bug — it forces you to think about which work actually needs the bigger model. Build the router once, and the price difference between Pro and Flash becomes load-balancing rather than budget anxiety.
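How much a router saves depends almost entirely on the share of tokens that still has to go to Pro. The sketch below assumes that share is measured in tokens, that both models see the same input/output mix, and that regular list prices apply; under those assumptions the Flash-to-Pro price ratio is the same for input and output.

```python
PRO_INPUT = 1.74    # USD per million input tokens, regular list price
FLASH_INPUT = 0.14  # USD per million input tokens, cache-miss


def savings_vs_all_pro(pro_share: float) -> float:
    """Fraction of an all-Pro bill saved when only `pro_share` of tokens stay on Pro."""
    if not 0.0 <= pro_share <= 1.0:
        raise ValueError("pro_share must be between 0 and 1")
    flash_relative = FLASH_INPUT / PRO_INPUT  # Flash cost as a fraction of Pro cost
    blended = pro_share + (1.0 - pro_share) * flash_relative
    return 1.0 - blended


for share in (0.0, 0.1, 0.3, 0.5):
    print(f"{share:.0%} of tokens on Pro: {savings_vs_all_pro(share):.0%} saved")
```

Agent loops tend to be token-heavy, so a workload where 30% of tasks need Pro may send well over 30% of tokens there. Measure the share in tokens, not task counts, before budgeting.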

References

- DeepSeek V4 official pricing: api-docs.deepseek.com/quick_start/pricing (accessed 2026-05-09)

- V4 Preview release notes: api-docs.deepseek.com/news/news260424

- V4 Flash model card: huggingface.co/deepseek-ai/DeepSeek-V4-Flash

- V4 Pro model card: huggingface.co/deepseek-ai/DeepSeek-V4-Pro

- Field test report: Runpod’s V4 in the wild

- Coding test write-up: Geeky Gadgets V4 Flash vs Pro

- Cost analysis methodology: Codersera V4 Flash deep dive