Kimi K2.6 vs Claude Opus 4.6: 30-Day Coding Benchmark (10x Cheaper, 80% as Good?)

TL;DR: After 30 days of testing across REST API builds, 500-line refactors, and multi-hour debug sessions, Kimi K2.6 handled roughly 80% of my day-to-day coding work about as well as Claude Opus 4.6, at roughly one-seventh the price. The benchmarks are close enough that the cost difference becomes the deciding factor for most workflows. But K2.6 still has specific failure modes you need to know about before switching.

The price difference, in actual dollars

Claude Opus 4.6 is $5/MTok input, $25/MTok output. Kimi K2.6 is roughly $0.89/MTok input, $3.70/MTok output. With cache hits — the norm when you iterate on the same codebase — input drops to $0.15/MTok.

A typical coding session: 100K tokens in, 10K tokens out.

| Model | Input (100K tokens) | Output (10K tokens) | Total per session |
|---|---|---|---|
| Claude Opus 4.6 | $0.50 | $0.25 | $0.75 |
| Kimi K2.6 (cache miss) | $0.089 | $0.037 | $0.126 |
| Kimi K2.6 (cache hit) | $0.015 | $0.037 | $0.052 |

At cache-miss pricing, K2.6 is roughly 6x cheaper per session. With cache hits, it’s closer to 14x. The “$0.02 per call” figure circulating on Reddit isn’t hyperbole either, but it assumes shorter outputs: with 100K tokens of cached input, a call that returns about 2K tokens costs roughly $0.022, while in the 10K-output session above the output alone costs $0.037.
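The table’s arithmetic is simple enough to script, which helps when your own sessions don’t look like 100K in and 10K out. A minimal Python sketch using the per-MTok prices quoted above; `Pricing` and `session_cost` are illustrative names, not part of any SDK, and the sketch ignores any billing details beyond flat per-token rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per million tokens (MTok), as quoted in this article."""
    input_per_mtok: float
    output_per_mtok: float


OPUS_46 = Pricing(input_per_mtok=5.00, output_per_mtok=25.00)
KIMI_K26_MISS = Pricing(input_per_mtok=0.89, output_per_mtok=3.70)
KIMI_K26_HIT = Pricing(input_per_mtok=0.15, output_per_mtok=3.70)  # cached-input rate


def session_cost(p: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one session with the given token counts."""
    return (input_tokens * p.input_per_mtok + output_tokens * p.output_per_mtok) / 1_000_000


# Reproduce the table: 100K input tokens, 10K output tokens.
opus = session_cost(OPUS_46, 100_000, 10_000)
for name, pricing in [("Kimi K2.6 (cache miss)", KIMI_K26_MISS), ("Kimi K2.6 (cache hit)", KIMI_K26_HIT)]:
    kimi = session_cost(pricing, 100_000, 10_000)
    print(f"{name}: ${kimi:.3f} vs Opus ${opus:.2f} -> {opus / kimi:.1f}x cheaper")
```

Swap in your own token counts to see where your workload lands between the cache-miss and cache-hit rows.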

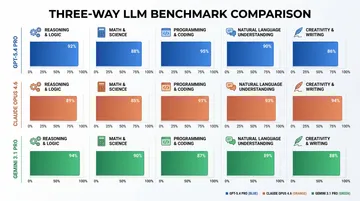

Benchmarks: where each model wins

MoonshotAI published full benchmark data when K2.6 launched on April 21, 2026. The numbers are close enough across the board that the gap has stopped being a technical argument and started being a cost argument.

| Benchmark | Kimi K2.6 | Claude Opus 4.6 | Winner |
|---|---|---|---|
| SWE-Bench Pro | 58.6 | 53.4 | K2.6 +5.2 |
| Terminal-Bench 2.0 | 66.7 | 65.4 | K2.6 +1.3 |
| DeepSearchQA (F1) | 92.5 | 91.3 | K2.6 +1.2 |
| HLE-Full w/ tools | 54.0 | 53.0 | K2.6 +1.0 |
| LiveCodeBench v6 | 89.6 | 88.8 | K2.6 +0.8 |
| AIME 2026 | 96.4 | 96.7 | Opus +0.3 |

SWE-Bench Pro — real-world codebase repair tasks — is the most meaningful coding benchmark here, and K2.6 leads by 5.2 points. That’s not noise. It reflects K2.6’s strength at structured, well-defined coding tasks with clear acceptance criteria.

But benchmarks only tell half the story. The AIME math result hints at the real tradeoff: Opus 4.6 still has an edge on pure reasoning. Benchmarks measure what a model can do in controlled settings. Real development work is messier.

Three tasks, one month: what actually happened

I tested both models on three coding tasks over 30 days. Each task was run fresh with both models — same prompt, same starting state, same acceptance criteria.

Task 1: REST API endpoint (Go, from scratch)

Build a rate-limited CRUD API for user preferences with PostgreSQL, middleware chaining, and input validation. Roughly 200 lines of new code spread across handlers, middleware, models, and tests.

Kimi K2.6 produced working code on the first attempt. The handler structure was clean, the middleware chain was correct, and the SQL queries used parameterized inputs. One issue: the rate limiter used an in-memory map instead of Redis, which works for a demo but not production. When asked to fix it, K2.6 added Redis correctly on the second pass.
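Why an in-memory map is demo-only: its counters live in one process. A minimal Python sketch (the original task was Go; Python matches the article’s other code), assuming a simple fixed-window limit; `InMemoryRateLimiter` and its parameters are hypothetical. Two replicas behind a load balancer each keep their own dict, so together they admit twice the intended limit.

```python
from __future__ import annotations

import time
from collections import defaultdict


class InMemoryRateLimiter:
    """Fixed-window limiter whose counters live in this process's memory.

    Each replica of the service gets its own dict, so N replicas together
    allow up to N * limit requests per window, and a restart resets every
    count. Old windows are never evicted either.
    """

    def __init__(self, limit: int, window_seconds: float) -> None:
        self.limit = limit
        self.window = window_seconds
        self.counts: defaultdict[tuple[str, int], int] = defaultdict(int)

    def allow(self, client_id: str, now: float | None = None) -> bool:
        now = time.time() if now is None else now
        bucket = (client_id, int(now // self.window))  # one counter per client per window
        if self.counts[bucket] >= self.limit:
            return False
        self.counts[bucket] += 1
        return True


# Two replicas, each limited to 2 requests per minute per client.
replica_a = InMemoryRateLimiter(limit=2, window_seconds=60)
replica_b = InMemoryRateLimiter(limit=2, window_seconds=60)
# Round-robin five requests from one client within the same minute.
allowed = sum(r.allow("user-1", now=0.0) for r in [replica_a, replica_b, replica_a, replica_b, replica_a])
print(f"allowed {allowed} of 5 requests; the intended global limit was 2")
```

A shared store such as Redis avoids this because every replica reads and increments the same counter.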

Claude Opus 4.6 produced nearly identical code but with Redis from the start. It also caught an edge case in the input validation that K2.6 missed — empty string preferences should return 400, not 200 with an empty body. The difference was one conditional block. Small, but the kind of thing that becomes a bug report two weeks later.
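In Python terms (the original handler was Go), the missing check is a single early return. A sketch with a hypothetical `validate_preference` helper that maps a submitted value to an HTTP status:

```python
from http import HTTPStatus


def validate_preference(value: object) -> HTTPStatus:
    """Hypothetical check for a preference value in a create/update request."""
    if not isinstance(value, str):
        return HTTPStatus.BAD_REQUEST
    if value == "":
        # The edge case: reject an empty preference with 400 instead of
        # accepting it and returning 200 with an empty body.
        return HTTPStatus.BAD_REQUEST
    return HTTPStatus.OK
```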

Opus wins on edge-case awareness. But paying 7x more for one if statement and a Redis default is a tough sell. For structured CRUD work, K2.6 is the smarter pick.

Task 2: Debug session (Python, concurrency bug)

A data processing pipeline had intermittent deadlocks under load. The codebase was 800 lines of async Python with a thread pool, a message queue, and a shared state dictionary. The bug: a lock was acquired inside a context manager that could throw, leaving the lock unreleased.

Kimi K2.6 identified the general area (the lock around the shared state) within two prompts. It suggested wrapping the critical section in a try/finally, which is correct in principle, but it missed that the exception was being swallowed by an outer catch-all. It took four back-and-forth rounds to narrow down the exact line.

Claude Opus 4.6 traced the full call stack in one pass, identified the swallowed exception as the root cause, and proposed restructuring the error handling hierarchy rather than just patching the lock. The solution was more invasive but addressed the underlying design problem.
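The failure mode is easy to reproduce in miniature. This is a hypothetical, synchronous reduction, not the pipeline’s actual code: a context manager whose `__enter__` acquires a lock and then raises. Python does not call `__exit__` when `__enter__` itself raises, so the lock stays held, and the outer `except Exception: pass` hides the error. `FixedStateGuard` shows only the narrow patch (release on failure, then re-raise); the broader fix described above targets the error-handling hierarchy instead.

```python
from __future__ import annotations

import threading

state_lock = threading.Lock()
shared_state: dict[str, int] = {}


class BuggyStateGuard:
    """Hypothetical context manager: takes the lock, then runs setup that can fail."""

    def __init__(self, fail: bool) -> None:
        self.fail = fail

    def __enter__(self) -> dict[str, int]:
        state_lock.acquire()
        if self.fail:
            # Python does not call __exit__ when __enter__ raises,
            # so the lock acquired above is never released.
            raise RuntimeError("setup failed after acquiring the lock")
        return shared_state

    def __exit__(self, exc_type, exc, tb) -> None:
        state_lock.release()


class FixedStateGuard(BuggyStateGuard):
    """Narrow patch: release the lock if setup fails, then re-raise."""

    def __enter__(self) -> dict[str, int]:
        state_lock.acquire()
        try:
            if self.fail:
                raise RuntimeError("setup failed after acquiring the lock")
            return shared_state
        except BaseException:
            state_lock.release()
            raise


def process(guard_cls: type[BuggyStateGuard], fail: bool) -> None:
    try:
        with guard_cls(fail) as state:
            state["count"] = state.get("count", 0) + 1
    except Exception:
        pass  # outer catch-all: swallows the error and hides the leaked lock


def lock_is_free() -> bool:
    """Probe the lock with a timeout (a real caller would block here forever)."""
    acquired = state_lock.acquire(timeout=0.1)
    if acquired:
        state_lock.release()
    return acquired


process(BuggyStateGuard, fail=True)
print("after buggy guard, lock free:", lock_is_free())
state_lock.release()  # reset the leaked lock so the demo can continue
process(FixedStateGuard, fail=True)
print("after fixed guard, lock free:", lock_is_free())
```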

Opus 4.6 is still noticeably better at debugging with layered causality. K2.6 gets there, but needs more steering. Four K2.6 debug rounds at about $0.05 each ($0.20 total) cost less than a single Opus round at $0.75, but you’re spending your own time instead of money.

Task 3: 500-line refactor (JavaScript, data transformation)

A 500-line data transformation module needed restructuring: extract reusable functions, improve naming, add TypeScript types, and split a 200-line monolithic function into composable units.

Kimi K2.6 handled this well. It correctly identified extraction boundaries, produced reasonable TypeScript interfaces, and broke the monolith into five well-named functions. One function had a redundant data pass that could have been eliminated, but the code compiled and passed tests.

Claude Opus 4.6 produced a nearly identical refactor. The extracted functions were named slightly better (more domain-specific rather than generic), and it caught the redundant data pass that K2.6 missed. The output was perhaps 5% better.
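The module itself isn’t shown, so here is a hypothetical Python stand-in (the original was JavaScript/TypeScript) for what a redundant data pass commonly looks like after extraction: filtering into an intermediate list, then looping over that list again to aggregate. The `Record` type and both functions are illustrative; composing the same small helpers in one loop removes the extra pass without changing the result.

```python
from __future__ import annotations

from collections.abc import Iterable
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    user_id: str
    amount_cents: int
    status: str


def is_settled(r: Record) -> bool:
    return r.status == "settled"


def to_dollars(r: Record) -> float:
    return r.amount_cents / 100


def settled_totals_two_pass(records: Iterable[Record]) -> dict[str, float]:
    """Redundant version: builds an intermediate list, then walks it again."""
    settled = [r for r in records if is_settled(r)]  # pass 1
    totals: dict[str, float] = {}
    for r in settled:  # pass 2
        totals[r.user_id] = totals.get(r.user_id, 0.0) + to_dollars(r)
    return totals


def settled_totals(records: Iterable[Record]) -> dict[str, float]:
    """Same helpers, composed in a single pass with no intermediate list."""
    totals: dict[str, float] = {}
    for r in records:
        if is_settled(r):
            totals[r.user_id] = totals.get(r.user_id, 0.0) + to_dollars(r)
    return totals


rows = [Record("u1", 1050, "settled"), Record("u2", 999, "pending"), Record("u1", 250, "settled")]
print(settled_totals(rows))
```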

For refactoring, the gap is small enough that cost should drive the decision. K2.6 at $0.13 produces output that’s 95% as good as Opus at $0.75.

Where K2.6 still loses

Three patterns kept showing up across the 30 days:

Architectural decisions with tradeoffs. When asked “should I use microservices or a monolith for this 3-person startup,” K2.6 gives a balanced list of pros and cons but won’t push back on bad assumptions in your prompt. Opus 4.6 will tell you you’re over-engineering. This matters when you’re using the model as a thinking partner, not just a code generator.

Nuanced code review. K2.6 catches surface-level issues — unused imports, missing error handling, obvious logic bugs. It misses subtler problems: a function that’s technically correct but violates the module’s abstraction layer, or a test that passes but tests the wrong thing. Opus 4.6’s reviews read like they came from a senior engineer who’s been in the codebase for six months.

Long-horizon coherence. K2.6 has a 256K context window and generally holds coherence well. But in sessions exceeding 100K tokens with many back-and-forth turns, it occasionally loses track of earlier decisions. Opus 4.6’s 1M context window and stronger long-range attention mean it stays locked in across longer sessions.

A post on r/ClaudeAI from early May captures the sentiment well. In a thread with 1,471 upvotes discussing hybrid model routing strategies, one developer put it bluntly: K2.6 handles 80% of the work. The remaining 20% — the tasks that need Opus — are the ones where getting it wrong costs hours. Know which 20% you’re dealing with before you pick the model.

r/opencodeCLI saw a related thread the same week — 79 upvotes — where a developer described running both Kimi K2.6 and DeepSeek V4 alongside Claude, routing tasks based on complexity. The pattern is spreading because the economics are impossible to ignore.

A word on Claude Opus 4.7

Anthropic released Claude Opus 4.7 as their latest flagship, claiming a “step-change improvement in agentic coding.” Community reception has been mixed. Multiple threads on r/ClaudeCode and r/ClaudeAI report quality regressions on specific coding tasks compared to 4.6, particularly around instruction following and consistency.

This article compares against Opus 4.6 because search data (104 Google Search Console impressions for “kimi 2.6 vs opus 4.6”) suggests that’s the comparison developers are actually making. If you’re already on Opus 4.7 and happy with it, the pricing gap vs K2.6 is the same, since 4.7 is priced like 4.6 ($5/$25 per MTok), and the capability gap may be wider. If you’re on 4.6 and deciding between upgrading to 4.7 and trying K2.6, the cost math points strongly toward K2.6.

Access both via ofox

Both models are available through a single ofox API key. Swap the model ID and you’re done:

```python
from openai import OpenAI

# One ofox key serves both models; only the model ID changes per call.
client = OpenAI(
    api_key="your-ofox-key",
    base_url="https://api.ofox.ai/v1",
)

# Kimi K2.6: about 7x cheaper, strong for structured coding
kimi_response = client.chat.completions.create(
    model="moonshotai/kimi-k2.6",
    messages=[{"role": "user", "content": "Build a rate-limited CRUD API in Go"}],
)
print(kimi_response.choices[0].message.content)

# Claude Opus 4.6: deeper reasoning, edge-case awareness
opus_response = client.chat.completions.create(
    model="anthropic/claude-opus-4.6",
    messages=[{"role": "user", "content": "Review this code for subtle concurrency bugs"}],
)
print(opus_response.choices[0].message.content)
```

If you’re doing heavy coding, try hybrid routing: structured tasks (API scaffolding, refactoring, boilerplate) go to K2.6. Complex reasoning (debugging, architecture, code review) goes to Opus 4.6. Quality where it matters, cost savings everywhere else.

The verdict: which model for which task

| Task type | Best pick | Why |
|---|---|---|
| API scaffolding / CRUD | Kimi K2.6 | Structured, pattern-driven work; K2.6 is excellent here |
| Refactoring | Kimi K2.6 | 95% of Opus quality at 1/7 the cost |
| Boilerplate / file reading | Kimi K2.6 | Not worth paying Opus prices for this |
| Debugging (shallow) | Either | K2.6 is fine for stack traces and obvious bugs |
| Debugging (deep, multi-cause) | Claude Opus 4.6 | Opus traces layered causality better |
| Architecture review | Claude Opus 4.6 | Opus pushes back on bad assumptions |
| Code review (surface) | Kimi K2.6 | Catches the obvious stuff |
| Code review (nuanced) | Claude Opus 4.6 | Reads like a senior engineer reviewed it |
| Long sessions (100K+ tokens) | Claude Opus 4.6 | Stronger long-range coherence |
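The table maps directly onto a lookup. A minimal Python sketch, assuming you classify each task yourself before the call; the `Task` enum and `pick_model` helper are hypothetical, and the model IDs are the ofox IDs used earlier in this article. Rows marked “Either” default to K2.6 on cost grounds, which is an assumption rather than a result from the tests.

```python
from __future__ import annotations

from enum import Enum


class Task(Enum):
    API_SCAFFOLDING = "api_scaffolding"
    REFACTORING = "refactoring"
    BOILERPLATE = "boilerplate"
    DEBUG_SHALLOW = "debug_shallow"
    DEBUG_DEEP = "debug_deep"
    ARCHITECTURE_REVIEW = "architecture_review"
    CODE_REVIEW_SURFACE = "code_review_surface"
    CODE_REVIEW_NUANCED = "code_review_nuanced"


KIMI = "moonshotai/kimi-k2.6"
OPUS = "anthropic/claude-opus-4.6"

# Encodes the verdict table; the "Either" row defaults to the cheaper model.
ROUTES: dict[Task, str] = {
    Task.API_SCAFFOLDING: KIMI,
    Task.REFACTORING: KIMI,
    Task.BOILERPLATE: KIMI,
    Task.DEBUG_SHALLOW: KIMI,
    Task.DEBUG_DEEP: OPUS,
    Task.ARCHITECTURE_REVIEW: OPUS,
    Task.CODE_REVIEW_SURFACE: KIMI,
    Task.CODE_REVIEW_NUANCED: OPUS,
}

LONG_SESSION_TOKENS = 100_000  # the "Long sessions (100K+ tokens)" row


def pick_model(task: Task, session_tokens: int = 0) -> str:
    """Return the model ID for a task, escalating long sessions to Opus."""
    if session_tokens >= LONG_SESSION_TOKENS:
        return OPUS
    return ROUTES[task]
```

The returned string is what you would pass as `model=` to `client.chat.completions.create`.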

K2.6 covers roughly 80% of a developer’s daily coding tasks at one-seventh the cost. Paying Opus prices for CRUD scaffolding and boilerplate is leaving money on the table. The 20% where Opus 4.6 still earns its premium — complex debugging, architecture calls, nuanced code review — are the tasks where getting it wrong costs hours. The trick isn’t picking a winner. It’s knowing which 20% you’re in before you hit send.

Pricing source: Anthropic platform docs and Kimi platform docs, May 2026. Benchmark source: MoonshotAI official benchmarks published April 21, 2026. Exchange rate: 1 CNY ≈ 0.137 USD.