GPT-4o vs Claude Opus vs Gemini Pro: Production API Comparison for 2026

TL;DR: OpenAI’s GPT-4o excels at structured output and function calling, Anthropic’s Claude Opus 4.7 and Sonnet 4.6 lead on coding benchmarks and instruction-following, and Google’s Gemini models offer extended context capabilities at competitive pricing. The right choice depends on your specific workload — coding tasks favor Claude, structured output favors GPT-4o, and large-context processing favors Gemini. This guide compares all three flagship families across real-world production criteria to help you pick the right model.

Why This Comparison Matters Now

All three flagship model families are production-ready and available via a single API endpoint. The choice is no longer about access; it’s about matching capability to cost for your specific workload. OpenAI’s GPT-4o, Anthropic’s Claude 4 series (Opus 4.7, Sonnet 4.6, Haiku 4.5), and Google’s Gemini models (1.5 Pro, 2.0 Flash) represent the current state of the art in production AI. Each provider has distinct strengths, and the pricing differences are significant enough to matter at production scale.

This review compares all three model families via ofox’s unified API gateway and gives you the decision framework to pick the right model without overpaying.

Pricing Comparison

Important: Specific per-token pricing varies by provider, usage tier, and changes frequently. For current rates, always check:

- OpenAI’s official pricing page for GPT models

- Anthropic’s pricing page for Claude models

- Google’s pricing page for Gemini models

General Cost Patterns (based on typical provider pricing structures as of early 2026):

| Model Family | Positioning | Typical Use Case |
|---|---|---|
| OpenAI GPT-4o | Premium pricing for performance-optimized inference | Latency-sensitive applications, structured output |
| Anthropic Claude Opus 4.7 | High-capability flagship | Complex reasoning, large codebases |
| Anthropic Claude Sonnet 4.6 | Balanced cost-performance | General-purpose production workloads |
| Google Gemini 1.5 Pro / 2.0 Flash | Competitive pricing for high-volume | Large-context processing, batch workloads |

Cost Optimization Considerations:

- Input vs output token ratios: Output tokens typically cost 3-10x more than input tokens

- Prompt caching: All three providers support some form of caching, which can cut the cost of cached input tokens by roughly 50-90% when the same prompt prefix is reused across requests

- Context window usage: Some providers charge more for requests exceeding certain token thresholds

- Volume discounts: Enterprise tiers often provide significant discounts
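
To make the input/output ratio and the caching effect concrete, here is a minimal sketch of a per-request cost estimate. The prices, the cached share of the prompt, and the cache discount are placeholder assumptions, not any provider's actual rates; `Pricing` and `estimate_cost` are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical per-million-token prices in USD (not real provider rates)."""
    input_per_mtok: float
    output_per_mtok: float
    cache_discount: float = 0.0  # fraction taken off cached input tokens, 0.0-1.0


def estimate_cost(pricing: Pricing, input_tokens: int, output_tokens: int,
                  cached_input_tokens: int = 0) -> float:
    """Estimate the USD cost of one request.

    Cached input tokens are billed at a discount; everything else at list price.
    Cache-write fees, which some providers charge, are ignored in this sketch.
    """
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_input_tokens
    input_cost = uncached * pricing.input_per_mtok
    input_cost += cached_input_tokens * pricing.input_per_mtok * (1 - pricing.cache_discount)
    output_cost = output_tokens * pricing.output_per_mtok
    return (input_cost + output_cost) / 1_000_000


# Placeholder numbers: output priced at 5x input, cached input discounted by 90%.
example = Pricing(input_per_mtok=2.0, output_per_mtok=10.0, cache_discount=0.9)
no_cache = estimate_cost(example, input_tokens=8_000, output_tokens=1_000)
with_cache = estimate_cost(example, input_tokens=8_000, output_tokens=1_000,
                           cached_input_tokens=6_000)
print(f"without caching: ${no_cache:.4f}, with caching: ${with_cache:.4f}")
```

With these placeholder numbers the 90% discount applies only to the cached input tokens, so the total bill drops by roughly 40%, not 90%. How much caching saves in practice depends on how output-heavy the workload is.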

For detailed cost optimization strategies, see How to Reduce AI API Costs.

Coding Performance: What the Benchmarks Show

SWE-Bench Verified measures whether a model can read a GitHub issue, understand a codebase, and submit a working fix. It’s one of the closest proxies we have to real software engineering work.

Current Landscape (based on public leaderboards as of early 2026):

Claude models (particularly Opus 4.7 and Sonnet 4.6) consistently rank among the top performers on SWE-Bench Verified and related coding benchmarks. These models excel at:

- Understanding complex codebases with cross-file dependencies

- Following detailed refactoring instructions

- Maintaining code style and conventions

- Handling edge cases in existing code

OpenAI’s GPT-4o demonstrates strong performance on:

- Structured code generation with specific output formats

- Function calling and tool use in coding contexts

- Rapid iteration on smaller code changes

- API integration and error handling

Google’s Gemini models show competitive performance on:

- Processing large codebases that fit within extended context windows

- Multimodal code understanding (code + diagrams + documentation)

- Batch processing of code analysis tasks

Important Note: Benchmark scores change frequently as models are updated. For the latest SWE-Bench results, check the official SWE-Bench leaderboard. For task-specific coding recommendations, see best AI model for coding 2026.

What This Means in Practice: The gap between top models is narrowing. For most production coding tasks, the difference in model capability matters less than:

- How well the model integrates with your development workflow

- Whether the model’s output format matches your needs

- The cost-performance tradeoff for your specific use case

Agentic and Multi-Step Tasks

Long-horizon agentic tasks require models to plan, execute multiple steps, handle errors, and recover without human intervention. This capability is measured by benchmarks like OSWorld (computer use) and similar multi-step evaluation frameworks.

Current Observations:

All three flagship model families demonstrate strong agentic capabilities, with different strengths:

OpenAI GPT-4o excels at:

- Structured tool calling and function execution

- Recovering from API errors with retry logic

- Following multi-step plans with clear intermediate outputs

- Integrating with existing agent frameworks (LangChain, LlamaIndex)

Anthropic Claude models excel at:

- Following complex, multi-constraint instructions across long workflows

- Maintaining context and consistency across extended agent sessions

- Reasoning about when to stop vs. continue a multi-step process

- Handling ambiguous situations where the next step isn’t obvious

Google Gemini models excel at:

- Processing large amounts of context to inform agent decisions

- Multimodal agent tasks (combining text, images, and other inputs)

- Parallel processing of multiple agent subtasks

When Agentic Capability Matters: If you’re building an agent that needs to autonomously complete tasks like “research competitors, draft a comparison table, and format it for presentation,” all three model families can handle it. The choice depends more on:

- Your existing infrastructure and SDK preferences

- Cost constraints for your expected request volume

- Whether your agent needs multimodal inputs or extended context

For detailed agent use cases and model selection, see best AI model for agents 2026.

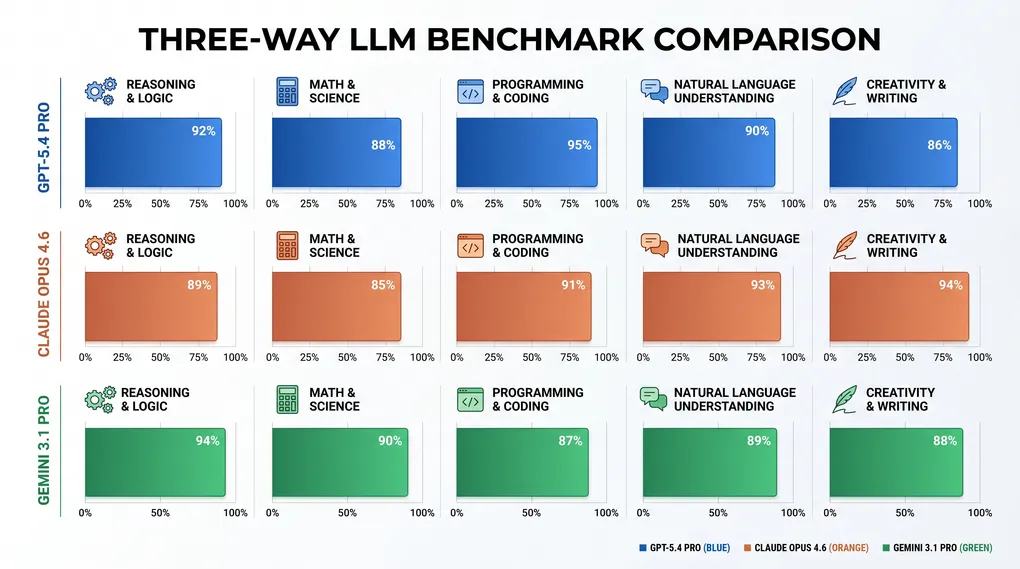

Reasoning Performance

GPQA (graduate-level science questions), MATH (competition mathematics), and MMLU (broad academic and professional knowledge) test reasoning and knowledge without code execution.

Current Landscape:

All three flagship model families demonstrate strong reasoning capabilities. Public benchmark leaderboards show competitive performance across providers, with scores varying by specific task type and evaluation methodology.

General Patterns:

- Claude models tend to excel at nuanced reasoning tasks requiring careful instruction-following

- GPT-4o demonstrates strong performance on structured reasoning with clear output formats

- Gemini models show competitive performance, particularly on tasks that benefit from extended context

The Practical Takeaway: For most production applications, the reasoning gap between flagship models is smaller than the coding or agentic capability gaps. All three model families are “good enough” for typical reasoning tasks.

What Matters More Than Benchmark Scores:

- How well the model follows your specific instructions and constraints

- Whether the model’s output format matches your downstream pipeline

- How the model handles edge cases unique to your domain

- The model’s failure modes and whether they’re acceptable for your use case

For the latest reasoning benchmark results, check:

- GPQA Leaderboard

- MATH Benchmark Results

- Model cards from OpenAI, Anthropic, and Google AI

Context Windows and Long-Context Performance

Context window size determines how much text you can send in a single request. This matters for RAG pipelines, document analysis, and long conversations.

Context Window Capabilities:

| Model Family | Context Window | Notes |
|---|---|---|
| Claude Opus 4.7 | 200,000 tokens | No surcharges, consistent pricing across full window |
| Claude Sonnet 4.6 | 200,000 tokens | No surcharges, consistent pricing across full window |
| OpenAI GPT-4o | 128,000 tokens | Check provider documentation for any tier-based pricing |
| Google Gemini 1.5 Pro | 1,000,000+ tokens | Largest context window; verify pricing tiers for long prompts |
| Google Gemini 2.0 Flash | 1,000,000+ tokens | Fast inference with extended context |

Long-Context Use Cases:

- Multi-turn conversations: All three providers handle typical chat sessions (10K-50K tokens)

- Document analysis: Claude and Gemini excel at processing long documents (50K-200K tokens)

- Codebase analysis: Gemini’s extended context is ideal for processing entire repositories (200K+ tokens)

- RAG systems: All three support large context windows for stuffing retrieved documents

Performance Considerations:

- All three providers are designed to hold reasoning quality across their context windows, though quality on very long inputs is worth verifying on your own data

- Retrieval accuracy can degrade slightly for information buried in the middle of very long contexts (the “lost in the middle” problem)

- For best results, place critical information at the beginning or end of your prompt

- Consider whether you actually need the full context window; many production requests stay well under 10K tokens
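
The advice to keep critical information at the edges of the prompt can be sketched as a small prompt builder. This is one possible layout, not a provider-recommended format: `build_long_context_prompt` is a hypothetical helper, and the `<document>` wrapper tags are an assumption chosen only to keep the sections visually distinct.

```python
def build_long_context_prompt(instructions: str, documents: list[str], question: str) -> str:
    """Assemble a prompt with the critical parts at the edges.

    Instructions go first, bulk reference material sits in the middle, and a
    short restatement of the instructions plus the question go last.
    """
    doc_sections = [
        f'<document index="{i}">\n{doc.strip()}\n</document>'
        for i, doc in enumerate(documents, start=1)
    ]
    parts = [
        instructions.strip(),
        "\n\n".join(doc_sections),
        f"Reminder: {instructions.strip()}",
        f"Question: {question.strip()}",
    ]
    # Drop empty sections (for example, when no documents are supplied).
    return "\n\n".join(part for part in parts if part)


prompt = build_long_context_prompt(
    instructions="Answer using only the documents provided.",
    documents=["First retrieved passage.", "Second retrieved passage."],
    question="Which passage mentions pricing?",
)
print(prompt)
```

Repeating the instructions near the end costs a few extra tokens. Whether it measurably helps is something to check against your own eval set.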

Cost Implications:

- Some providers charge more for requests exceeding certain token thresholds

- Verify pricing tiers on official provider pages before committing to long-context workloads

- Prompt caching can significantly reduce costs for repeated long-context requests

Speed and Latency: Real-World Performance

Response latency matters for user-facing applications. Performance characteristics vary based on:

- Geographic region and network conditions

- Request complexity and token count

- Current provider load and capacity

- Whether you’re using streaming vs. non-streaming responses

General Performance Patterns:

OpenAI GPT-4o: Optimized for low latency and high throughput. Typically delivers fast time-to-first-token, making it suitable for interactive applications where sub-second response time is critical.

Anthropic Claude models: Balanced performance profile suitable for most production workloads. Streaming responses provide good perceived performance for user-facing applications.

Google Gemini models: Performance varies with context size. Gemini 2.0 Flash is optimized for speed, while Gemini 1.5 Pro prioritizes capability over raw speed.

Measuring Latency in Your Environment:

Latency varies significantly based on your specific setup. To get accurate measurements:

- Test in your target geographic region

- Use realistic prompt sizes and complexity

- Measure during your expected peak usage times

- Test with streaming enabled (if your application uses it)
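
A minimal sketch of such a measurement is shown below. It times any streamed response, so it is not tied to a particular SDK; `time_stream` and `percentile` are hypothetical helpers introduced here. The stream is created inside the timed region so that request setup counts toward the result.

```python
import math
import time
from typing import Callable, Iterable, Optional


def time_stream(start_stream: Callable[[], Iterable[object]],
                clock: Callable[[], float] = time.perf_counter
                ) -> tuple[Optional[float], float]:
    """Return (time_to_first_chunk, total_time) in seconds.

    `start_stream` is a zero-argument callable that issues the request and
    returns an iterable of chunks. time_to_first_chunk is None when the
    stream yields nothing.
    """
    start = clock()
    first: Optional[float] = None
    for _ in start_stream():
        if first is None:
            first = clock() - start
    return first, clock() - start


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile; adequate for small latency sample sets."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]
```

With the OpenAI SDK client shown later in this article, a call might look like `time_stream(lambda: client.chat.completions.create(model="gpt-4o", messages=messages, stream=True))`. The first chunk may carry only metadata rather than text, so treat the first value as an approximation of time-to-first-token. Collect a few dozen samples per model and compare the median and 95th percentile rather than single runs.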

For Interactive Applications: If sub-second response time is critical (chatbots, code assistants, live suggestions), test all three providers in your target region to measure actual latency. The fastest model on paper may not be fastest for your specific use case.

For Batch Processing: Latency differences matter less when processing documents or background jobs. Focus on cost and context window instead.

When to Pick Each Model Family

Pick OpenAI GPT-4o when:

- You need fast, reliable structured output (JSON, function calls, tool use)

- Your application requires low latency for interactive use cases

- You’re building on existing OpenAI-compatible infrastructure

- Structured output and function calling are critical to your workflow

- You need strong ecosystem integration with agent frameworks

Best for: Chatbots, code assistants with function calling, real-time agents, applications requiring structured JSON output

Pick Anthropic Claude (Opus 4.7 or Sonnet 4.6) when:

- The task involves writing, refactoring, or debugging code

- You need strong instruction-following with complex, multi-constraint prompts

- You want excellent cost-performance balance (especially Sonnet 4.6)

- Your workload fits within 200K context (most do)

- Code quality and reasoning depth matter more than raw speed

Best for: Code generation and review, content writing, document analysis, complex reasoning tasks, general-purpose production assistants

Pick Google Gemini when:

- You need extended context processing (>200K tokens)

- Your application requires multimodal inputs (text + images + documents)

- You’re running high-volume workloads where cost per token is critical

- You need to process entire codebases or very long documents

- Fast inference with large context is important

Best for: Document analysis, codebase search, RAG pipelines with large context, batch processing, multi-tenant applications, multimodal tasks

How to Access All Three with One API Key

ofox provides a unified OpenAI-compatible API at https://api.ofox.ai/v1. One API key, one endpoint, switch models by changing the model parameter:

    from openai import OpenAI

    client = OpenAI(
        api_key="sk-your-ofox-key",
        base_url="https://api.ofox.ai/v1"
    )

    # Use Claude Sonnet 4.6
    response = client.chat.completions.create(
        model="claude-sonnet-4-6",
        messages=[{"role": "user", "content": "Your prompt"}]
    )

    # Switch to GPT-4o
    response = client.chat.completions.create(
        model="gpt-4o",
        messages=[{"role": "user", "content": "Your prompt"}]
    )

    # Or use Gemini 1.5 Pro for large-context tasks
    response = client.chat.completions.create(
        model="gemini-1.5-pro",
        messages=[{"role": "user", "content": "Your prompt"}]
    )

Every OpenAI SDK parameter works identically: temperature, max_tokens, tools, stream. No new SDK to learn. For migration details, see OpenAI SDK migration guide.
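
Because only the model parameter changes between providers, the shared request settings can live in one place. The following is a minimal sketch under that assumption; `build_request` and `DEFAULTS` are hypothetical names introduced here, and the default values are arbitrary.

```python
from typing import Any

# Arbitrary illustrative defaults shared by every request.
DEFAULTS: dict[str, Any] = {"temperature": 0.2, "max_tokens": 512}


def build_request(model: str, prompt: str, **overrides: Any) -> dict[str, Any]:
    """Build keyword arguments for client.chat.completions.create().

    Only `model` differs between providers; the rest of the payload is shared.
    Any keyword passed in `overrides` replaces the corresponding default.
    """
    request: dict[str, Any] = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        **DEFAULTS,
    }
    request.update(overrides)
    return request


# Same prompt and settings for three model families (identifiers as used in this article).
payloads = [
    build_request(model, "Summarize this changelog.")
    for model in ("claude-sonnet-4-6", "gpt-4o", "gemini-1.5-pro")
]
print([p["model"] for p in payloads])
```

Each payload can then be sent with `client.chat.completions.create(**payload)`, which keeps side-by-side comparisons honest because every model receives identical settings.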

Available Models via ofox.ai:

- OpenAI: gpt-4o, gpt-4o-mini, and other GPT models

- Anthropic: claude-opus-4-7, claude-sonnet-4-6, claude-haiku-4-5

- Google: gemini-1.5-pro, gemini-2.0-flash, and other Gemini models

For the complete list of available models and their identifiers, check ofox.ai/models.

The Practical Workflow: Start with Balance, Optimize Later

Most teams waste money by defaulting to the most expensive model or the wrong model for their use case. The efficient approach:

- Start with a balanced model like Claude Sonnet 4.6 for your task

- Build an eval suite that measures success rate on real examples from your domain

- Test alternatives if the initial model doesn’t meet your quality or latency requirements

- Optimize based on actual data, not assumptions about which model is “best”

Multi-Model Strategy:

You don’t have to pick just one model. Many production applications use multiple models for different tasks:

Routing by Task Type:

- Simple queries → Cost-efficient model (Gemini Flash, Claude Haiku)

- Medium complexity → Balanced model (Claude Sonnet, GPT-4o)

- High complexity → Flagship model (Claude Opus, GPT-4o for structured output)

Routing by Context Length:

- Short context (<10K tokens) → Any model based on other requirements

- Medium context (10K-100K tokens) → Claude or GPT-4o

- Very long context (>100K tokens) → Gemini 1.5 Pro or 2.0 Flash
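
The two routing rules above can be combined into a small router. This is a sketch, not a recommended policy: the mapping is illustrative, the token estimate is a crude character-count heuristic rather than a real tokenizer, and `Complexity`, `estimate_tokens`, and `pick_model` are hypothetical names.

```python
from enum import Enum


class Complexity(Enum):
    SIMPLE = "simple"
    MEDIUM = "medium"
    HIGH = "high"


# Illustrative mapping only; model identifiers follow the ones used in this article.
MODEL_BY_COMPLEXITY = {
    Complexity.SIMPLE: "gemini-2.0-flash",
    Complexity.MEDIUM: "claude-sonnet-4-6",
    Complexity.HIGH: "claude-opus-4-7",
}
LONG_CONTEXT_MODEL = "gemini-1.5-pro"
LONG_CONTEXT_THRESHOLD = 100_000  # tokens, matching the ">100K" tier above


def estimate_tokens(text: str) -> int:
    """Very rough heuristic: about 4 characters per token for English text."""
    return max(1, len(text) // 4)


def pick_model(prompt: str, complexity: Complexity) -> str:
    """Route by context length first, then by task complexity."""
    if estimate_tokens(prompt) > LONG_CONTEXT_THRESHOLD:
        return LONG_CONTEXT_MODEL
    return MODEL_BY_COMPLEXITY[complexity]


print(pick_model("What is the capital of France?", Complexity.SIMPLE))
```

Checking context length first means a prompt that does not fit the cheaper model is never sent to it. How a task gets its complexity label (a classifier, a keyword rule, the calling endpoint) is left to the application.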

Fallback Strategy:

- Primary: Your preferred model for the task

- Fallback 1: Alternative model if primary is rate-limited or unavailable

- Fallback 2: Third option for maximum reliability
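
A fallback chain like this can be sketched as a loop over model identifiers. The code below takes the request function as a parameter, so it makes no assumptions about the SDK; `call_with_fallback` and `AllModelsFailed` are hypothetical names introduced for illustration.

```python
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


class AllModelsFailed(RuntimeError):
    """Raised when every model in the chain fails."""


def call_with_fallback(models: Sequence[str], call: Callable[[str], T],
                       retryable: tuple[type[BaseException], ...] = (Exception,)
                       ) -> tuple[str, T]:
    """Try each model in order; return (model_used, result) from the first success.

    Only exceptions listed in `retryable` trigger a fallback. Anything else
    propagates immediately so that programming errors are not masked.
    """
    errors: list[str] = []
    for model in models:
        try:
            return model, call(model)
        except retryable as exc:
            errors.append(f"{model}: {exc!r}")
    raise AllModelsFailed("; ".join(errors) or "no models configured")
```

In real use, `call` would wrap the chat completion request, and `retryable` is best narrowed to transient failures such as rate-limit, timeout, and connection errors. Falling back on every exception would also retry requests that are simply malformed.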

This pattern can reduce API spend by 40-70% without sacrificing quality on tasks that need flagship capability, depending on how much of your traffic the cheaper tiers can absorb. For the full cost optimization framework, see how to reduce AI API costs.

Benchmark Caveats: What the Numbers Don’t Tell You

Benchmarks measure specific tasks under controlled conditions. They don’t capture:

- How well the model follows your specific instructions and style

- Whether the model’s output format matches your downstream pipeline

- How the model handles edge cases unique to your domain

- Whether the model’s failure modes are acceptable for your use case

- Real-world performance under your specific latency and cost constraints

The only benchmark that matters is your own eval suite on your actual tasks. Use public benchmarks to narrow the candidate set, then test on real workloads before committing.

Building Your Own Eval Suite:

- Collect 20-50 representative examples from your actual use case

- Define clear success criteria (not just “looks good”)

- Test all candidate models on the same examples

- Measure success rate, latency, and cost per request

- Pick the model that meets your quality bar at the lowest cost
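
The steps above can be sketched as a small harness. It is a minimal outline, not a full evaluation framework: `Example`, `Report`, `evaluate`, and `cheapest_passing` are hypothetical names, and `run` stands for a caller-supplied function that sends the prompt to a model and returns the output together with its cost.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Example:
    prompt: str
    check: Callable[[str], bool]  # explicit success criterion for this example


@dataclass
class Report:
    model: str
    success_rate: float
    avg_latency_s: float
    avg_cost_usd: float


def evaluate(model: str, run: Callable[[str, str], tuple[str, float]],
             examples: list[Example]) -> Report:
    """Run every example through one model and aggregate the results.

    `run(model, prompt)` must return (output_text, cost_usd).
    """
    if not examples:
        raise ValueError("need at least one example")
    passed, latency, cost = 0, 0.0, 0.0
    for ex in examples:
        start = time.perf_counter()
        output, cost_usd = run(model, ex.prompt)
        latency += time.perf_counter() - start
        cost += cost_usd
        passed += int(ex.check(output))
    n = len(examples)
    return Report(model, passed / n, latency / n, cost / n)


def cheapest_passing(reports: list[Report], quality_bar: float) -> Optional[Report]:
    """Pick the lowest-cost model whose success rate meets the bar, if any."""
    ok = [r for r in reports if r.success_rate >= quality_bar]
    return min(ok, key=lambda r: r.avg_cost_usd) if ok else None
```

Writing each success criterion as a function forces the "not just looks good" discipline: a check might parse JSON, compare against a known answer, or run generated code against tests.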

This approach prevents overpaying for capability you don’t need and ensures the model actually works for your specific requirements.

Conclusion: Match Model to Workload, Not Hype

For production workloads, Claude models deliver excellent coding performance and instruction-following, GPT-4o excels at structured output and low latency, and Gemini models handle extended context at competitive pricing. Pick based on your specific requirements, not marketing claims. The best approach for most teams:

- Start with Claude Sonnet 4.6 for balanced cost-performance on general tasks

- Use GPT-4o for latency-sensitive applications requiring structured output

- Use Gemini models for large-context processing or high-volume workloads

- Build evals to measure actual performance on your tasks

- Route intelligently between models based on task complexity and requirements

The best part: you don’t have to commit to one model. ofox’s unified API lets you route tasks to the right model based on complexity, switch models mid-project as requirements change, and optimize cost without rewriting code.

All three model families are available via ofox.ai with a unified OpenAI-compatible API. Sign up for a free account to test all three with $5 in free credits.