LLM API Cache Hit Math: Why Your DeepSeek Bill Says $4 But the Pricing Says $50

The official DeepSeek V4 Flash price is $0.14 per million input tokens. The bill on a real coding workload is closer to $0.005. The 28x gap is cache hit math, and almost nobody calculates it correctly. Here is the worked math across DeepSeek, Claude, and OpenAI in 2026.
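As a rough illustration of the gap described above, the effective input price can be modeled as a weighted average of the cache-miss and cache-hit prices. The sketch below is only that: the $0.14 list price and the $0.005 effective figure come from the teaser, while the cache-hit price and hit rate are hypothetical placeholders, not published numbers.

```python
def effective_price(miss_price, hit_price, hit_rate):
    """Blended price per million input tokens for a cache hit rate in [0, 1]."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * hit_price + (1.0 - hit_rate) * miss_price


LIST_PRICE = 0.14           # $/M input tokens, from the text
OBSERVED_PRICE = 0.005      # $/M input tokens, from the text
ASSUMED_HIT_PRICE = 0.0028  # hypothetical cached-token price, for illustration only
ASSUMED_HIT_RATE = 0.95     # hypothetical cache hit rate, for illustration only

# Blended price under the assumed values above.
blended = effective_price(LIST_PRICE, ASSUMED_HIT_PRICE, ASSUMED_HIT_RATE)

# Ratio between the list price and the observed effective price (the "28x gap").
gap = LIST_PRICE / OBSERVED_PRICE

print(f"blended: ${blended:.4f}/M, list-to-observed gap: {gap:.0f}x")
```

Swapping in the provider's actual cached-token price and a measured hit rate is what turns this from a sketch into a bill estimate.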

Claude Opus 4.6 vs GPT-5.5 vs Gemini 3.1 Pro: Reasoning Benchmarks (3 Real Tasks Tested)

Three reasoning tasks, three frontier models, one weekend of side-by-side runs. Opus 4.6 still leads on careful step-by-step logic, GPT-5.5 wins on speed-of-correct-answer, and Gemini 3.1 Pro delivers ~70% of the quality at one-third the price. Here is the breakdown — including why we tested 4.6 instead of 4.7.

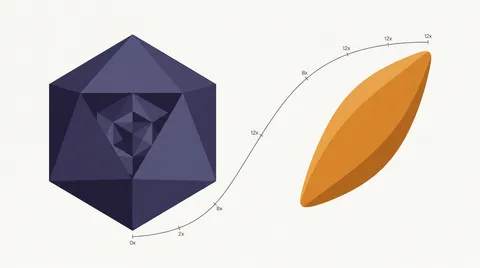

DeepSeek V4 Pro vs Flash: 3 Tasks, 100M Tokens, Real Cost-Quality Tradeoff

V4 Flash is 12x cheaper than V4 Pro and close enough on bounded coding work that the gap rarely shows up. We break down where it does — three task types where Flash quietly fails, the cache-hit math behind the headline price, and the rule of thumb that decides which model to pick.

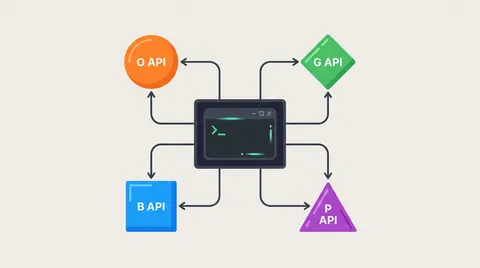

How to Switch Claude Code Backend in 2026: DeepSeek, OpenAI, OpenRouter, or Custom API in 60 Seconds

Two env vars, one /status check, 60 seconds total. Exact ANTHROPIC_BASE_URL configs for DeepSeek, ofox, OpenAI, and OpenRouter — plus the 4 protocol pitfalls that silently break tool calls and extended thinking.

Why Claude Max Users Are Leaving in May 2026: A Data-Driven Look at the Throttling Backlash

Anthropic confirmed peak-hour throttling, two cache bugs that inflate token bills 10–20×, and a v2.1.100 client that burns 40% more tokens. Here is what actually happened to Claude Max plans between March 23 and May 6, told through GitHub issues, Reddit threads, and the May 6 reversal announcement.

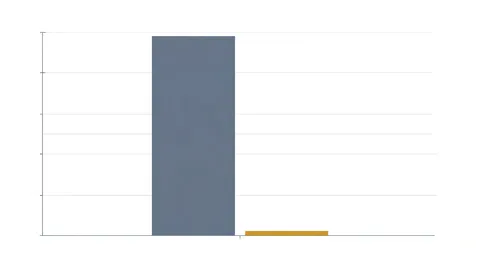

Replacing Claude with DeepSeek V4 in Claude Code: 30-Day, 100M-Token Cost & Quality Test

A real-world cost comparison: DeepSeek V4 Flash costs $0.14/M input vs Claude Opus 4.6 at $5/M. At 100M tokens/month, that's $10.52 vs $848 with cache — a 98.7% cost reduction. But token math isn't the whole story.
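The percentage follows directly from the two monthly totals quoted above. A minimal sketch of that arithmetic, using only the $10.52 and $848 figures from the teaser (the function name is illustrative):

```python
def cost_reduction(baseline_cost, new_cost):
    """Fractional saving when moving from baseline_cost to new_cost."""
    if baseline_cost <= 0:
        raise ValueError("baseline_cost must be positive")
    return 1.0 - new_cost / baseline_cost


CLAUDE_MONTHLY = 848.0     # $ per month at 100M tokens with cache, from the text
DEEPSEEK_MONTHLY = 10.52   # $ per month at 100M tokens with cache, from the text

reduction = cost_reduction(CLAUDE_MONTHLY, DEEPSEEK_MONTHLY)
print(f"cost reduction: {reduction:.2%}")
```

The result comes to just under 99%, in line with the figure quoted above.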

Kimi K2.6 vs Claude Opus 4.6: 30-Day Coding Benchmark (10x Cheaper, 80% as Good?)

Kimi K2.6 costs roughly 7x less per token than Claude Opus 4.6 while scoring within 3-5 points on every major coding benchmark. After 30 days of testing across REST API builds, debug sessions, and 500-line refactors, here is where each model wins — and where K2.6 still falls short.

AI Video Generation APIs Compared: Sora 2 Pro vs Veo 3.1 vs Kling 2.6 Pro (2026)

Hands-on comparison of the three leading video generation APIs — OpenAI's Sora 2 Pro, Google's Veo 3.1, and Kuaishou's Kling 2.6 Pro. Capabilities, pricing, audio, resolution, and which fits your pipeline.

How to Cut Claude Code Costs by 80% with Hybrid Model Routing (The Pattern Top Devs Use in 2026)

We tracked where Claude Code tokens actually go for a week: 85% don't need Opus. Here's the hybrid routing setup that replaced a $200 Max plan with a $30/month bill, covering ofox, OpenRouter, LiteLLM, DeepClaude, and a DIY proxy.

GPT-5.5 Instant Lands: New ChatGPT Default Model, Hallucinations Down 52.5% in High-Stakes Domains

OpenAI made GPT-5.5 Instant the default ChatGPT model on May 5; its API alias is chat-latest. It produces 52.5% fewer hallucinations on medicine/law/finance prompts and 30.2% shorter answers. It is not the same model as April's GPT-5.5 flagship. Here is what changed and whether to migrate now.

Kling 2.6 Pro Video API: Complete Developer Guide (2026)

Practical developer guide to Kuaishou's Kling 2.6 Pro video generation via Replicate — text-to-video, image-to-video, native audio with lip-synced dialogue, and pricing breakdown.