Images API

There are two endpoints: Generate (text → image) and Edit (image + text → image). Both return the standard OpenAI response shape, with the image base64-encoded in data[0].b64_json.

Gemini image models (e.g. google/gemini-3.1-flash-image-preview) support only generation on these endpoints, not editing. To edit images with Gemini, use the Gemini native protocol.

Generate images

POST https://api.ofox.ai/v1/images/generations

With gpt-image-2

Python

import base64

from openai import OpenAI

client = OpenAI(api_key="YOUR_OFOX_API_KEY", base_url="https://api.ofox.ai/v1")

resp = client.images.generate(

model="openai/gpt-image-2",

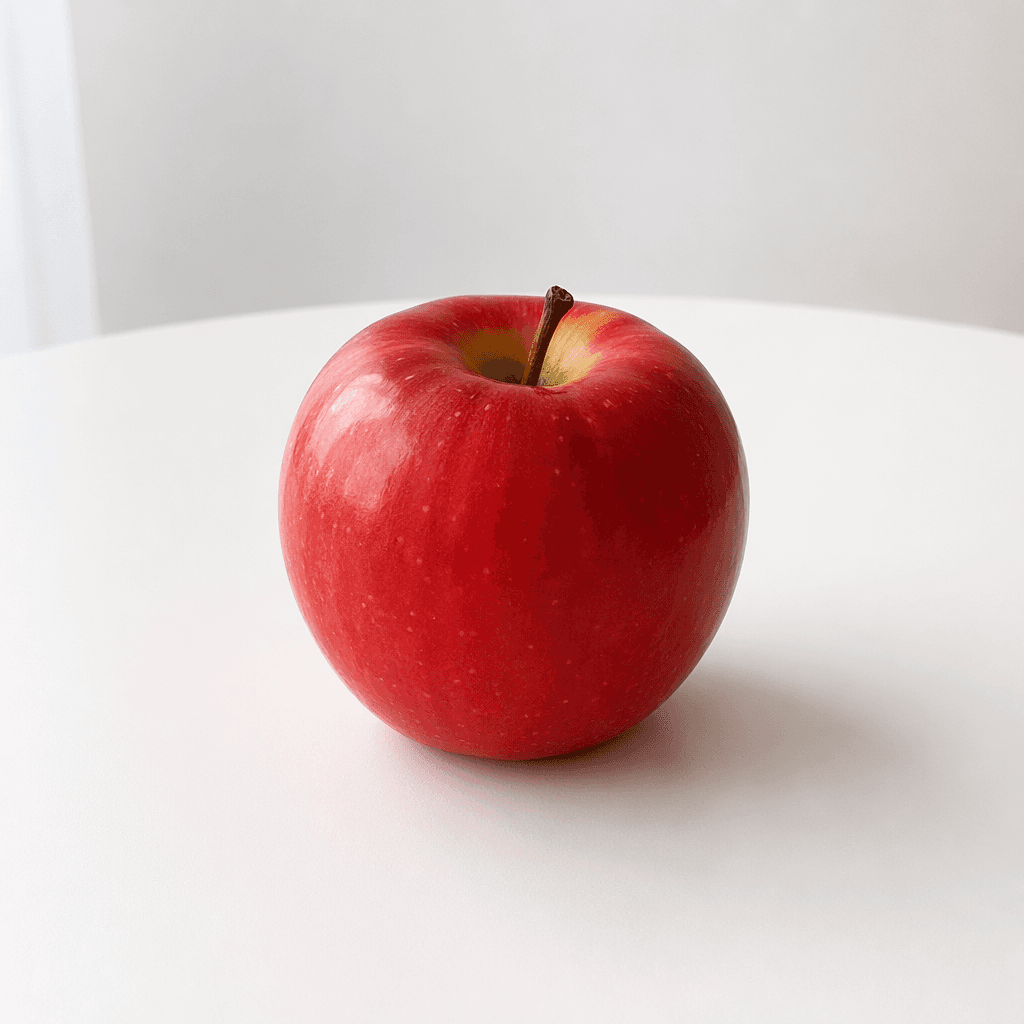

prompt="A simple red apple on a white table",

size="1024x1024",

quality="low",

output_format="png",

)

with open("output.png", "wb") as f:

f.write(base64.b64decode(resp.data[0].b64_json))Sample output:

With gemini-3.1-flash-image-preview

The same endpoint also accepts Gemini image models. Do not pass n: the gateway maps it incorrectly to the numberOfImages field, and the request fails with a 400 error. Each call produces exactly one image.

```python
import base64

from openai import OpenAI

client = OpenAI(api_key="YOUR_OFOX_API_KEY", base_url="https://api.ofox.ai/v1")

# Do not pass n for Gemini models: each call returns exactly one image.
resp = client.images.generate(
    model="google/gemini-3.1-flash-image-preview",
    prompt="A simple red apple on a white table, photorealistic",
    size="1024x1024",
    quality="low",
    output_format="png",
)

with open("output.png", "wb") as f:
    f.write(base64.b64decode(resp.data[0].b64_json))
```
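Because Gemini models reject n, getting several images from them means making several calls. The helper below is a minimal sketch of that pattern. generate_many is a hypothetical name, and treating any model ID that starts with google/ as a Gemini model is an assumption based on the one Gemini ID listed on this page. It takes an already-configured OpenAI client, so it issues the same client.images.generate calls as the examples above.

```python
from typing import Any, List


def generate_many(client: Any, model: str, prompt: str, count: int, **options: Any) -> List[str]:
    """Return `count` base64-encoded images from /v1/images/generations.

    Hypothetical helper: Gemini models reject `n`, so they get one request per
    image; other models get a single request with n=count (1-10 per the table).
    """
    # Assumption: Gemini model IDs on this gateway start with "google/".
    if model.startswith("google/"):
        images = []
        for _ in range(count):
            # No `n` argument: each Gemini call returns exactly one image.
            resp = client.images.generate(model=model, prompt=prompt, **options)
            images.append(resp.data[0].b64_json)
        return images

    # Other models: one request, letting `n` produce all images at once.
    resp = client.images.generate(model=model, prompt=prompt, n=count, **options)
    return [item.b64_json for item in resp.data]
```

For example, generate_many(client, "google/gemini-3.1-flash-image-preview", "A simple red apple on a white table", 3, size="1024x1024", quality="low") makes three requests and returns three base64 strings.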

Parameters

| Parameter | Type | Required | Description |
|---|---|---|---|
| model | string | ✅ | openai/gpt-image-2 or google/gemini-3.1-flash-image-preview |
| prompt | string | ✅ | Natural-language description of the image |
| quality | string | ✅ | auto / low / medium / high / standard / hd |
| n | number | - | 1–10, default 1. Not supported by Gemini models |
| size | string | - | auto / 1024x1024 / 1536x1024 / 1024x1536 / 256x256 / 512x512 / 1792x1024 / 1024x1792 |
| output_format | string | - | png / jpeg / webp |
| background | string | - | transparent / opaque / auto |
| stream | boolean | - | Default false |
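To catch mistakes before a request reaches the gateway, a client can check its parameters against the table above. This is a hypothetical local sketch: check_generate_params is not part of the OpenAI SDK, the allowed values are copied from the table, and it does not know which sizes or qualities each individual model accepts.

```python
from typing import Any, Dict, List

# Allowed values, copied from the parameter table above.
QUALITIES = {"auto", "low", "medium", "high", "standard", "hd"}
SIZES = {"auto", "1024x1024", "1536x1024", "1024x1536", "256x256",
         "512x512", "1792x1024", "1024x1792"}
OUTPUT_FORMATS = {"png", "jpeg", "webp"}
BACKGROUNDS = {"transparent", "opaque", "auto"}


def check_generate_params(params: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means no rule from the table is broken."""
    problems = []
    for key in ("model", "prompt", "quality"):
        if not params.get(key):
            problems.append(f"missing required field: {key}")

    quality = params.get("quality")
    if quality and quality not in QUALITIES:
        problems.append(f"unsupported quality: {quality}")

    if "n" in params:
        n = params["n"]
        # Assumption: Gemini model IDs start with "google/".
        if str(params.get("model", "")).startswith("google/"):
            problems.append("n is not supported by Gemini models")
        elif not isinstance(n, int) or not 1 <= n <= 10:
            problems.append("n must be an integer from 1 to 10")

    # Optional enumerated fields: only checked when present.
    for key, allowed in (("size", SIZES), ("output_format", OUTPUT_FORMATS),
                         ("background", BACKGROUNDS)):
        value = params.get(key)
        if value is not None and value not in allowed:
            problems.append(f"unsupported {key}: {value}")

    if "stream" in params and not isinstance(params["stream"], bool):
        problems.append("stream must be a boolean")
    return problems
```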

Response

```json
{
  "created": 1777385517,
  "data": [
    { "b64_json": "<image Base64>", "index": 0 }
  ],
  "model": "openai/gpt-image-2",
  "size": "1024x1024",
  "quality": "low",
  "usage": {
    "input_tokens": 14,
    "input_tokens_details": { "text_tokens": 14 },
    "output_tokens": 208,
    "total_tokens": 222
  }
}
```

The image is in data[0].b64_json: base64-decode it and save it to a file.
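When the response is handled as raw JSON (for example, from an HTTP client instead of the SDK), the same data[0].b64_json field applies. The sketch below decodes it, checks the leading bytes against the formats listed for output_format, and writes the file. save_first_image and detect_format are hypothetical helper names; the byte signatures are the standard PNG, JPEG, and WebP file headers.

```python
import base64
import json
from pathlib import Path
from typing import Optional


def detect_format(raw: bytes) -> Optional[str]:
    """Identify png / jpeg / webp from the file's leading bytes, else None."""
    if raw.startswith(b"\x89PNG\r\n\x1a\n"):
        return "png"
    if raw.startswith(b"\xff\xd8\xff"):
        return "jpeg"
    # WebP files are RIFF containers with "WEBP" at bytes 8-11.
    if raw[:4] == b"RIFF" and raw[8:12] == b"WEBP":
        return "webp"
    return None


def save_first_image(response_json: str, path: str) -> str:
    """Decode data[0].b64_json, write it to `path`, and return the detected format."""
    body = json.loads(response_json)
    raw = base64.b64decode(body["data"][0]["b64_json"])
    fmt = detect_format(raw)
    if fmt is None:
        raise ValueError("decoded bytes are not a PNG, JPEG, or WebP image")
    Path(path).write_bytes(raw)
    return fmt
```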

Edit images

POST https://api.ofox.ai/v1/images/edits

The request body is multipart/form-data, and you must upload an image file.

This endpoint supports only OpenAI / Azure OpenAI models. Calling it with google/gemini-3.1-flash-image-preview returns the error "Image editing is not supported for model"; to edit with Gemini, use the Gemini native protocol instead.
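Since only OpenAI / Azure OpenAI models can use /v1/images/edits, a client that works with both model families may want to route requests before sending them. A minimal sketch, assuming Gemini model IDs start with google/gemini as in the example on this page; pick_image_route is a hypothetical helper, and the Gemini native protocol itself is not shown.

```python
def pick_image_route(model: str, editing: bool) -> str:
    """Return the endpoint path for a request, following the rules on this page."""
    # Assumption: Gemini image model IDs start with "google/gemini".
    is_gemini = model.startswith("google/gemini")
    if not editing:
        # Both OpenAI and Gemini image models can generate here.
        return "/v1/images/generations"
    if is_gemini:
        # /v1/images/edits rejects Gemini models, so fail early on the client side.
        raise ValueError(f"{model}: edit through the Gemini native protocol instead")
    return "/v1/images/edits"
```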

Call

Python

import base64

from openai import OpenAI

client = OpenAI(api_key="YOUR_OFOX_API_KEY", base_url="https://api.ofox.ai/v1")

with open("apple.png", "rb") as f:

resp = client.images.edit(

model="openai/gpt-image-2",

image=f,

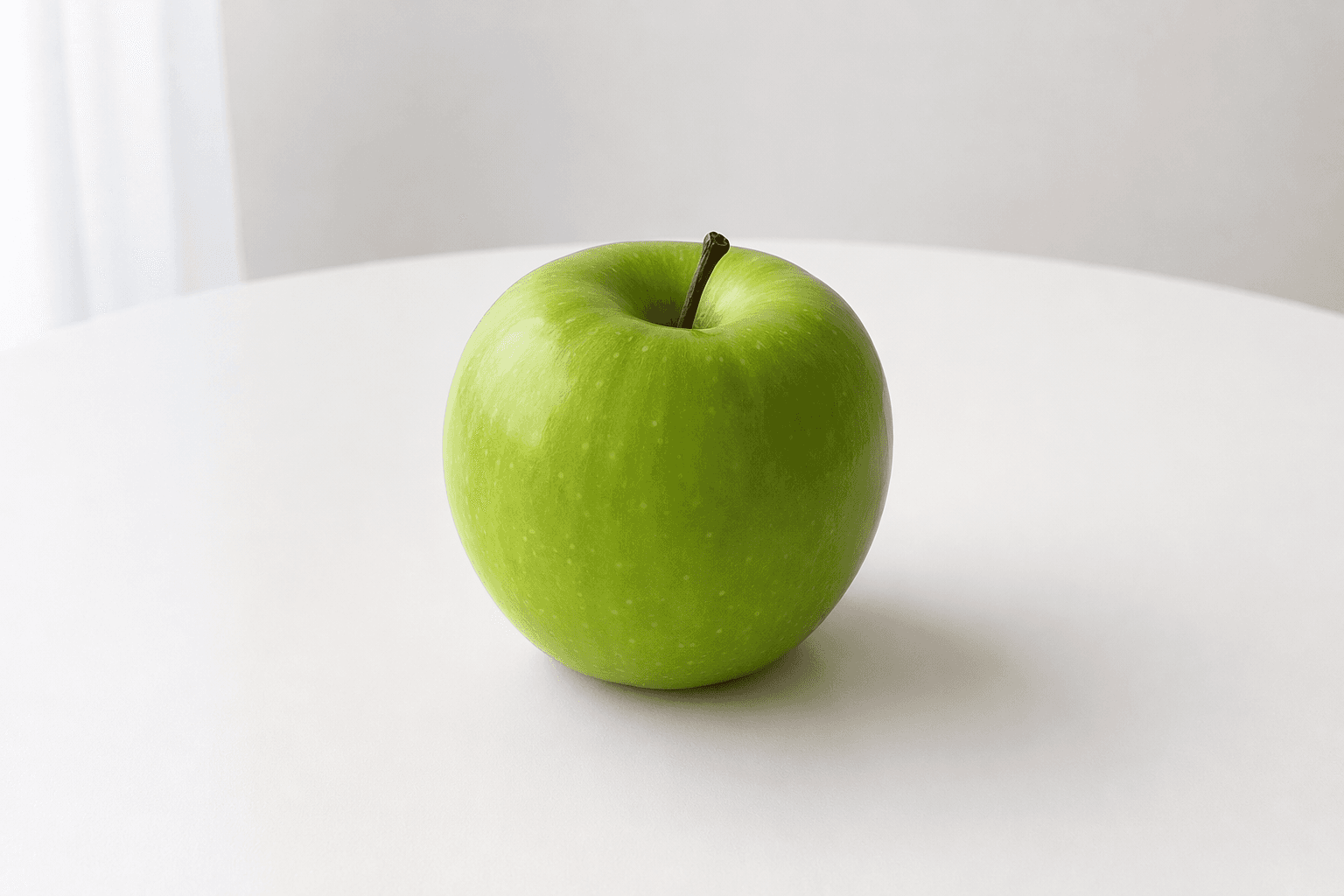

prompt="把苹果改成绿色,其他保持不变",

size="auto",

quality="low",

)

with open("apple_edited.png", "wb") as out:

out.write(base64.b64decode(resp.data[0].b64_json))The image field takes a local file path (with the @ prefix in cURL), not a URL.
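The SDK builds the multipart/form-data body for you. Without the SDK, the same request can be assembled with the Python standard library. This is a sketch under assumptions: it sends the API key as an Authorization: Bearer header (the scheme the OpenAI SDK uses), the form field names mirror the edit parameters on this page, and build_edit_request is a hypothetical helper that only builds the request without sending it.

```python
import mimetypes
import urllib.request
import uuid
from pathlib import Path

EDITS_URL = "https://api.ofox.ai/v1/images/edits"


def build_edit_request(api_key: str, image_path: str, prompt: str,
                       model: str = "openai/gpt-image-2",
                       quality: str = "low", size: str = "auto") -> urllib.request.Request:
    """Build (but do not send) a multipart/form-data POST to the edits endpoint."""
    boundary = uuid.uuid4().hex
    parts = []
    # Plain text fields: one part each.
    for name, value in (("model", model), ("prompt", prompt),
                        ("quality", quality), ("size", size)):
        parts.append((f"--{boundary}\r\n"
                      f'Content-Disposition: form-data; name="{name}"\r\n\r\n'
                      f"{value}\r\n").encode("utf-8"))
    # The image part carries the raw file bytes, not a URL.
    path = Path(image_path)
    mime = mimetypes.guess_type(path.name)[0] or "application/octet-stream"
    header = (f"--{boundary}\r\n"
              f'Content-Disposition: form-data; name="image"; filename="{path.name}"\r\n'
              f"Content-Type: {mime}\r\n\r\n").encode("utf-8")
    parts.append(header + path.read_bytes() + b"\r\n")
    # Closing boundary ends the multipart body.
    parts.append(f"--{boundary}--\r\n".encode("utf-8"))
    return urllib.request.Request(
        EDITS_URL,
        data=b"".join(parts),
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": f"multipart/form-data; boundary={boundary}",
        },
    )
```

To send it, pass the request to urllib.request.urlopen, parse the body with json.loads, and decode data[0].b64_json as in the SDK example.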

[Figure: before / after comparison. Panels: Original, Edited]

Parameters

| Parameter | Type | Required | Description |
|---|---|---|---|
| model | string | ✅ | Recommended: openai/gpt-image-2 |
| image | file | ✅ | PNG / JPEG file |
| prompt | string | ✅ | Edit instruction |
| quality | string | ✅ | low / medium / high |
| n | number | - | Default 1 |
| size | string | - | auto keeps the original size |
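The table lists PNG and JPEG as accepted image files, and the image must be an uploaded local file rather than a URL. A hypothetical pre-upload check could read the first bytes of the file and compare them with the standard PNG and JPEG signatures; check_edit_image is an illustrative name, not part of any SDK.

```python
from pathlib import Path


def check_edit_image(source: str) -> str:
    """Return "png" or "jpeg" if `source` is a local file of an accepted type."""
    if source.startswith(("http://", "https://")):
        raise ValueError("image must be a local file upload, not a URL")
    # Only the first 8 bytes are needed to recognize PNG or JPEG.
    with Path(source).open("rb") as f:
        head = f.read(8)
    if head.startswith(b"\x89PNG\r\n\x1a\n"):
        return "png"
    if head.startswith(b"\xff\xd8\xff"):
        return "jpeg"
    raise ValueError(f"{source} is not a PNG or JPEG file")
```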

Response

Same shape as generation:

```json
{
  "created": 1777385669,
  "data": [
    { "b64_json": "<edited image Base64>", "index": 0 }
  ],
  "model": "openai/gpt-image-2",
  "size": "auto",
  "quality": "low",
  "usage": {
    "input_tokens": 1041,
    "input_tokens_details": { "image_tokens": 1024, "text_tokens": 17 },
    "num_input_images": 1,
    "output_tokens": 358,
    "total_tokens": 1399
  }
}
```

usage.input_tokens_details.image_tokens is the number of tokens consumed by the input image, and num_input_images is the number of input images.
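In the sample above, the input breakdown adds up (1024 image tokens + 17 text tokens = 1041 input_tokens), and so does the total (1041 + 358 = 1399 total_tokens). The sketch below reads those fields from a raw JSON response. summarize_usage is a hypothetical helper, and treating the two sums as consistency checks is an assumption drawn from the sample responses on this page, not a documented guarantee.

```python
import json
from typing import Any, Dict


def summarize_usage(response_json: str) -> Dict[str, Any]:
    """Pull the token counts out of an images response."""
    usage = json.loads(response_json)["usage"]
    details = usage.get("input_tokens_details", {})
    image_tokens = details.get("image_tokens", 0)  # absent for text-only generation
    text_tokens = details.get("text_tokens", 0)
    return {
        "image_tokens": image_tokens,
        "text_tokens": text_tokens,
        "input_images": usage.get("num_input_images", 0),
        "output_tokens": usage["output_tokens"],
        # Both sums hold for the sample responses on this page.
        "input_adds_up": image_tokens + text_tokens == usage["input_tokens"],
        "total_adds_up": usage["input_tokens"] + usage["output_tokens"] == usage["total_tokens"],
    }
```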

See the Model Catalog for supported models and pricing.